Real-Time Stream Processing With Apache Kafka Part 3: Set Up a Single Node Kafka Cluster

Create a single node Kafka Cluster with ZooKeeper.

In the first article, we introduced Apache Kafka, and in the previous article, we discussed the Kafka Streams API. In this article, we will learn how to set up a single node Kafka Cluster on our local machine.

Environment

Below is the environment I used for the setup.

Apache Kafka (kafka_2.12-2.1.0).

Apache ZooKeeper (ZooKeeper-3.4.12).

Windows Operating System (with commands given for Linux).

The steps mentioned should be valid with other versions as well without much change. Please refer to the official documentation if you have any issues.

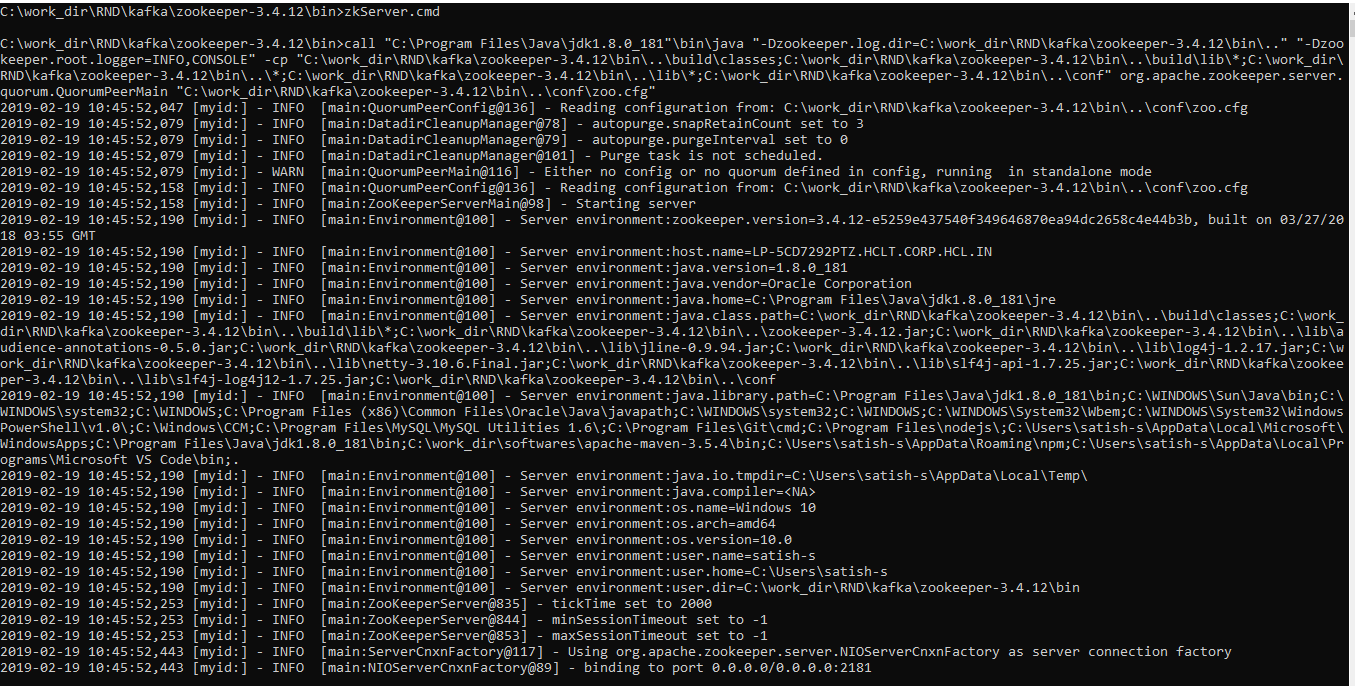

Set Up ZooKeeper

Kafka needs ZooKeeper to run. So, first, we need to set up and start ZooKeeper. There are two options for setting up ZooKeeper.

For a quick and easy setup, the Kafka distribution comes bundled with ZooKeeper, so you can start a quick-and-dirty single-node ZooKeeper instance. The convenience script for this is in the bin folder of the Kafka distribution.

Linux -> bin/zookeeper-server-start.sh config/zookeeper.properties

Windows -> bin\windows\zookeeper-server-start.bat <Path to config file>

You can also download the full-fledged ZooKeeper distribution from the download page of the official release, un-tar the distribution, and start a ZooKeeper server.

Linux -> bin/zkServer.sh start <Path to config file>

Windows -> bin\zkServer.cmd <Path to config file>
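The config file referred to above is not shown in this article. As a minimal sketch, a standalone ZooKeeper config only needs a tick time, a data directory, and a client port. The helper name write_zoo_cfg and the example paths are assumptions made for illustration; port 2181 matches the address used later in this article.

```shell
#!/bin/sh
# write_zoo_cfg is a hypothetical helper (not part of ZooKeeper).
# It writes a minimal standalone config with three settings:
#   tickTime   - basic time unit in milliseconds
#   dataDir    - where ZooKeeper stores its snapshots
#   clientPort - port that clients (here, Kafka) connect to
write_zoo_cfg() {
  cfg_path=$1   # file to write, e.g. conf/zoo.cfg
  data_dir=$2   # data directory, e.g. /tmp/zookeeper
  cat > "$cfg_path" <<EOF
tickTime=2000
dataDir=$data_dir
clientPort=2181
EOF
}

# Example (paths are assumptions):
#   write_zoo_cfg conf/zoo.cfg /tmp/zookeeper
```

The generated file can then be passed as the config file in the commands above.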

Set Up Kafka

Download Kafka from the official release downloads page and un-tar the distribution (on Windows, use a utility such as 7-Zip).

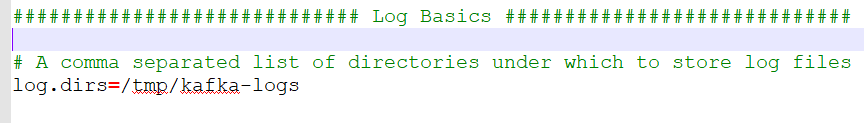

tar -xzf kafka_2.12-2.1.0.tgz

Edit the file "server.properties" in the "config" directory (you can work on a copy of the file instead). Update the property "log.dirs" to the location on the file system where the logs should be persisted. Note that these "logs" are the data files in which Kafka stores the records of each topic, not application log output.

log.dirs=<Folder location to be used for persisting logs>

For example:

Linux -> log.dirs=/tmp/kafka-logs

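Windows -> log.dirs=C:/tmp/kafka-logs (use forward slashes or doubled backslashes, because a single backslash is treated as an escape character in a properties file)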
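If you prefer to leave the shipped file untouched, the edit can be scripted. The following is a rough sketch for Linux, assuming the source file already contains a "log.dirs=" line; the helper name set_log_dirs and the file names in the example are hypothetical.

```shell
#!/bin/sh
# set_log_dirs is a hypothetical helper: it copies a properties file and
# rewrites its log.dirs line, leaving the original file unchanged.
# Assumption: the new directory contains no '|' or '&' characters,
# which have a special meaning in the sed expression below.
set_log_dirs() {
  src=$1       # original properties file
  dst=$2       # copy to create
  new_dir=$3   # directory Kafka should use for its data logs
  # '|' is used as the sed delimiter so slashes in the path need no escaping
  sed "s|^log\.dirs=.*|log.dirs=$new_dir|" "$src" > "$dst"
}

# Example (file names are assumptions):
#   set_log_dirs config/server.properties config/server-local.properties /tmp/kafka-logs
```

The copy can then be passed to the server start script in place of the original file.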

Start the server by following command in the bin directory

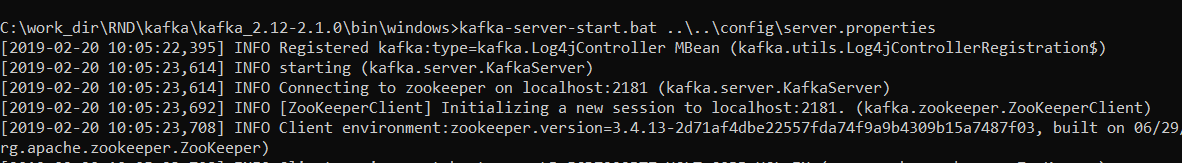

Linux -> bin/kafka-server-start.sh config\server.properties

Windows -> kafka-server-start.bat ..\..\config\server.propertiesIf your configuration files are valid, then Kafka will start at pre-configured port. Port 9092 is the default port.
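The broker takes a few seconds to start, so a script that continues straight away may fail to connect. One way to wait for it is a small retry loop, sketched below. The helper name retry is hypothetical, and the port check in the example assumes that netcat (nc) is installed on your machine.

```shell
#!/bin/sh
# retry ATTEMPTS DELAY COMMAND [ARGS...]
# Hypothetical helper: runs COMMAND until it succeeds or ATTEMPTS runs
# have failed, sleeping DELAY seconds between attempts.
# Returns 0 on the first success, 1 if every attempt failed.
retry() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while :; do
    "$@" && return 0
    [ "$i" -ge "$attempts" ] && return 1
    i=$((i + 1))
    sleep "$delay"
  done
}

# Example, assuming nc is available: wait up to about 30 seconds
# for something to listen on the default Kafka port.
#   retry 30 1 nc -z localhost 9092 && echo "broker is up"
```

An open port only shows that a process is listening, so the topic test in the next section remains the real check.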

Test Your Created Cluster

Create a topic in Kafka

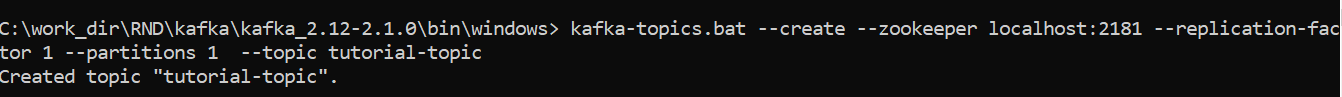

Use the following command to create a topic.

kafka-topics.sh --create --zookeeper <zookeeper_host>:<zookeeper_port> --replication-factor <factor> --partitions <partition> --topic <topic-name>

Linux -> bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic tutorial-topic

Windows -> bin\windows\kafka-topics.bat --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic tutorial-topic

This will create a topic named "tutorial-topic" with a replication factor of one and a single partition.
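To confirm that the topic exists, the same script can list and describe topics. The sketch below wraps those two calls for Linux. KAFKA_HOME is an assumed variable pointing at the extracted Kafka directory, and the function names list_topics and describe_topic are hypothetical; the --list and --describe options belong to the kafka-topics script in this Kafka version.

```shell
#!/bin/sh
# KAFKA_HOME is an assumption: the directory where Kafka was extracted.
# It defaults to the current directory.
KAFKA_HOME=${KAFKA_HOME:-.}

# List all topics known to the cluster.
# $1 (optional): ZooKeeper address, default localhost:2181
list_topics() {
  "$KAFKA_HOME/bin/kafka-topics.sh" --list --zookeeper "${1:-localhost:2181}"
}

# Show partition and replica details for one topic.
# $1: topic name; $2 (optional): ZooKeeper address
describe_topic() {
  "$KAFKA_HOME/bin/kafka-topics.sh" --describe \
    --zookeeper "${2:-localhost:2181}" --topic "$1"
}

# Example:
#   list_topics
#   describe_topic tutorial-topic
```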

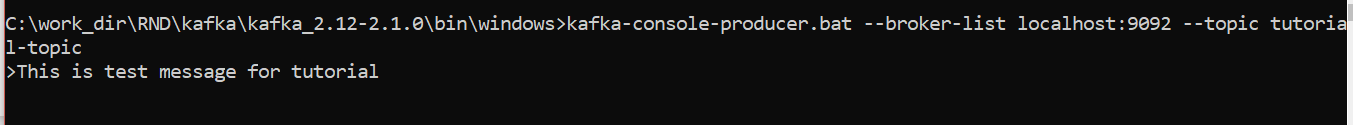

Send Records to a Newly Created Topic

We will use the Kafka console producer to send some messages to the topic we created in the earlier step.

Linux -> bin/kafka-console-producer.sh --broker-list localhost:9092 --topic tutorial-topic

Windows -> bin\windows\kafka-console-producer.bat --broker-list localhost:9092 --topic tutorial-topic

Type the message that you want to publish to the Kafka topic and press Enter. Each line is sent as a separate record.

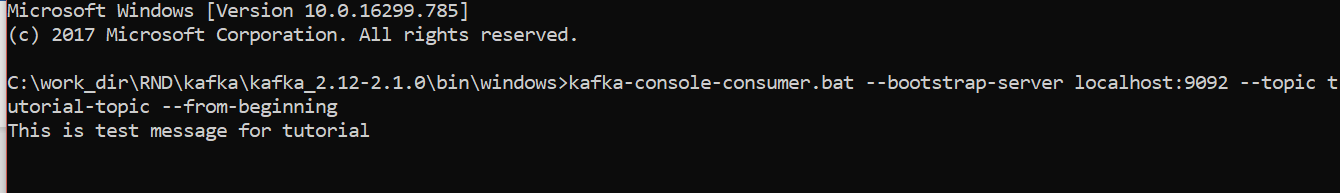

Consume Records Using Console Consumer

For quick testing, let's start a handy console consumer, which reads messages from a specified topic and displays them back on the console. We will use the same consumer to read all of our messages from this point forward. Use the following command:

Linux -> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic tutorial-topic --from-beginning

Windows -> bin\windows\kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic tutorial-topic --from-beginning

If your settings are valid, then you should be able to see the messages that you publish to the topic.
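The produce and consume steps can also be combined into one non-interactive check. This is only a sketch for Linux, assuming the broker listens on localhost:9092 and that KAFKA_HOME (an assumed variable) points at the extracted Kafka directory; the function name smoke_test is hypothetical. It relies on the console producer reading records from standard input and on the console consumer's --timeout-ms option, which makes the consumer exit once no message has arrived for the given interval.

```shell
#!/bin/sh
# KAFKA_HOME is an assumption: the directory where Kafka was extracted.
KAFKA_HOME=${KAFKA_HOME:-.}

# smoke_test TOPIC MESSAGE
# Hypothetical helper: publishes MESSAGE to TOPIC, then reads the topic
# from the beginning and succeeds only if MESSAGE comes back as a line.
smoke_test() {
  topic=$1
  msg=$2
  # The console producer sends each line of standard input as one record.
  printf '%s\n' "$msg" |
    "$KAFKA_HOME/bin/kafka-console-producer.sh" \
      --broker-list localhost:9092 --topic "$topic" > /dev/null
  # The consumer exits after 10 seconds without new messages.
  "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
    --bootstrap-server localhost:9092 --topic "$topic" \
    --from-beginning --timeout-ms 10000 2> /dev/null |
    grep -qxF "$msg"
}

# Example:
#   smoke_test tutorial-topic "hello kafka" && echo "cluster works"
```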

In this article, we have set up a single node Kafka Cluster on our local machine. In the next article, we will discuss a use case for real-time analytics using Kafka Streams.

Please share any comments/feedback you may have!
