Go Microservices, Part 11: Hystrix and Resilience

Learn how to set up more resilient inter-service communication for your microservices written with Go using the Hystrix circuit breaker.


In this part of the Go microservices blog series, we'll explore how to make our inter-service communication resilient with the circuit breaker pattern. We'll use a Go implementation of Netflix Hystrix and the retries package of go-resilience.

Contents

- Overview

- The circuit breaker

- Resilience through retrier

- Landscape overview

- Go code - adding circuit breaker and retrier

- Deploy & run

- Hystrix dashboard and Netflix Turbine

- Turbine & service discovery

- Summary

Source code

The finished source can be cloned from GitHub:

> git clone https://github.com/callistaenterprise/goblog.git

> git checkout p11

1. overview

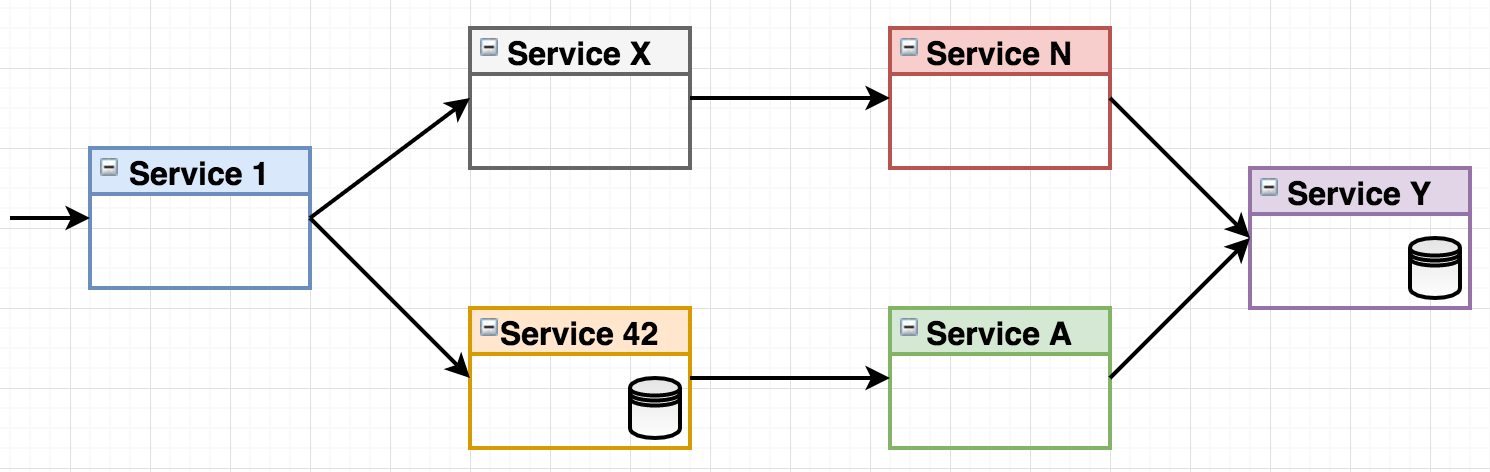

consider the following make-believe system landscape where a number of microservices handle an incoming request:

figure 1 - system landscape.


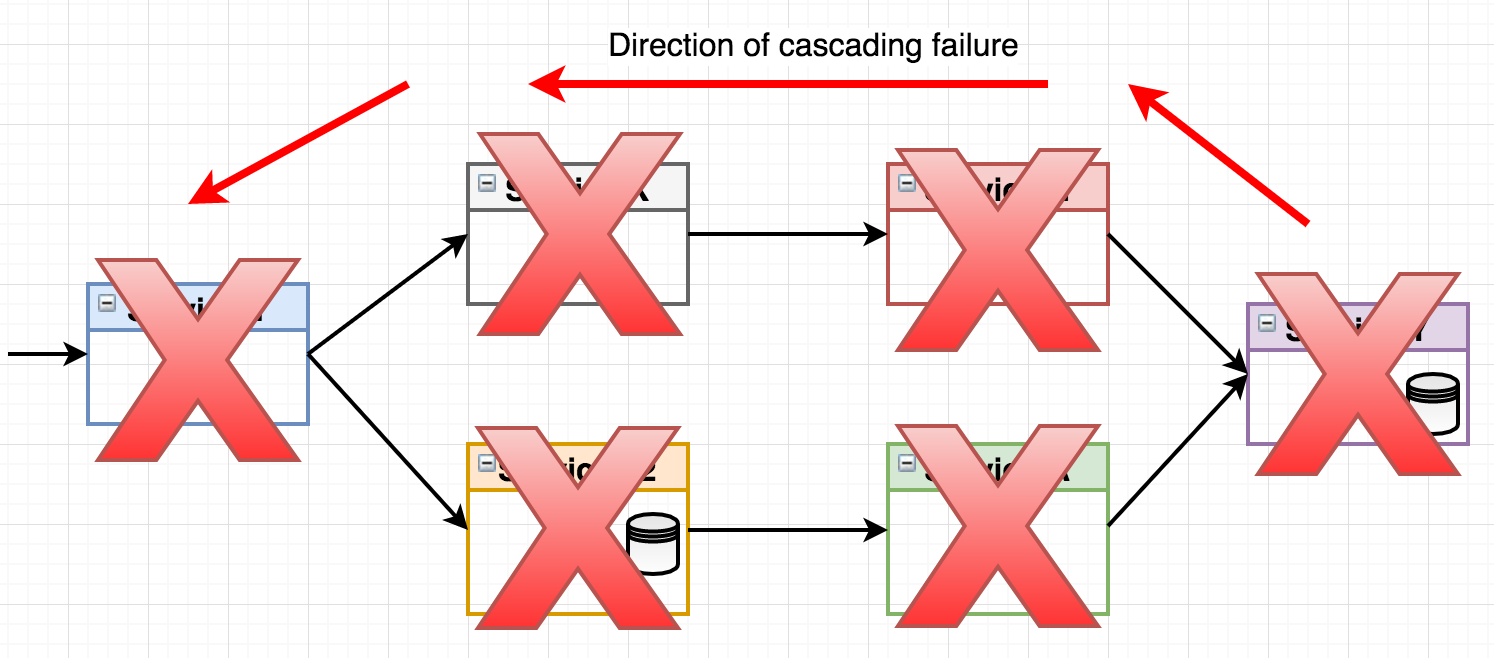

What happens if the right-most service, "Service Y", fails? Let's say it accepts incoming requests but then just keeps them waiting, perhaps because the underlying data storage isn't responsive. The waiting requests of the consumer services (Service N and Service A) will eventually time out. If your system handles tens or hundreds of requests per second, though, thread pools will fill up and memory usage will skyrocket. Meanwhile, irritated end consumers (those who called Service 1) will be left waiting for their responses. This may even cascade through the call chain all the way back to the entry point service, effectively grinding your entire landscape to a halt.

Figure 2 - Cascading failure.


while a properly implemented healthcheck will eventually trigger a service restart of the failing service through mechanisms in the container orchestrator, that may take several minutes. meanwhile, an application under heavy load will suffer from cascading failures unless we’ve actually implemented patterns to handle this situation. this is where the circuit breaker pattern comes in.

2. the circuit breaker

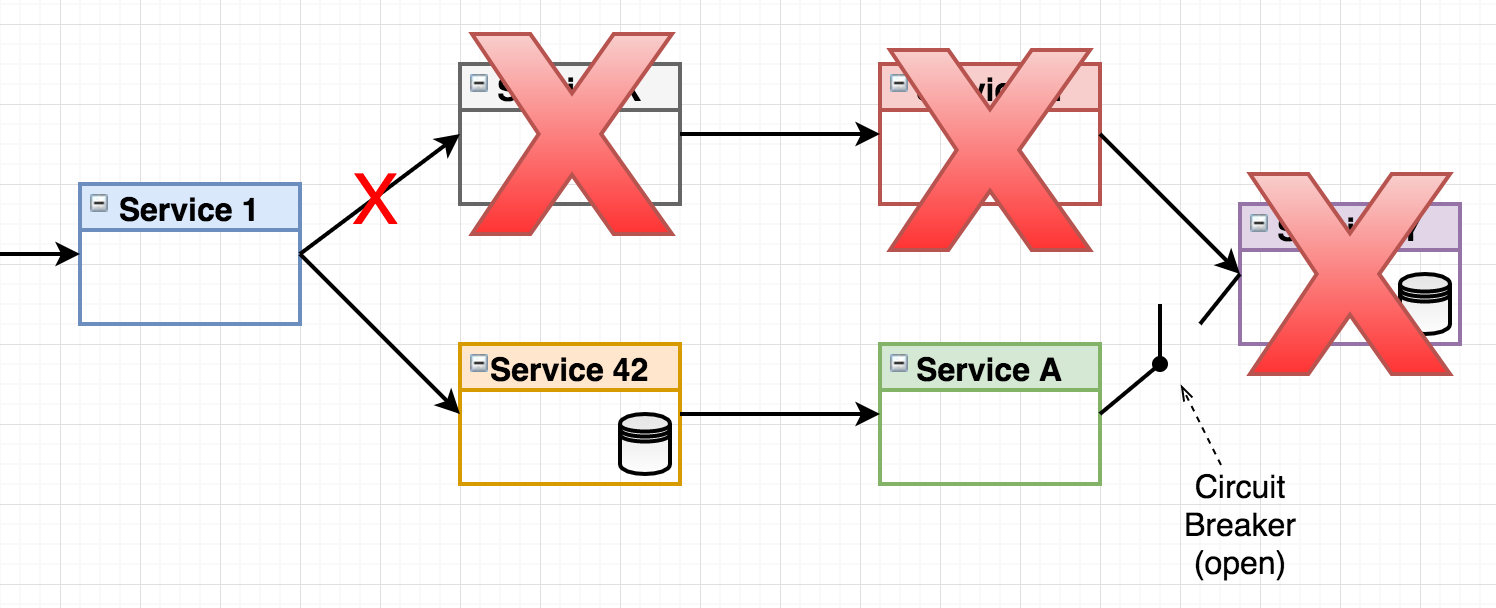

figure 3 - circuit breaker.


here we see how a circuit breaker logically exists between service a and service y (the actual breaker is always implemented in the consumer service). the concept of the circuit breaker comes from the domain of electricity. thomas edison filed a patent application back in 1879. the circuit breaker is designed to open when a failure is detected, making sure cascading side effect such as your house burning down or microservices crashing doesn’t happen. the hystrix circuit breaker basically works like this:

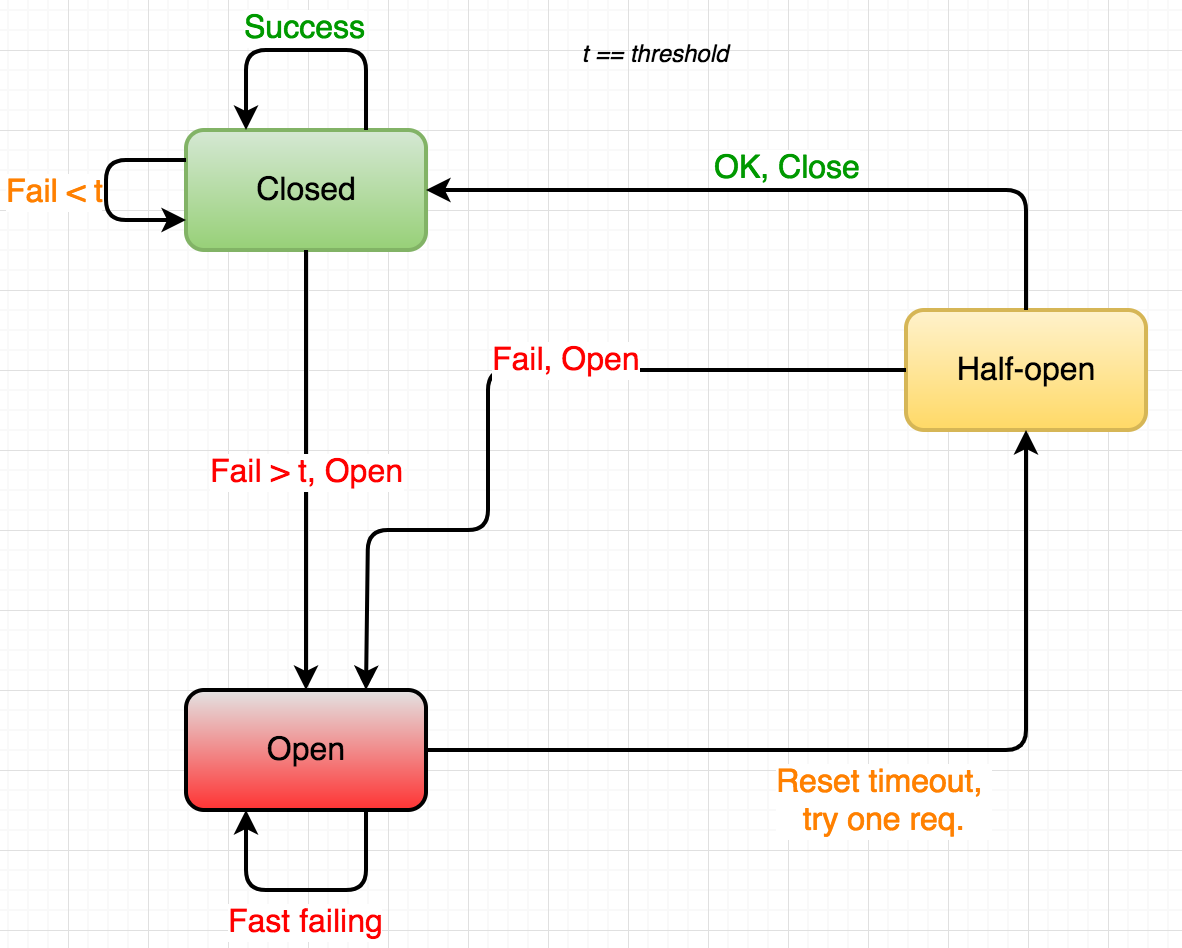

figure 4 - circuit breaker states.


2.1 States

- Closed: in normal operation, the circuit breaker is closed, letting requests (or electricity) pass through.

- Open: whenever a failure is detected, the circuit opens. A failure might be N failed requests within a time span, one or more requests taking too long, or a massive spike of current. Once open, the breaker makes the consumer service short-circuit instead of waiting for the failing producer service.

- Half-open: periodically, the circuit breaker lets a request pass through. If it succeeds, the circuit can be closed again; otherwise, it stays open.

There are two key takeaways with Hystrix when the circuit is open:

- Hystrix lets us provide a fallback function that is executed instead of the normal request, which gives us fallback behavior. Sometimes we can't do without the data or service of the broken producer. Just as often, though, our fallback method can return a default result or a well-structured error message, or perhaps call a backup service.

- Stopping cascading failures. While the fallback behavior is very useful, the most important part of the circuit breaker pattern is that we immediately return some response to the calling service. No thread pools fill up with pending requests, there are no timeouts, and hopefully there are fewer annoyed end consumers.

3. Resilience through retrier

The circuit breaker makes sure that if a given producer service goes down, we can both handle the problem gracefully and save the rest of the application from cascading failures. However, in a microservice environment we seldom have only a single instance of a given service. Why treat the first attempt as a failure inside the circuit breaker if you have many instances and perhaps just one of them has problems? This is where the retrier comes in:

Our context is Go microservices running in a Docker Swarm mode landscape. Say we have 3 instances of a given producer service. We know that the Swarm load balancer will automatically round-robin requests addressed to that service. So instead of failing inside the breaker, why not have a mechanism that automatically performs a configurable number of retries, with some kind of backoff?

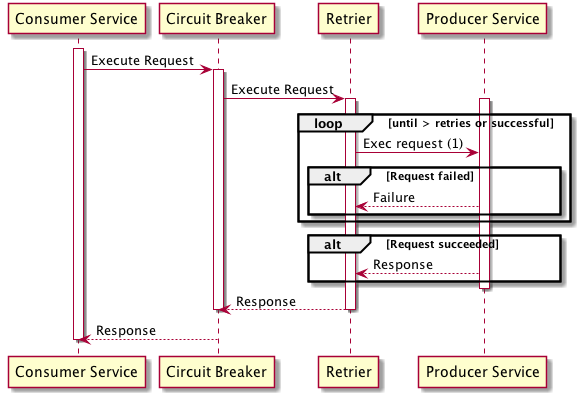

Figure 5 - Retrier.


The sequence diagram is perhaps somewhat simplified, but it should explain the key concepts:

- The retrier runs inside the circuit breaker.

- The circuit breaker only considers the request failed if all retry attempts failed. In fact, the circuit breaker has no notion of what's going on inside it; it only cares whether the operation it encapsulates returns an error or not.

in this blog post, we’ll use the retries package of go-resilience .

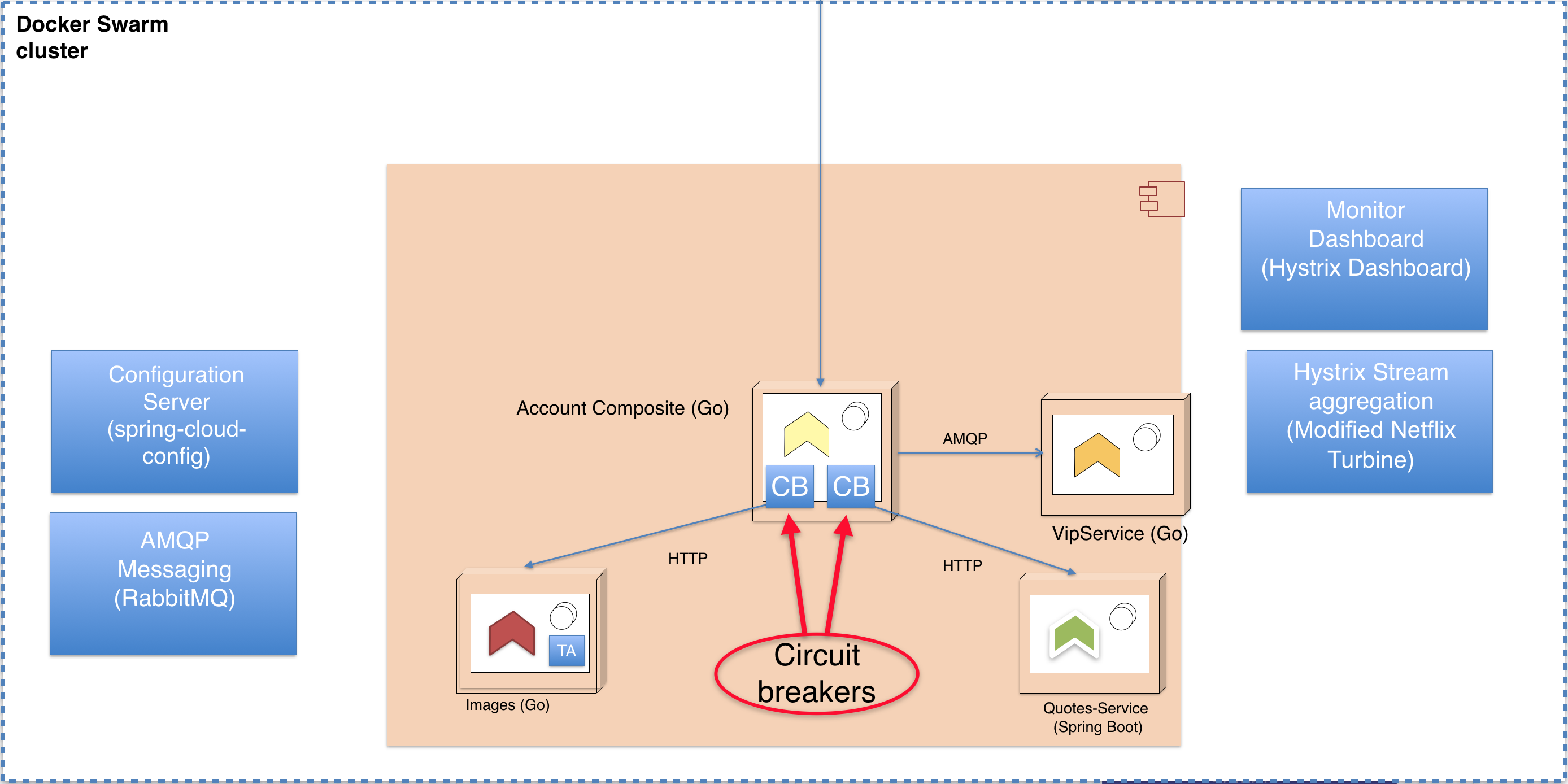

4. landscape overview

in this blog post and the example code we’re going to implement later, we’ll add circuit breakers to the accountservice for its outgoing calls to the quotes-service and a new service called imageservice . we will also install services running the netflix hystrix monitoring dashboard and netflix turbine hystrix stream aggregator. more on those two later.

figure 6 - landscape overview.


5. Go code - adding circuit breaker and retrier

Finally, time for some Go code! In this part we're introducing a brand new underlying service, the imageservice. However, we won't spend any precious blog space describing it. It just returns a URL for a given accountId along with the IP address of the serving container. It adds a bit more complexity to the landscape, which makes it suitable for showcasing multiple named circuit breakers in a single service.

Let's dive into our accountservice and the /goblog/accountservice/service/handlers.go file. From the GetAccount func, we want to call the underlying quotes-service and the new imageservice using go-hystrix and go-resilience/retrier. Here's the starting point for the quotes-service call:

```go
func getQuote() (model.Quote, error) {
	body, err := cb.CallUsingCircuitBreaker("quotes-service", "http://quotes-service:8080/api/quote?strength=4", "GET")
	// Code handling response or err below, omitted for clarity
	...
}
```

5.1 Circuit breaker code

The cb.CallUsingCircuitBreaker func is something I've added to our /common/circuitbreaker/hystrix.go file. It's a bit on the simplistic side, but it basically wraps the go-hystrix and retries libraries. I've deliberately made the code more verbose and less compact for readability reasons.

```go
func CallUsingCircuitBreaker(breakerName string, url string, method string) ([]byte, error) {
	output := make(chan []byte, 1) // Declare the channel where the hystrix goroutine will put success responses.

	errors := hystrix.Go(breakerName, // Pass the name of the circuit breaker as first parameter.

		// 2nd parameter, the inlined func to run inside the breaker.
		func() error {
			// Create the request. A malformed method or URL is reported to hystrix as an error.
			req, err := http.NewRequest(method, url, nil)
			if err != nil {
				return err
			}

			// For hystrix, forward the err from the retrier. It's nil if successful.
			return callWithRetries(req, output)
		},

		// 3rd parameter, the fallback func. In this case, we just do a bit of logging and return the error.
		func(err error) error {
			logrus.Errorf("in fallback function for breaker %v, error: %v", breakerName, err.Error())
			circuit, _, _ := hystrix.GetCircuit(breakerName)
			logrus.Errorf("circuit state is: %v", circuit.IsOpen())
			return err
		})

	// Response and error handling. If the call was successful, the output channel gets the response.
	// Otherwise, the errors channel gives us the error.
	select {
	case out := <-output:
		logrus.Debugf("call in breaker %v successful", breakerName)
		return out, nil

	case err := <-errors:
		return nil, err
	}
}
```

As seen above, go-hystrix lets us name circuit breakers, and we can provide fine-grained configuration for each breaker by name. Note that the hystrix.Go func executes the actual work in a new goroutine. The result is later passed through the output channel to the select statement, which blocks until either the output or the errors channel receives a message. The output channel is buffered with a capacity of 1, so the worker goroutine can always deliver its result without blocking. This holds even if the select has already returned because of an error such as a timeout.

5.2 Retrier code

Next, the callWithRetries(…) func that uses the retrier package of go-resilience:

```go
func callWithRetries(req *http.Request, output chan []byte) error {
	// Create a retrier with constant backoff: up to `retries` (3) retries after the
	// first attempt, with a 100 ms sleep between them.
	r := retrier.New(retrier.ConstantBackoff(retries, 100*time.Millisecond), nil)

	// This counter is just for getting some logging for showcasing, remove in production code.
	attempt := 0

	// Retrier works similar to hystrix, we pass the actual work (doing the HTTP request) in a func.
	err := r.Run(func() error {
		attempt++

		// Do HTTP request and handle response. If successful, pass the response body over the
		// output channel, otherwise, do a bit of error logging and return the err.
		resp, err := client.Do(req)
		if err == nil {
			defer resp.Body.Close() // always release the connection, also on non-2xx responses
			if resp.StatusCode >= 200 && resp.StatusCode < 300 {
				responseBody, err := ioutil.ReadAll(resp.Body)
				if err == nil {
					output <- responseBody
					return nil
				}
				return err
			}
			err = fmt.Errorf("status was %v", resp.StatusCode)
		}

		logrus.Errorf("retrier failed, attempt %v", attempt)
		return err
	})
	return err
}
```

5.3 Unit testing

I've created three unit tests in the /goblog/common/circuitbreaker/hystrix_test.go file, which run the CallUsingCircuitBreaker() func. We won't go through all the test code; one example should be enough. In this test we use gock to mock responses to three outgoing HTTP requests: two failed and, finally, one successful:

```go
func TestCallUsingResilienceLastSucceeds(t *testing.T) {
	defer gock.Off()

	buildGockMatcherTimes(500, 2) // First two requests respond with 500 Server Error

	body := []byte("some response")
	buildGockMatcherWithBody(200, string(body)) // Next (3rd) request responds with 200 OK

	hystrix.Flush() // Reset circuit breaker state

	Convey("Given a call request", t, func() {
		Convey("When", func() {
			// Call a single time; this becomes three HTTP requests (two failures, then a successful retry)
			bytes, err := CallUsingCircuitBreaker("test", "http://quotes-service", "GET")

			Convey("Then", func() {
				// Assert no error and expected response
				So(err, ShouldBeNil)
				So(bytes, ShouldNotBeNil)
				So(string(bytes), ShouldEqual, string(body))
			})
		})
	})
}
```

The console output of the test above looks like this:

```
ERRO[2017-09-03T10:26:28.106] retrier failed, attempt 1
ERRO[2017-09-03T10:26:28.208] retrier failed, attempt 2
DEBU[2017-09-03T10:26:28.414] call in breaker test successful
```

The other tests assert that the Hystrix fallback func runs if all retries fail. They also check that the Hystrix circuit breaker opens if a sufficient number of requests fail.

5.4 Configuring Hystrix

Hystrix circuit breakers can be configured in a variety of ways. Below is a simple example that sets two values. SleepWindow is how long, in milliseconds, the circuit stays open before a trial request is let through. RequestVolumeThreshold is the minimum number of requests within the rolling window before the breaker can trip at all.

```go
hystrix.ConfigureCommand("quotes-service", hystrix.CommandConfig{
	SleepWindow:            5000,
	RequestVolumeThreshold: 10,
})
```

See the docs for details. My /common/circuitbreaker/hystrix.go "library" has some code that automatically tries to pick up configuration values from the config server, using this naming convention:

```
hystrix.command.[circuit name].[config property] = [value]
```

Example (in accountservice-test.yml):

```
hystrix.command.quotes-service.sleepwindow: 5000
```
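The naming convention maps naturally onto a small parser. The sketch below is hypothetical and is not the actual code in /common/circuitbreaker/hystrix.go. It turns flat `hystrix.command.[circuit name].[config property]` keys into per-circuit settings and matches property names case-insensitively. It also reuses a few field names from go-hystrix's `hystrix.CommandConfig`.

```go
package main

import (
	"strconv"
	"strings"
)

// CommandSettings holds a few settings, named like go-hystrix's CommandConfig fields.
type CommandSettings struct {
	Timeout                int
	SleepWindow            int
	RequestVolumeThreshold int
	ErrorPercentThreshold  int
}

const hystrixPrefix = "hystrix.command."

// parseHystrixProps picks hystrix.command.[circuit name].[config property] = [value]
// entries out of a flat property map. Unknown properties and non-integer values are ignored.
func parseHystrixProps(props map[string]string) map[string]*CommandSettings {
	out := map[string]*CommandSettings{}
	for key, raw := range props {
		if !strings.HasPrefix(key, hystrixPrefix) {
			continue
		}
		rest := strings.TrimPrefix(key, hystrixPrefix)
		dot := strings.LastIndex(rest, ".") // the property is the last segment
		if dot <= 0 {
			continue
		}
		circuit, prop := rest[:dot], strings.ToLower(rest[dot+1:])
		val, err := strconv.Atoi(strings.TrimSpace(raw))
		if err != nil {
			continue
		}
		s, ok := out[circuit]
		if !ok {
			s = &CommandSettings{}
			out[circuit] = s
		}
		switch prop {
		case "timeout":
			s.Timeout = val
		case "sleepwindow":
			s.SleepWindow = val
		case "requestvolumethreshold":
			s.RequestVolumeThreshold = val
		case "errorpercentthreshold":
			s.ErrorPercentThreshold = val
		}
	}
	return out
}
```

Each resulting entry could then be copied into a `hystrix.CommandConfig` and passed to `hystrix.ConfigureCommand(name, config)`.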

6. Deploy and run

The git branch of this part contains updated microservice code and ./copyall.sh, which builds and deploys the new imageservice. Nothing new, really, so let's take a look at the circuit breaker in action.

In this scenario, we'll run a little load test that by default sends 10 requests per second to the /accounts/{accountId} endpoint.

> go run *.go -zuul=false

(Never mind the -zuul property; that's for a later part of the blog series.)

Let's say we have 2 instances each of the imageservice and the quotes-service. With all services running OK, a few sample responses might look like this:

{"name":"person_6","servedby":"10.255.0.19","quote":{"quote":"to be or not to be","ipaddress":"10.0.0.22"},"imageurl":"http://imageservice:7777/file/cake.jpg"}

{"name":"person_23","servedby":"10.255.0.21","quote":{"quote":"you, too, brutus?","ipaddress":"10.0.0.25"},"imageurl":"http://imageservice:7777/file/cake.jpg"}

If we kill the quotes-service:

> docker service scale quotes-service=0

we'll see almost right away (due to "connection refused") that the fallback function has kicked in and is returning the fallback quote:

{"name":"person_23","servedby":"10.255.0.19","quote":{"quote":"may the source be with you, always.","ipaddress":"circuit-breaker"},"imageurl":"http://imageservice:7777/file/cake.jpg"}

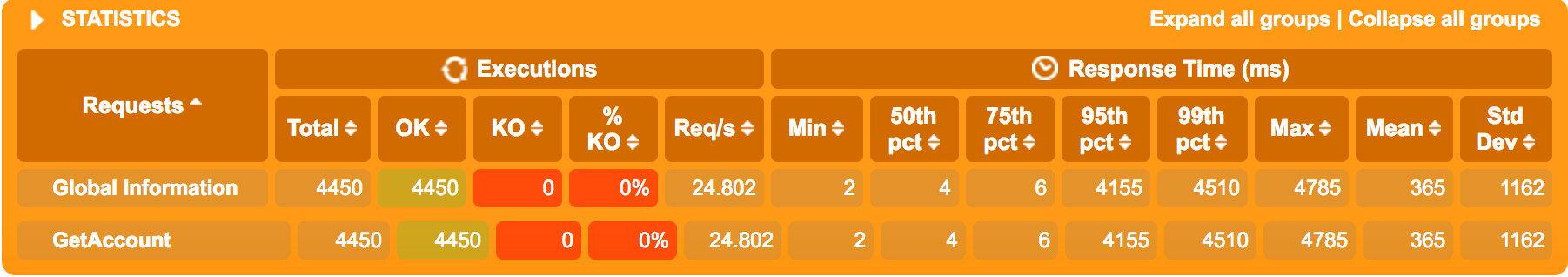

6.2 what happens under load?

what’s a lot more interesting is to see how the application as a whole reacts if the quote-service starts to respond really slowly. there’s a little “feature” in the quotes-service that allows us to specify a hashing strength when calling the quotes-service.

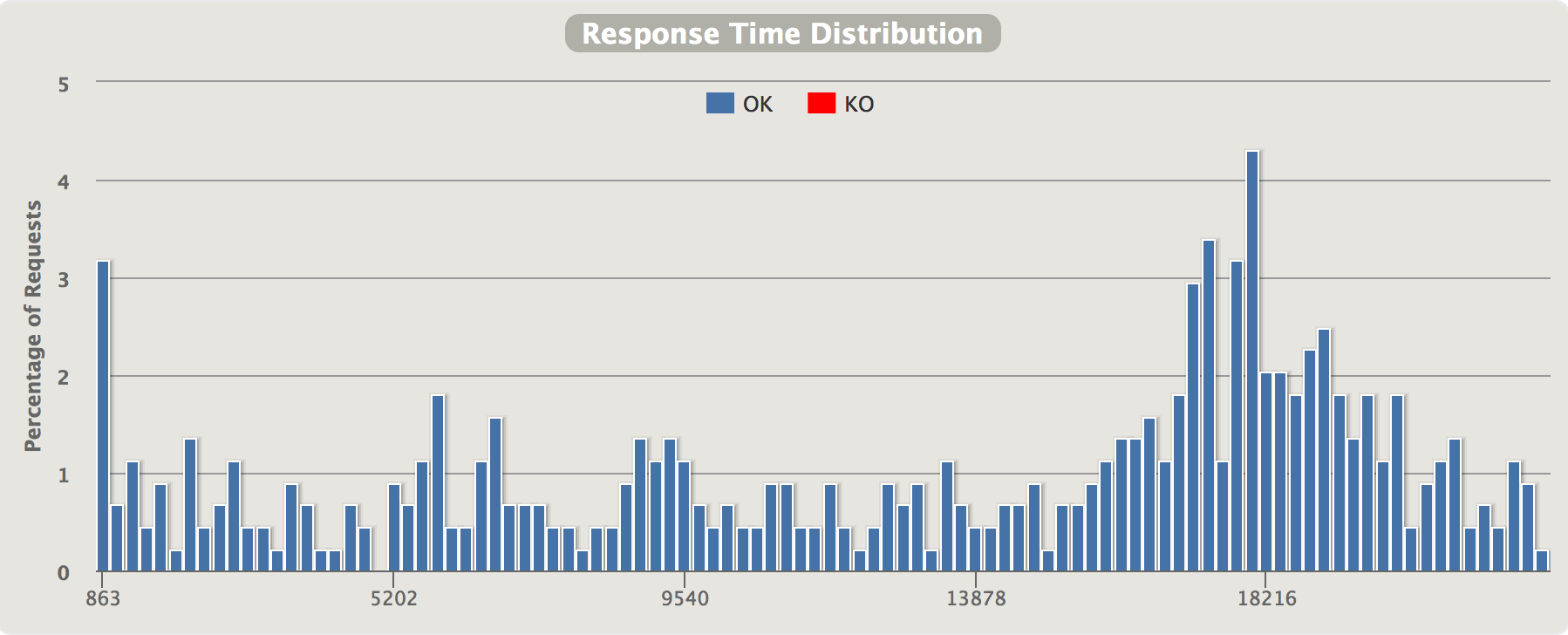

http://quotes-service:8080/api/quote?strength=4such a request is typically completed in about 10 milliseconds. by changing the strength query-param to ?strength=13 the quotes-service will use a lot of cpu and need slightly less than a second to complete. this is a perfect case for seeing how our circuit breaker reacts when the system comes under load and probably is getting cpu-starved. let’s use gatling for two scenarios - one where we’ve disabled the circuit breaker and one with the circuit breaker active.

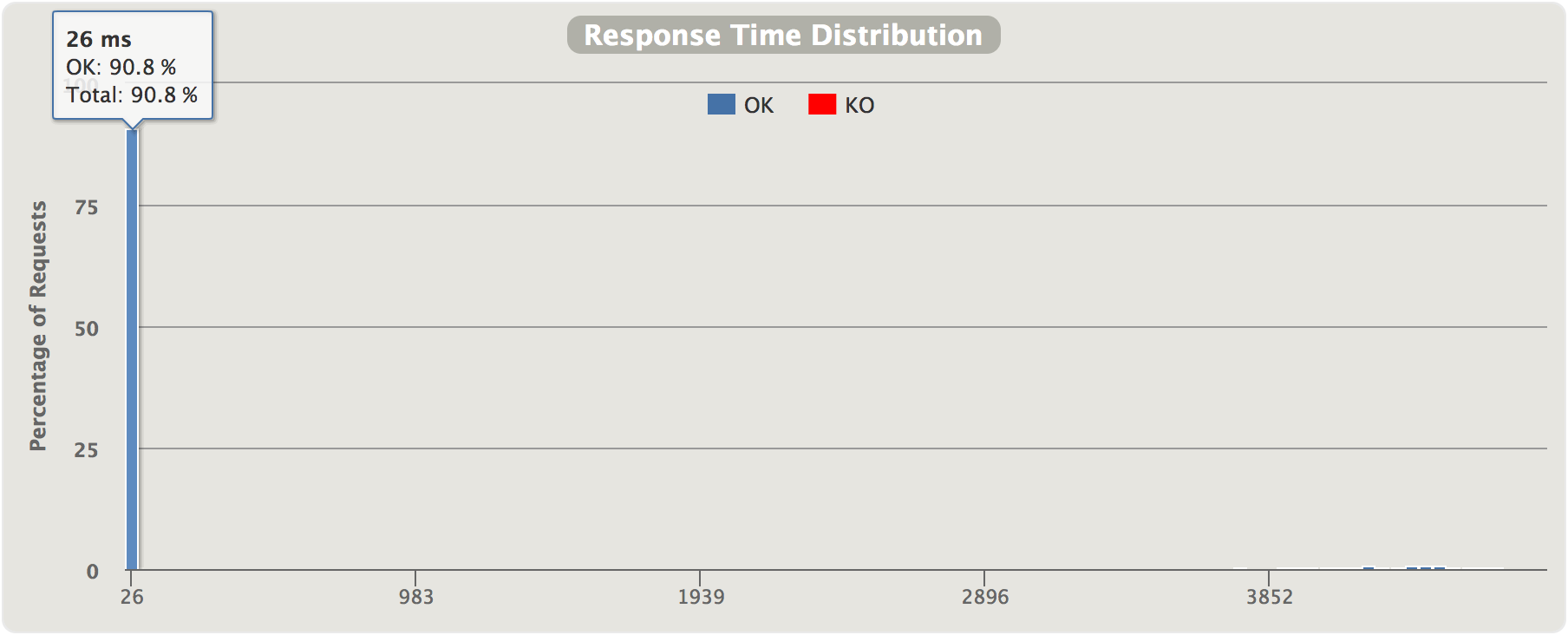

6.2.1 Disabled circuit breaker

No circuit breaker, just the standard http.Get(url string).

The very first request needs slightly less than a second, but then latencies increase, topping out at 15-20 seconds per request. Peak throughput of our two quotes-service instances (both using 100% CPU) is no more than approx. 3 req/s, since they're fully CPU-starved. In all honesty, they're both running on the same Swarm node on my laptop, which has 2 CPU cores shared across all running microservices.

6.2.2 With circuit breaker

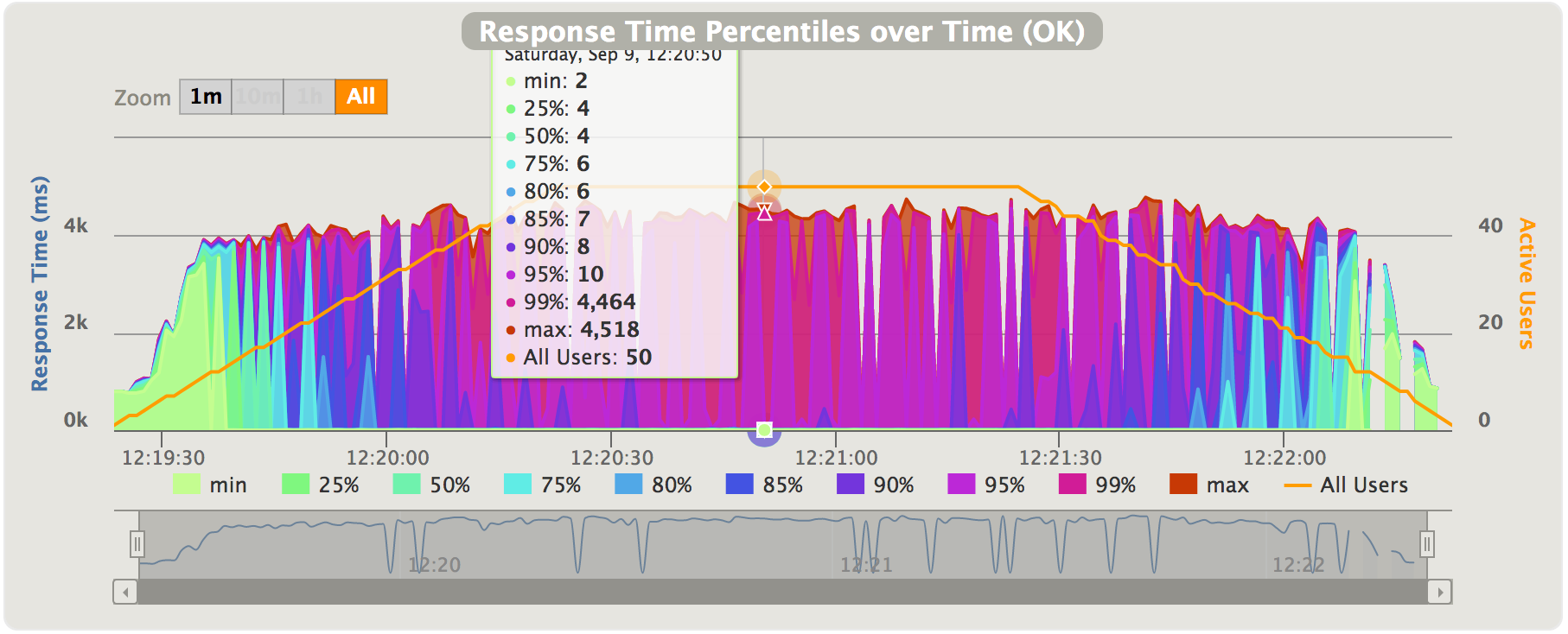

Circuit breaker with the timeout set to 5000 ms. When enough requests have waited more than 5000 ms, the circuit opens and the fallback quote is returned. Note the tiny bars around the 4-5 second mark on the far right. Those are requests made while the circuit was in the "half-open" state, plus a few early requests from before the circuit opened.

In this diagram, we see the distribution of response times halfway through the test. At the marked data point, the breaker is certainly open. The 95th percentile is 10 ms, while the 99th percentile is over 4 seconds. In other words, about 95% of requests are handled within 10 ms. A small percentage (probably half-open retries) take up to 5 seconds before timing out.


During the first 15 seconds or so (the greenish/yellowish part), more or less all requests show linearly increasing latencies approaching the 5000 ms threshold. As expected, the behavior is similar to running without the circuit breaker: requests are handled successfully but take a lot of time. Then the increasing latencies trip the breaker. Response times immediately drop back to a few milliseconds instead of ~5 seconds for the majority of requests. As stated above, the breaker lets a request through every once in a while when in the "half-open" state. The two quotes-service instances can handle a few of those "half-open" requests. However, they cannot serve more than a few req/s before latencies get too high again, so the breaker trips anew almost immediately.

We see two neat things about circuit breakers in action here:

- The open circuit breaker keeps latencies to a minimum when the underlying quotes-service has a problem. It also "reacts" quite quickly, significantly faster than any healthcheck, automatic scaling or service restart will.

- The 5000 ms timeout of the breaker makes sure no user has to wait ~15 seconds for their response. (Of course, you can handle timeouts in other ways than just using circuit breakers.)

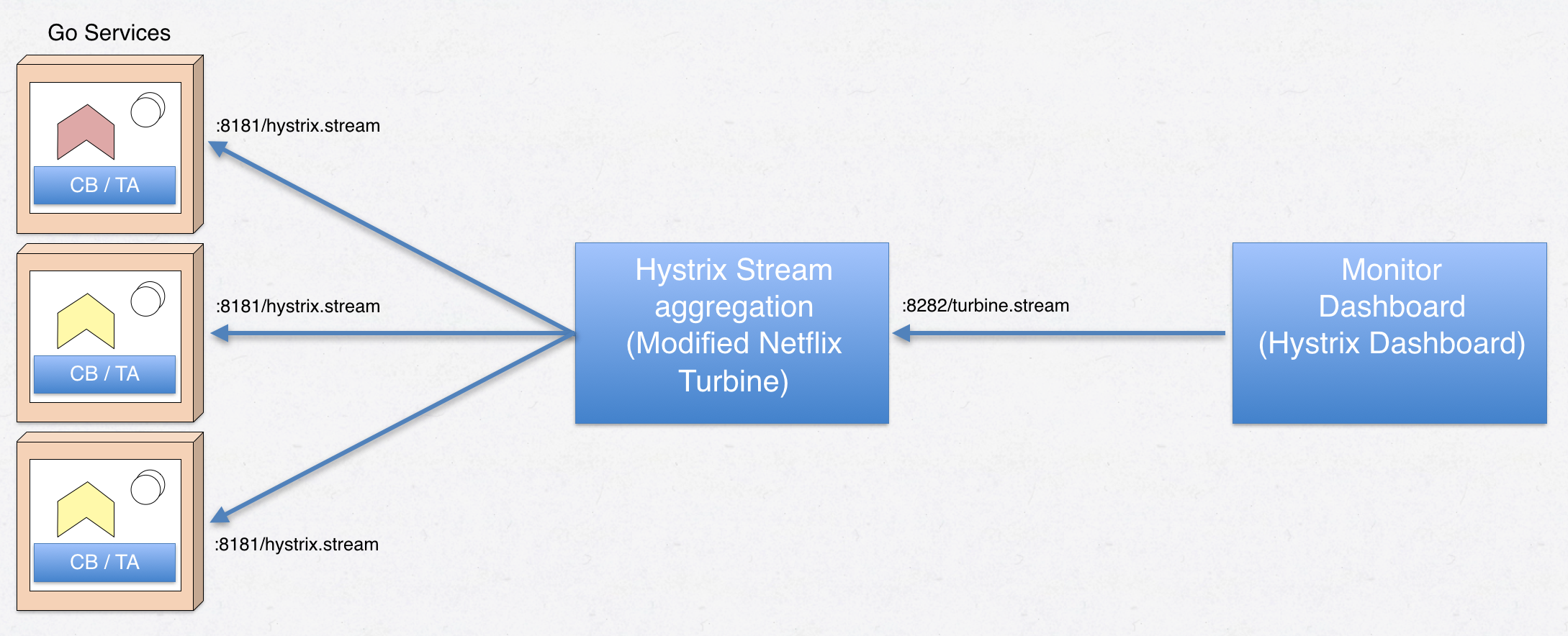

7. hystrix dashboard and netflix turbine

one neat thing about hystrix is that there’s a companion web application called hystrix dashboard that can provide a graphical representation of what’s currently going on in the circuit breakers inside your microservices.

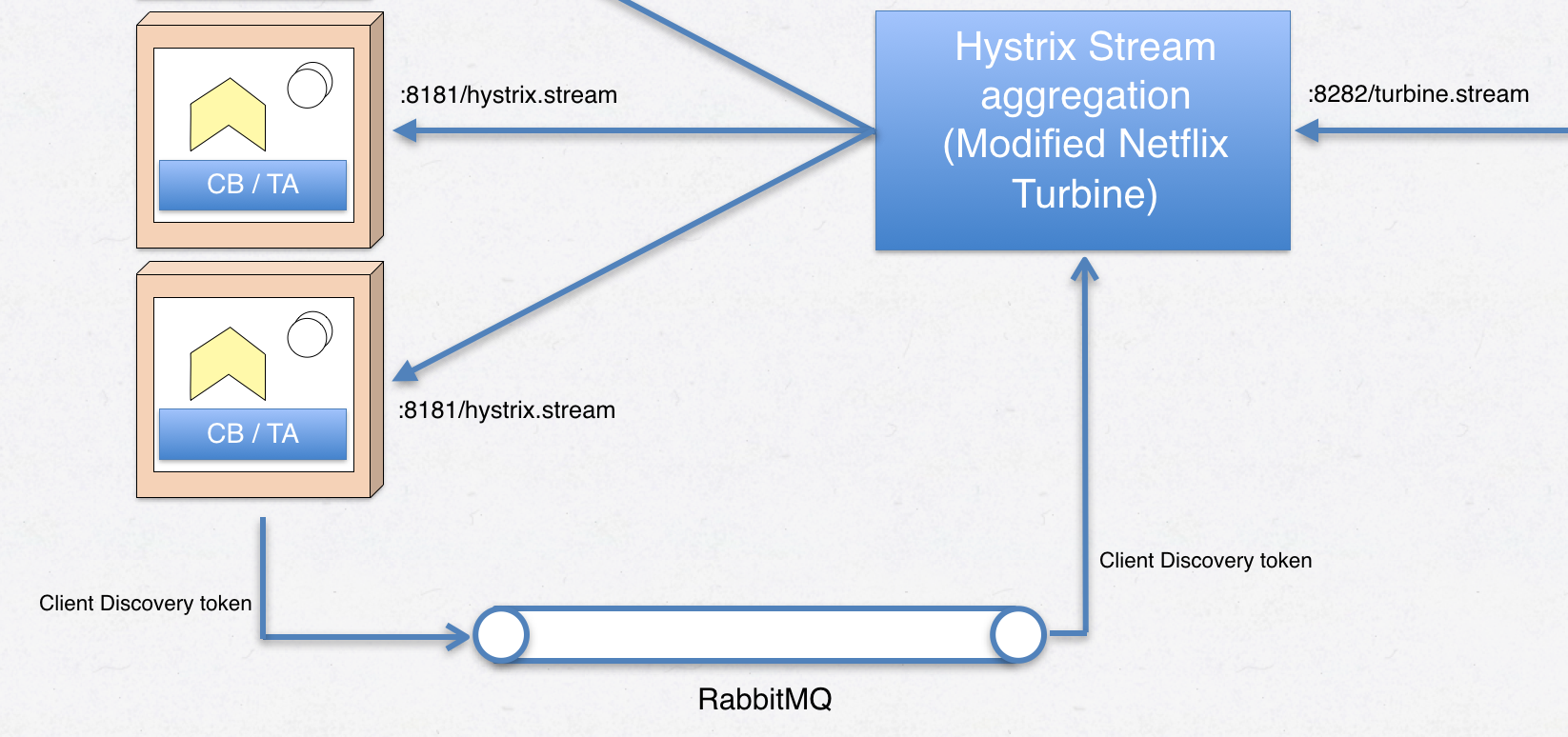

it works by producing http streams of the state and statistics of each configured circuit breaker updated once per second. the hystrix dashboard can however only read one such stream at a time and therefore netflix turbine exists - a piece of software that collects the streams of all circuit breakers in your landscape and aggregates those into one data stream the dashboard can consume:

Figure 7 - Service -> Turbine -> Hystrix Dashboard relationship.


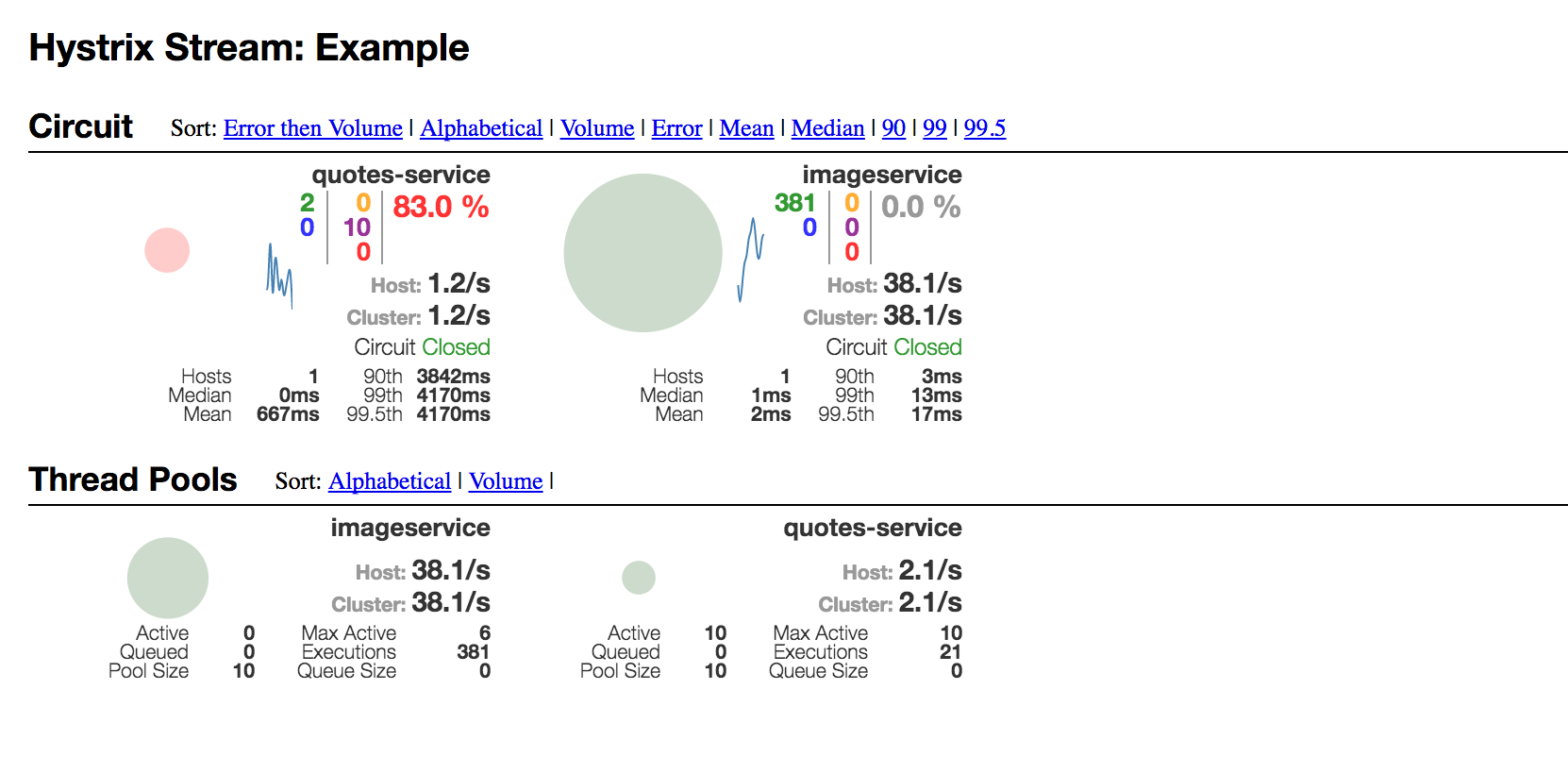

In figure 7, note that Hystrix Dashboard requests /turbine.stream from the Turbine server, and Turbine in turn requests /hystrix.stream from a number of microservices. With Turbine collecting circuit breaker metrics from our accountservice, the dashboard output may look like this:

Figure 8 - Hystrix Dashboard.


The GUI of Hystrix Dashboard is definitely not the easiest to grasp at first. Above, we see the two circuit breakers inside accountservice and their state in the middle of one of the load-test runs above. For each circuit breaker, we see the breaker state, req/s, average latencies, number of connected hosts per breaker name and error percentages, among other things. There's also a thread pools section below. I'm not sure it works correctly when the statistics come from the go-hystrix library rather than a Hystrix-enabled Spring Boot application. After all, we don't really have the concept of thread pools in Go when using standard goroutines.

Here's a short video of the "quotes-service" circuit breaker inside the accountservice when running part of the load test used above.

All in all, Turbine and Hystrix Dashboard provide rather nice monitoring that makes it quite easy to pinpoint unhealthy services or the source of unexpected latencies, in real time. Always make sure your inter-service calls are performed inside a circuit breaker.

8. Turbine and service discovery

There's one issue with using Netflix Turbine and Hystrix Dashboard with non-Spring microservices and/or container-orchestrator-based service discovery. Turbine needs to know where to find those /hystrix.stream endpoints, for example http://10.0.0.13:8181/hystrix.stream. In an ever-changing microservice landscape where services scale up and down, some mechanism must keep track of which URLs Turbine should connect to in order to consume Hystrix data streams.

By default, Turbine relies on Netflix Eureka, with microservices registering themselves with Eureka. Turbine can then internally query Eureka for the service IPs to connect to.

In our context, we're running on Docker Swarm mode and relying on the built-in service abstraction it provides. How do we get our service IPs into Turbine?

Luckily, Turbine supports plugging in custom discovery mechanisms. We could double up and use Eureka in addition to the orchestrator's service discovery mechanism, but I thought that was a pretty bad idea back in part 7. Apart from that, I see two options.

8.1.1 Discovery tokens

This solution uses the AMQP messaging bus (RabbitMQ) and a "discovery" channel. When our microservices that have circuit breakers start up, they figure out their own IP address and then send a message through the broker. Our custom Turbine plug-in reads that message and transforms it into something Turbine understands.

Figure 9 - Hystrix stream discovery using messaging.


The registration code that runs at accountservice startup:

```go
func publishDiscoveryToken(amqpClient messaging.IMessagingClient) {
	// Get hold of our IP address (reads it from /etc/hosts) and build a discovery token.
	ip, _ := util.ResolveIpFromHostsFile()
	token := DiscoveryToken{ // fields must be exported for json.Marshal to include them
		State:   "UP",
		Address: ip,
	}
	bytes, _ := json.Marshal(token)

	// Enter an eternal loop in a new goroutine that sends the UP token every
	// 30 seconds to the "discovery" channel.
	go func() {
		for {
			amqpClient.PublishOnQueue(bytes, "discovery")
			time.Sleep(time.Second * 30)
		}
	}()
}
```

The full source of my little circuitbreaker library that wraps go-hystrix and go-resilience can be found here.

8.1.2 Docker Remote API

Another option is to let a custom Turbine plugin use the Docker Remote API to look up containers and their IP addresses, which can then be transformed into something Turbine can use. This should work too, but it has drawbacks. It ties the plugin to a specific container orchestrator, and Turbine has to run on a Docker Swarm mode manager node.

8.2 The Turbine plugin

The source code and some basic docs for the Turbine plugin I've written can be found on my personal GitHub page. Since it's Java-based, I'm not going to spend precious blog space describing it in detail in this context.

You can also use a pre-built container image I've put on hub.docker.com. Just launch it as a Docker Swarm service.

8.3 Running with option 1

An executable jar file and a Dockerfile for Hystrix Dashboard exist in /goblog/support/monitor-dashboard. The customized Turbine is easiest to use via my container image linked above.

8.3.1 Building and running

I've updated my shell scripts to launch the custom Turbine and Hystrix Dashboard. In springcloud.sh:

```shell
# Hystrix Dashboard
docker build -t someprefix/hystrix support/monitor-dashboard
docker service rm hystrix
docker service create --constraint node.role==manager --replicas 1 -p 7979:7979 --name hystrix --network my_network --update-delay 10s --with-registry-auth --update-parallelism 1 someprefix/hystrix

# Turbine
docker service rm turbine
docker service create --constraint node.role==manager --replicas 1 -p 8282:8282 --name turbine --network my_network --update-delay 10s --with-registry-auth --update-parallelism 1 eriklupander/turbine
```

Also, the accountservice Dockerfile now exposes port 8181 so Hystrix streams can be read from within the cluster. You shouldn't map 8181 to a public port in your docker service create command.

8.3.2 Troubleshooting

I don't know if Turbine is slightly buggy or what the matter is, but I tend to have to do the following for Hystrix Dashboard to pick up a stream from Turbine:

- Sometimes I restart my Turbine service. That's easiest done using docker service scale turbine=0 followed by docker service scale turbine=1.

- Have some requests going through the circuit breakers. I'm unsure whether go-hystrix produces Hystrix streams when there has been no traffic, or no ongoing traffic, passing through.

- Make sure the URL entered into Hystrix Dashboard is correct. http://turbine:8282/turbine.stream?cluster=swarm works for me.

9. Summary

In part 11 of the blog series, we've looked at circuit breakers and resilience, and how those mechanisms can be used to build a more fault-tolerant and resilient system.

In the next part of the blog series, we'll introduce two new concepts: the Zuul edge server, and distributed tracing using Zipkin and OpenTracing.

Published at DZone with permission of Erik Lupander, DZone MVB. See the original article here.
