Using Global Cloud Load Balancer to Route User Requests to App Instances

The development journey of my geo-distributed messenger application continues: my next challenge is forwarding application requests to the instance closest to the user.

Ahoy, Mateys!

In my last two articles, I showed you how to deploy microservice instances across several cloud regions and how to set up a geo-distributed API layer.

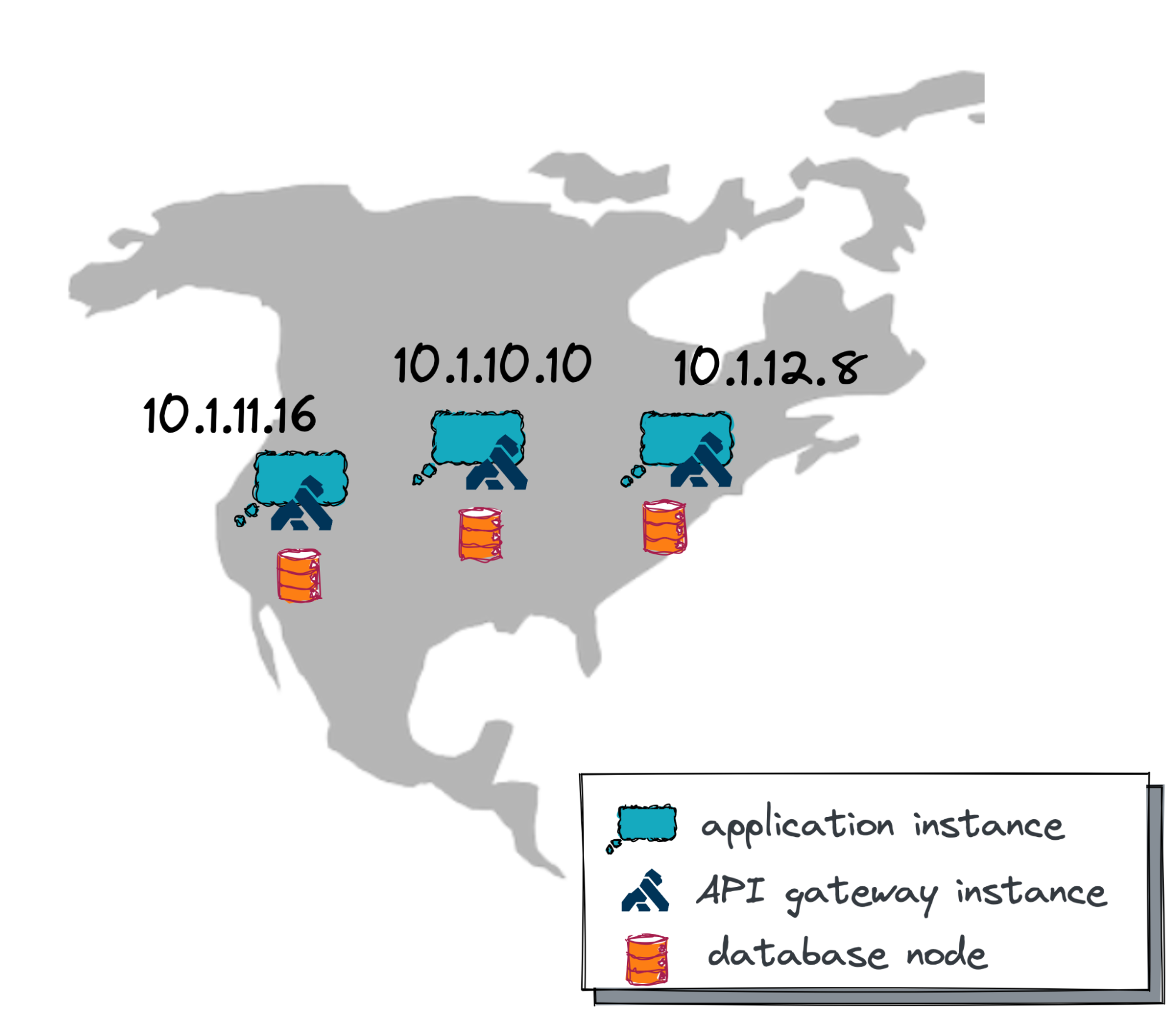

All seems good, except that I’ve now got several standalone application instances running across the US West, Central, and East regions, and each instance has its own private IP address.

My next challenge is figuring out how to forward application requests to the instances closest to the user.

I don’t want my Web UI and mobile apps to keep track of all the IPs and decide how and where to route user requests. This is way too complicated and becomes unmanageable. Instead, the Web UI and mobile apps need to connect to a single IP address, which lets other components of my geo-distributed solution decide where to route a particular user request.

What could those components be, matey?

You’ve guessed it: usually, a proxy and load balancer.

In this article, I’ll show you how to configure a global cloud load balancer that serves as both a proxy and a load balancer. This type of load balancer comes with a single IP address that can be accessed from any location on earth and can route a request to the nearest (active!) application instance.

So, if you’re still with me on this journey, then, as the pirates used to say, “Weigh Anchor and Hoist the Mizzen!” which means, “Pull up the anchor and get this ship sailing!”

Explaining Global Cloud Balancer Usage

Before diving into details and technicalities, let’s use a few illustrations to see the value of the load balancer.

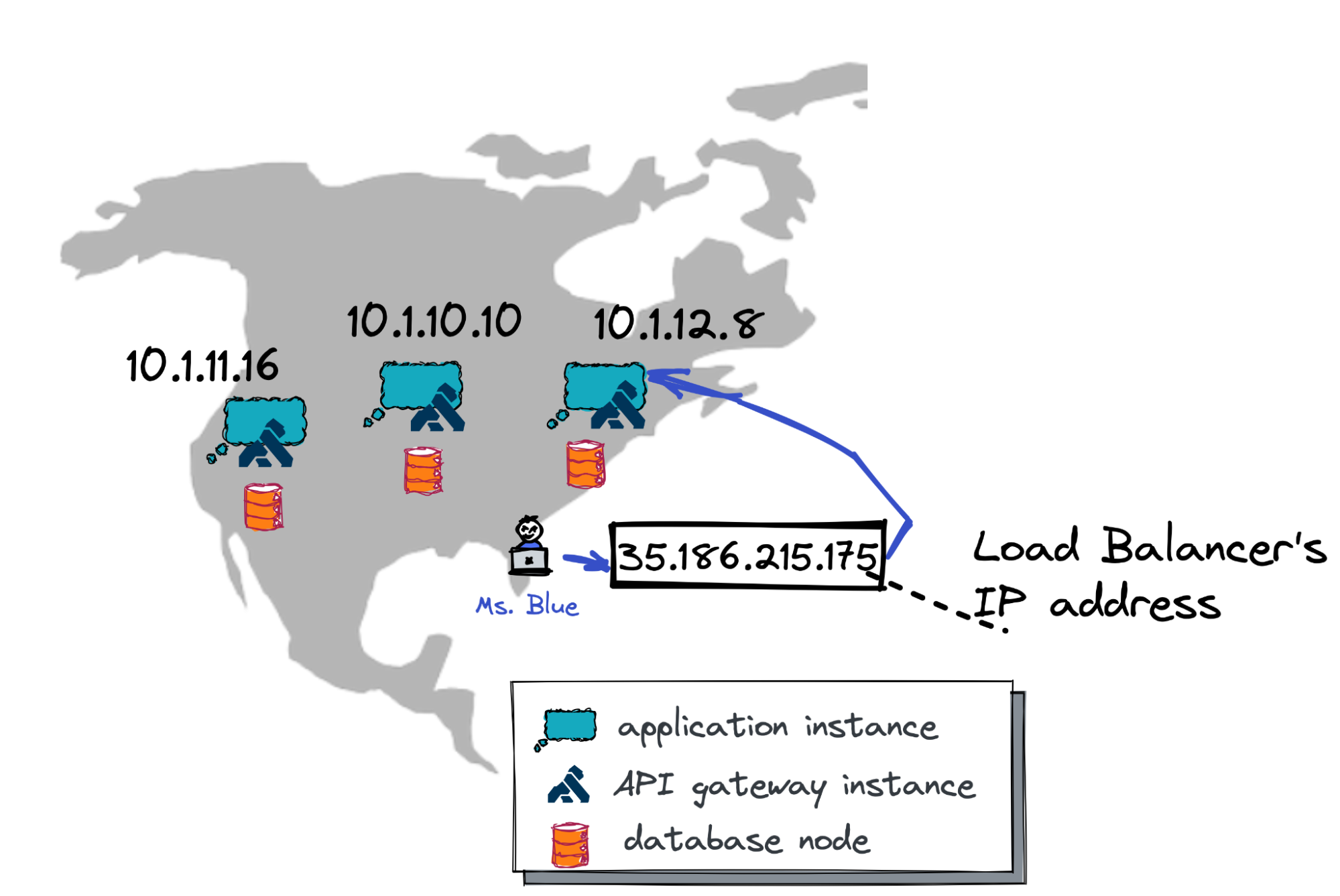

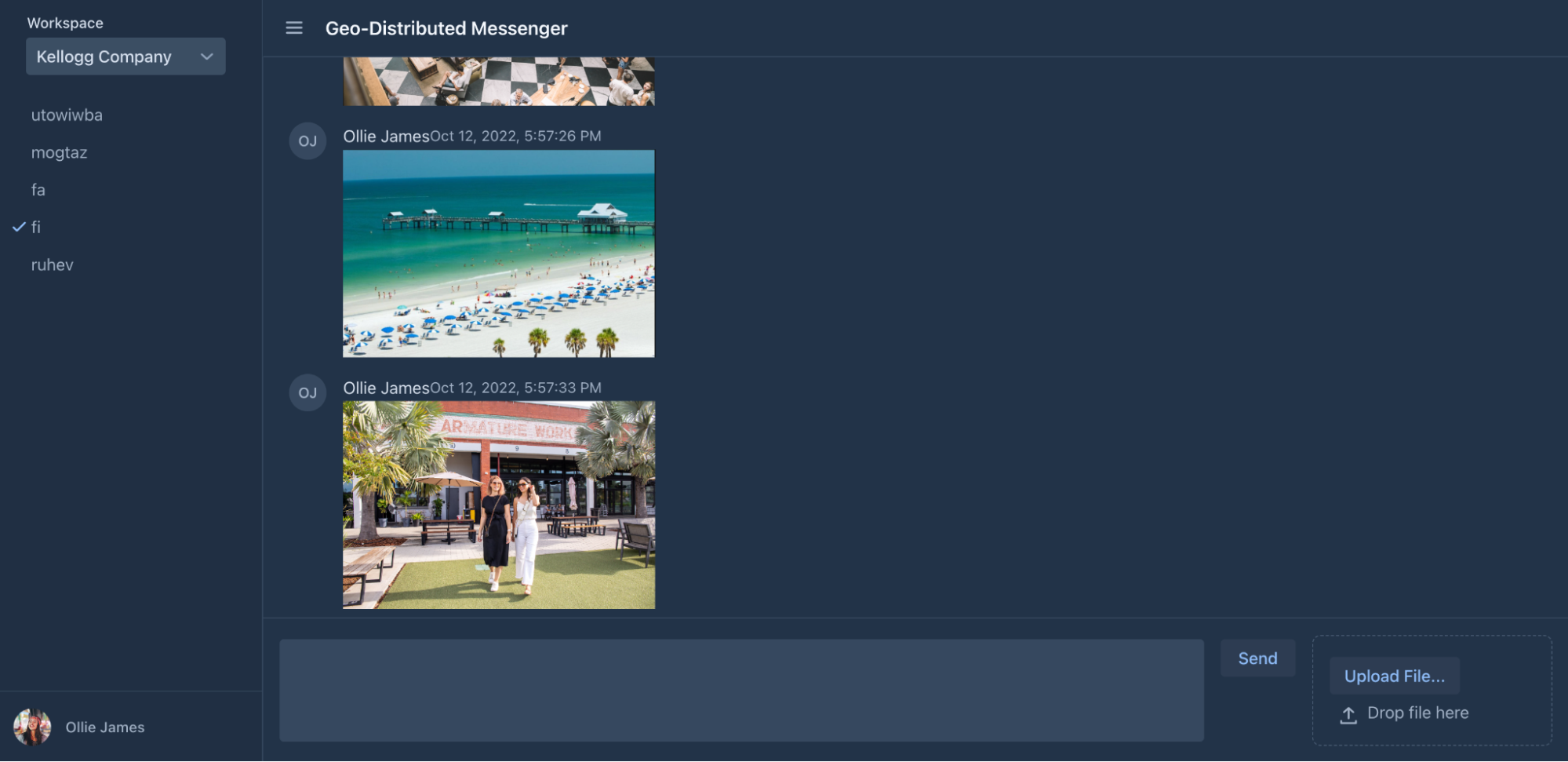

Suppose Ms. Blue, one of my application users, is vacationing in the Tampa Bay area of Florida. She promised not to open my corporate Slack-like messenger (the geo-distributed app I’m building) while on vacation.

She failed to keep her promise, but for a good reason. She couldn’t resist sharing a few pictures from the pristine beaches of Tampa Bay.

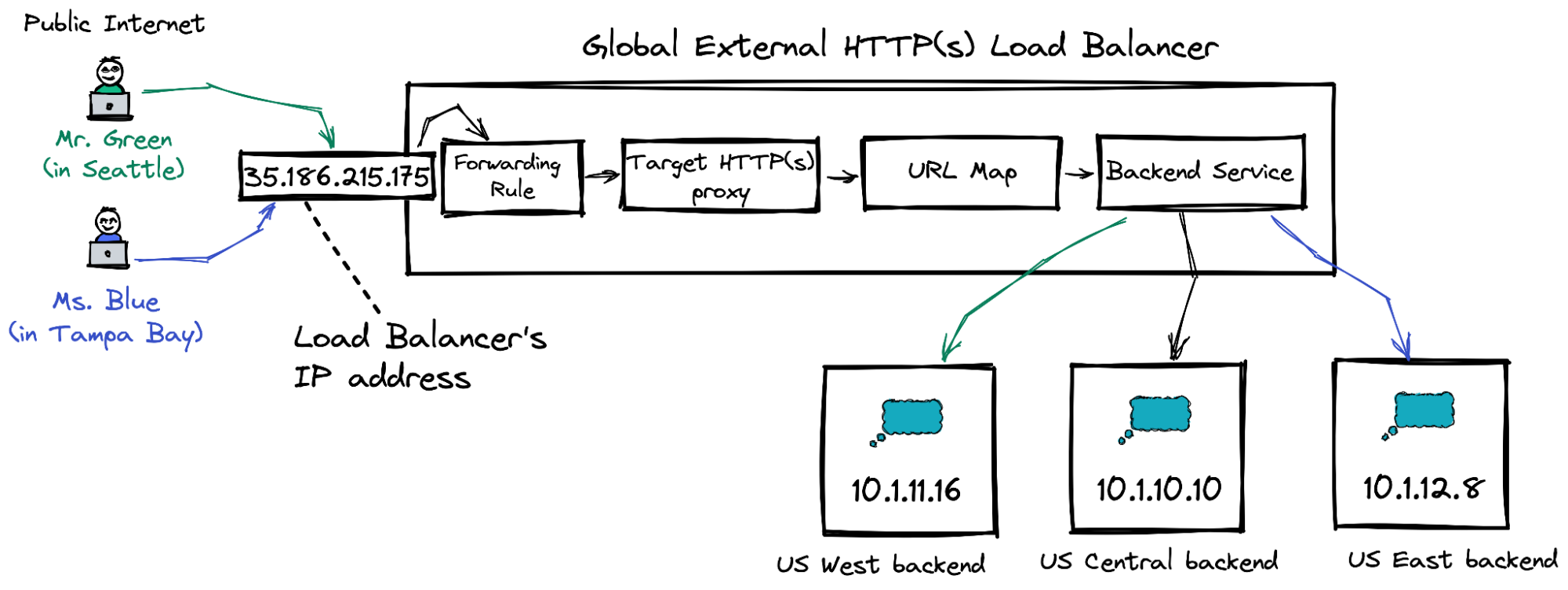

The diagram above shows what happens when Ms. Blue opens the app and sends a few gorgeous photos.

The app connects to the global load balancer's public IP address, 35.186.215.175. Next, the load balancer determines that the application instance with IP 10.1.12.8 is closest to Ms. Blue (both Ms. Blue and the instance are on the US East coast). Finally, the load balancer asks the East Coast instance to upload the pictures.

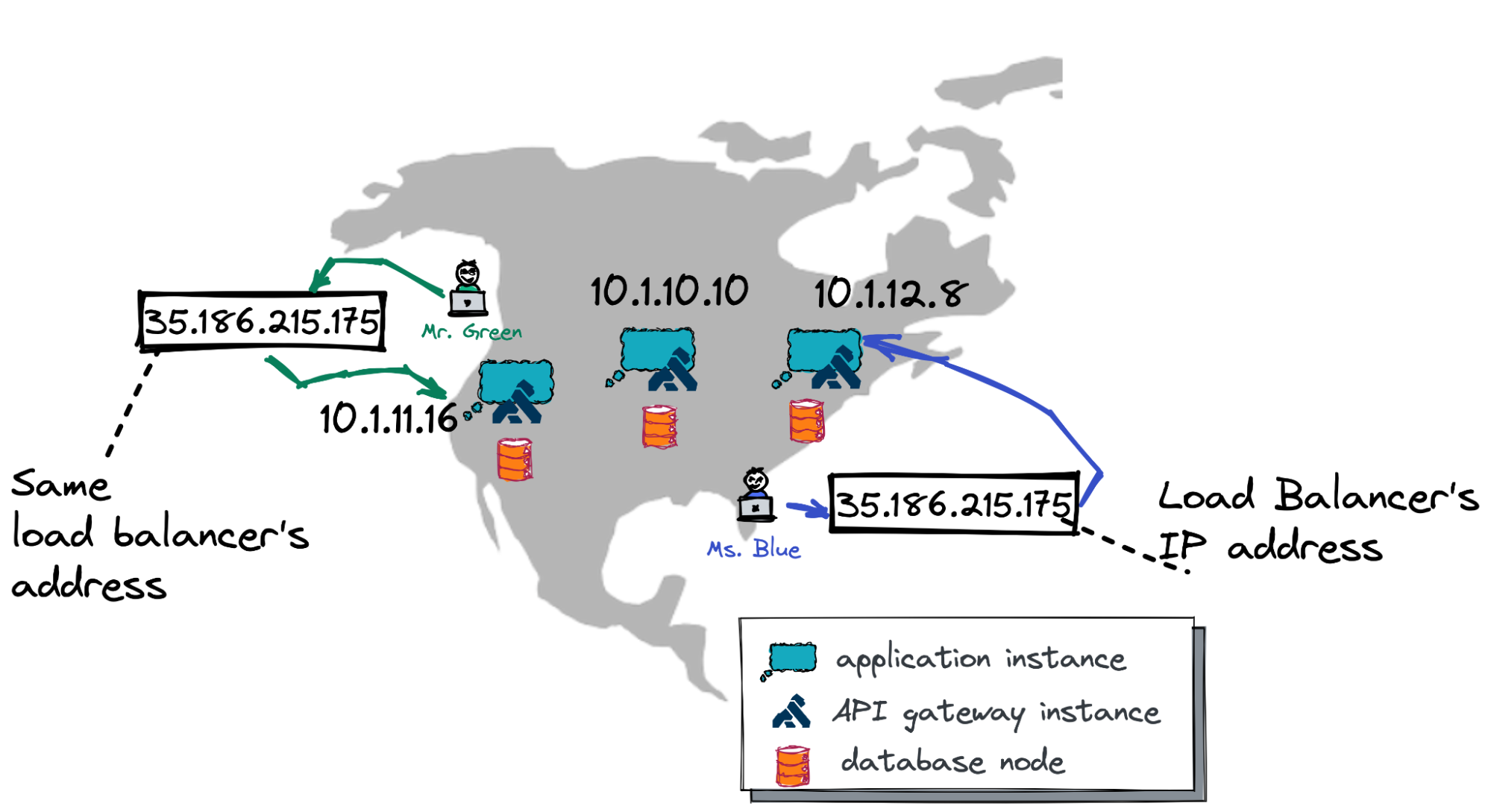

Next, Mr. Green, a colleague of Ms. Blue, working from his Seattle-based company office, receives a push notification from the messenger and can’t resist checking what’s been posted.

Mr. Green opens the mobile app, and the app connects to the same load balancer using the same public IP address, 35.186.215.175. This time, the load balancer forwards Mr. Green’s request to the West Coast application instance with IP address 10.1.11.16.

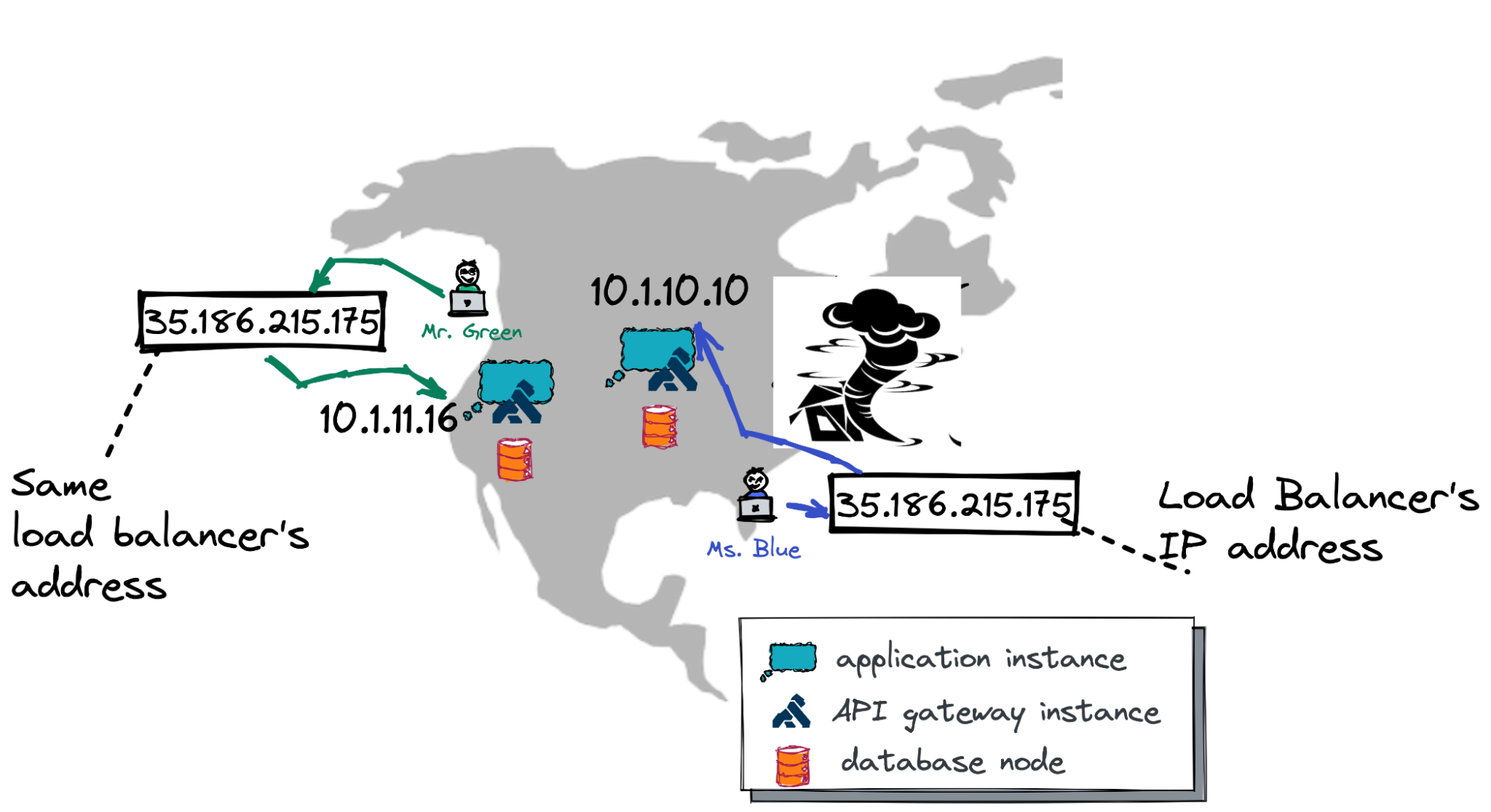

Also, the load balancer is instrumental in case of cloud outages. Let’s consider a final example.

A few days later, Ms. Blue decides to make her colleagues jealous again and opens the app to send another set of fabulous pictures. The application connects to the load balancer on the same public IP address (35.186.215.175) and…

…this time the load balancer forwards Ms. Blue’s request to the application instance in the US Central region with IP address 10.1.10.10.

This is because a storm in the Atlantic impacted the availability of the entire US East Coast cloud region. The US Central region is up and running as normal, and is now the closest for Ms. Blue. So, the request is routed there automatically.
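The three scenarios above boil down to one rule: pick the closest instance that is currently healthy. Here is a minimal Python sketch of that rule. The private IPs come from the examples above. The region labels, the distance figures, and the `pick_backend` function are hypothetical, made up for illustration, and say nothing about how Google Cloud measures proximity internally.

```python
# Hypothetical, rough distances (in km) from each user to each region.
# The numbers are illustrative assumptions, not measurements.
DISTANCE_KM = {
    "ms_blue_tampa": {"us-east": 1300, "us-central": 1900, "us-west": 3500},
    "mr_green_seattle": {"us-east": 3700, "us-central": 2400, "us-west": 1500},
}

# Private IPs of the application instances, as used in the examples above.
BACKENDS = {
    "us-west": "10.1.11.16",
    "us-central": "10.1.10.10",
    "us-east": "10.1.12.8",
}


def pick_backend(user, healthy_regions):
    """Return (region, ip) of the closest region that is currently healthy."""
    candidates = [r for r in DISTANCE_KM[user] if r in healthy_regions]
    if not candidates:
        raise RuntimeError("no healthy backend available")
    region = min(candidates, key=lambda r: DISTANCE_KM[user][r])
    return region, BACKENDS[region]


all_up = {"us-west", "us-central", "us-east"}
print(pick_backend("ms_blue_tampa", all_up))     # ('us-east', '10.1.12.8')
print(pick_backend("mr_green_seattle", all_up))  # ('us-west', '10.1.11.16')
# Simulate the storm: the US East region drops out of the healthy set.
print(pick_backend("ms_blue_tampa", all_up - {"us-east"}))  # ('us-central', '10.1.10.10')
```

Notice that the client code never changes between the three calls. Only the set of healthy regions does, which is exactly why the apps can stick to a single public IP address.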

Alright, matey, now let’s move forward and look into the architecture, or the main building blocks, of the global cloud load balancer.

Global Load Balancer Architecture

The global load balancer comprises several building blocks. Take a look at the picture below and review the components from left to right.

This picture represents the architecture of the load balancer in Google Cloud. The components might vary depending on your cloud environment, but the major cloud providers offer a comparable global load-balancing service.

So, moving from left to right in the picture: As you already know, users connect to the public IP address assigned to the load balancer (or DNS name that translates to this address). After that, the load balancer consults with existing forwarding rules to determine which target proxy needs to handle the request.

Once the target proxy is selected, the proxy checks its URL map to decide which backend services are responsible for the request.

Finally, the backend service delegates the request to one of its backends (actual application instances). In our case, during normal operations, requests from Mr. Green, based in Seattle, will be processed by the US West backend instance. The traffic from Ms. Blue will go to the backend in the US East region.
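To make the chain of lookups easier to follow, here is a small Python sketch that models each component as a dictionary. The resource names are the ones used in the gcloud commands later in this article, and the public IP is the one from the earlier examples. The `resolve` function and the region keys are hypothetical, and the nearest region is simply passed in by the caller, which glosses over how the real load balancer works it out.

```python
# Each dictionary stands in for one load balancer component.
FORWARDING_RULES = {
    # (public IP, port) -> target proxy
    ("35.186.215.175", 80): "load-balancer-http-frontend",
}
TARGET_PROXIES = {
    # target proxy -> URL map
    "load-balancer-http-frontend": "load-balancer-url-map",
}
URL_MAPS = {
    # URL map -> default backend service (no path-specific rules here)
    "load-balancer-url-map": {"default": "load-balancer-backend-service"},
}
BACKEND_SERVICES = {
    # backend service -> instance groups, keyed by a hypothetical region label
    "load-balancer-backend-service": {
        "us-west": "ig-us-west",
        "us-central": "ig-us-central",
        "us-east": "ig-us-east",
    },
}


def resolve(ip, port, nearest_region):
    """Walk the chain from forwarding rule to backend and return every hop."""
    proxy = FORWARDING_RULES[(ip, port)]
    url_map = TARGET_PROXIES[proxy]
    service = URL_MAPS[url_map]["default"]
    backend = BACKEND_SERVICES[service][nearest_region]
    return [proxy, url_map, service, backend]


# Mr. Green in Seattle ends up on the US West instance group.
print(resolve("35.186.215.175", 80, "us-west"))
```

Each hop is a plain lookup, so swapping a backend or adding a region only touches the last dictionary while the public entry point stays the same.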

If you wish to learn more about the global load balancer’s architecture, check out this documentation for an extensive overview of all its components.

Global Load Balancer Deployment

At last, matey, let’s go ahead and deploy an instance of the global external load balancer in Google Cloud.

Assuming that application instances are already running across US West, Central, and East, these are our next steps (explore how to deploy the instances in Google Cloud):

1. Reserve a static public IP address for the load balancer:

    gcloud compute addresses create load-balancer-public-ip \
        --ip-version=IPV4 \
        --network-tier=PREMIUM \
        --global

2. Configure a health check for a backend service:

    gcloud compute health-checks create http load-balancer-http-basic-check \
        --check-interval=20s --timeout=5s \
        --healthy-threshold=2 --unhealthy-threshold=2 \
        --request-path=/login \
        --port 80

3. Create the backend service for the HTTP traffic and provide the just-created health check to ensure the application instances are up and running:

    gcloud compute backend-services create load-balancer-backend-service \
        --load-balancing-scheme=EXTERNAL_MANAGED \
        --protocol=HTTP \
        --port-name=http \
        --health-checks=load-balancer-http-basic-check \
        --global

4. Provide the backend service with backends (application instances) across several cloud regions:

    gcloud compute backend-services add-backend load-balancer-backend-service \
        --balancing-mode=UTILIZATION \
        --max-utilization=0.8 \
        --capacity-scaler=1 \
        --instance-group=ig-us-central \
        --instance-group-zone=us-central1-b \
        --global

    gcloud compute backend-services add-backend load-balancer-backend-service \
        --balancing-mode=UTILIZATION \
        --max-utilization=0.8 \
        --capacity-scaler=1 \
        --instance-group=ig-us-east \
        --instance-group-zone=us-east4-b \
        --global

    gcloud compute backend-services add-backend load-balancer-backend-service \
        --balancing-mode=UTILIZATION \
        --max-utilization=0.8 \
        --capacity-scaler=1 \
        --instance-group=ig-us-west \
        --instance-group-zone=us-west2-b \
        --global

5. Finally, configure the default URL map to route all requests to the created backend service:

    gcloud compute url-maps create load-balancer-url-map \
        --default-service load-balancer-backend-service

After the backend service is ready, go ahead and configure the frontend part of the load balancer, which is the forwarding rule and target proxy.

1. Create an HTTP proxy providing the URL map:

    gcloud compute target-http-proxies create load-balancer-http-frontend \
        --url-map load-balancer-url-map \
        --global

2. Define a forwarding rule to direct the traffic from the public Internet to the just-created proxy:

    gcloud compute forwarding-rules create load-balancer-http-frontend-forwarding-rule \
        --load-balancing-scheme=EXTERNAL_MANAGED \
        --network-tier=PREMIUM \
        --address=load-balancer-public-ip \
        --global \
        --target-http-proxy=load-balancer-http-frontend \
        --ports=80

That’s it! The global external load balancer is fully configured. It might just take a few minutes for your configuration to propagate worldwide.
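One setting that is easy to gloss over is the pair of thresholds on the health check configured above: `--healthy-threshold=2` and `--unhealthy-threshold=2`. The Python sketch below models only that counting logic, so you can see why a single failed probe does not pull a backend out of rotation. The `BackendHealth` class, the probe sequence, and the assumption that a backend starts out healthy are all hypothetical. Probe timing (the 20-second interval and 5-second timeout) is not modeled.

```python
class BackendHealth:
    """Tracks one backend's state from a stream of probe results."""

    def __init__(self, healthy_threshold=2, unhealthy_threshold=2, healthy=True):
        self.healthy_threshold = healthy_threshold
        self.unhealthy_threshold = unhealthy_threshold
        self.healthy = healthy  # assumed starting state
        self._streak = 0  # consecutive probes that disagree with the current state

    def record(self, probe_ok):
        """Feed one probe result and return the (possibly updated) state."""
        if probe_ok == self.healthy:
            # The probe agrees with the current state, so reset the streak.
            self._streak = 0
            return self.healthy
        self._streak += 1
        needed = self.healthy_threshold if probe_ok else self.unhealthy_threshold
        if self._streak >= needed:
            # Enough consecutive disagreeing probes: flip the state.
            self.healthy = probe_ok
            self._streak = 0
        return self.healthy


us_east = BackendHealth()
probes = [True, False, True, False, False, True, True]
states = [us_east.record(ok) for ok in probes]
print(states)
```

In this run, the lone failure in second position is tolerated. The backend is marked unhealthy only after two failures in a row, and it takes two successful probes in a row to bring it back.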

Testing Load Balancer

Now, it’s testing time!

I’ll play the role of Ms. Blue, who wants to share gorgeous photos from Tampa Bay. A colleague of mine who is based in Seattle will be Mr. Green.

The manual testing is straightforward. I open the messenger in my browser using the load balancer’s public IP address and upload a few pictures:

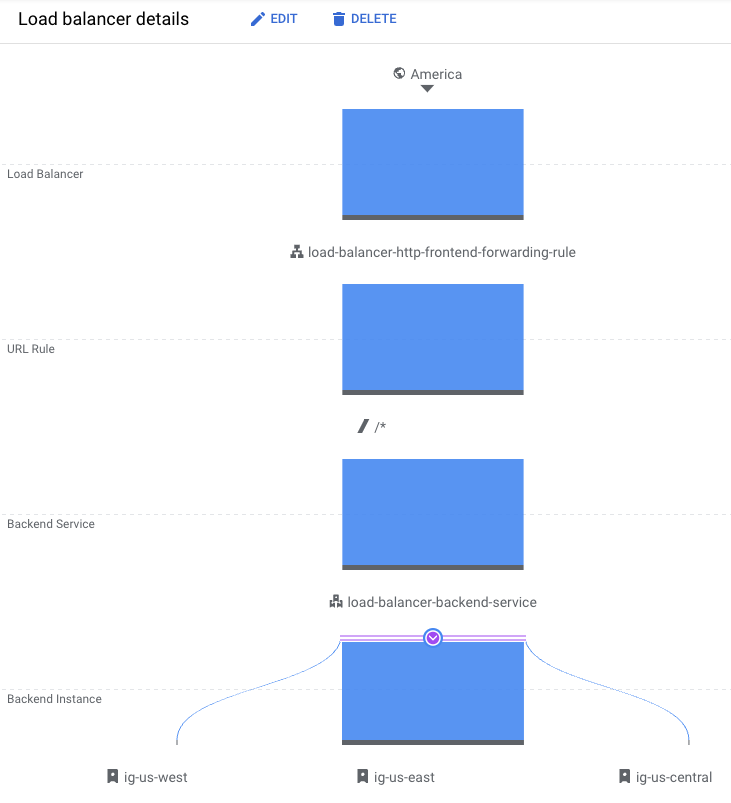

How do I ensure those pictures were uploaded through an instance in the US East?

Well, I open the monitoring tab for the load balancer and confirm that all my requests are delegated to the ig-us-east instance group:

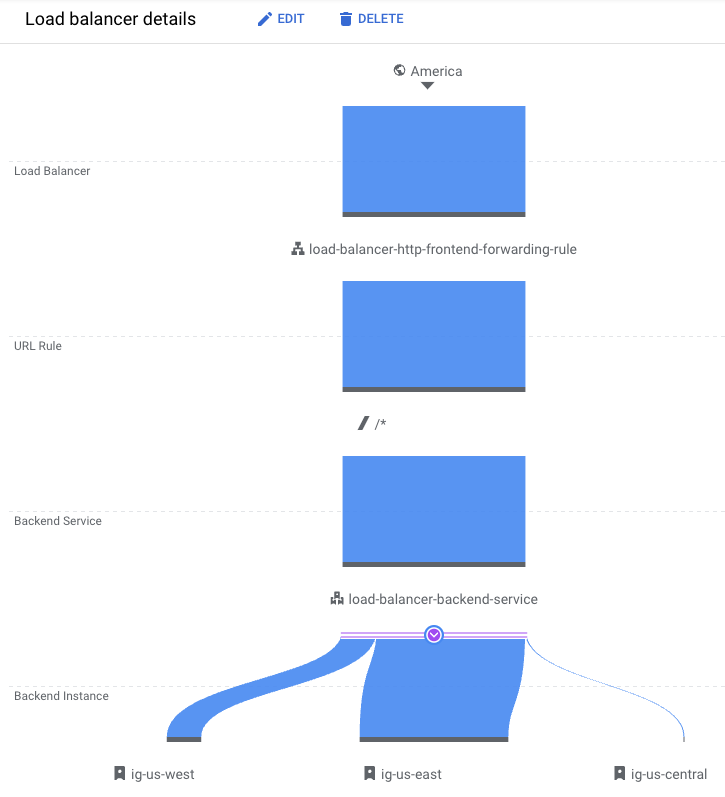

After that, my Seattle colleague opens the app to check the pictures. In a few moments, I can see that the load balancer directs his traffic to ig-us-west!

Job done!

The global load balancer is fully configured and functions globally. This means my geo-distributed messenger can seamlessly process user requests with high performance across the globe and withstand various cloud outages.

What’s on the Horizon?

Alright, matey!

My geo-distributed app now functions across multiple cloud regions and availability zones. But the app, API layer, and database still run within the boundaries of the USA.

My next move will be to scale the messenger to other countries and continents and to experiment with several deployment options for YugabyteDB, my geo-distributed database.

Follow me to be notified as soon as the next update is published! And check out the previous articles in my geo-distributed messenger application development journey.
