Using Approximate Nearest Neighbor (ANN) Search With SingleStoreDB

In this article, we'll evaluate ANN Index Search with the new VECTOR data type using the Fashion MNIST dataset from Zalando.

Join the DZone community and get the full member experience.

Join For FreeThe new SingleStoreDB release v8.5 provides several new vector features. In this article, we'll evaluate ANN Index Search with the new VECTOR data type using the Fashion MNIST dataset from Zalando.

The notebook file and SQL code are available on GitHub.

Create a SingleStoreDB Cloud Account

A previous article showed the steps to create a free SingleStoreDB Cloud account. We'll use the following settings:

- Workspace Group Name: ANN Demo Group

- Cloud Provider: AWS

- Region: US East 1 (N. Virginia)

- Workspace Name: ann-demo

- Size: S-00

Create a Database and Tables

In our SingleStore Cloud account, let's use the SQL Editor to create a new database. Call this fmnist_db, as follows:

CREATE DATABASE IF NOT EXISTS fmnist_db;We'll also create several tables using the BLOB data type and new VECTOR data type, as follows:

USE fmnist_db;

CREATE TABLE IF NOT EXISTS train_data_blob (

idx INT(10) UNSIGNED NOT NULL,

label VARCHAR(20),

vector BLOB,

KEY(idx)

);

CREATE TABLE IF NOT EXISTS test_data_blob (

idx INT(10) UNSIGNED NOT NULL,

label VARCHAR(20),

vector BLOB,

KEY(idx)

);

CREATE TABLE IF NOT EXISTS train_data_vec (

idx INT(10) UNSIGNED NOT NULL,

label VARCHAR(20),

vector VECTOR(784) NOT NULL,

KEY(idx)

);

CREATE TABLE IF NOT EXISTS test_data_vec (

idx INT(10) UNSIGNED NOT NULL,

label VARCHAR(20),

vector VECTOR(784) NOT NULL,

KEY(idx)

);We have train and test tables using both formats. We'll load data into the two different sets of tables.

New Notebook

We'll follow the instructions to create a new notebook as described in a previous article. We'll call the notebook ann_demo.

Fill Out the Notebook

First, we'll install some libraries:

!pip install tensorflow --quiet

!pip install matplotlib --quietNext, let's set up our environment:

import os

os.environ["TF_CPP_MIN_LOG_LEVEL"] = "3"

from tensorflow import keras

from keras.datasets import fashion_mnist

import matplotlib.pyplot as plt

import numpy as npLoad the Dataset

We'll use the Fashion MNIST dataset from Zalando.

First, we'll get the train and test data:

(train_images, train_labels), (test_images, test_labels) = fashion_mnist.load_data()Let's take a look at the shape of the data:

print("train_images: " + str(train_images.shape))

print("train_labels: " + str(train_labels.shape))

print("test_images: " + str(test_images.shape))

print("test_labels: " + str(test_labels.shape))The result should be as follows:

train_images: (60000, 28, 28)

train_labels: (60000,)

test_images: (10000, 28, 28)

test_labels: (10000,)We have 60,000 images for training and 10,000 images for testing. The images are greyscaled, 28 pixels by 28 pixels, and we can take a look at one of these:

print(train_images[0])The result should be (28 columns by 28 rows):

[[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0 13 73 0 0 1 4 0 0 0 0 1 1 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 3 0 36 136 127 62 54 0 0 0 1 3 4 0 0 3]

[ 0 0 0 0 0 0 0 0 0 0 0 0 6 0 102 204 176 134 144 123 23 0 0 0 0 12 10 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 155 236 207 178 107 156 161 109 64 23 77 130 72 15]

[ 0 0 0 0 0 0 0 0 0 0 0 1 0 69 207 223 218 216 216 163 127 121 122 146 141 88 172 66]

[ 0 0 0 0 0 0 0 0 0 1 1 1 0 200 232 232 233 229 223 223 215 213 164 127 123 196 229 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 183 225 216 223 228 235 227 224 222 224 221 223 245 173 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 193 228 218 213 198 180 212 210 211 213 223 220 243 202 0]

[ 0 0 0 0 0 0 0 0 0 1 3 0 12 219 220 212 218 192 169 227 208 218 224 212 226 197 209 52]

[ 0 0 0 0 0 0 0 0 0 0 6 0 99 244 222 220 218 203 198 221 215 213 222 220 245 119 167 56]

[ 0 0 0 0 0 0 0 0 0 4 0 0 55 236 228 230 228 240 232 213 218 223 234 217 217 209 92 0]

[ 0 0 1 4 6 7 2 0 0 0 0 0 237 226 217 223 222 219 222 221 216 223 229 215 218 255 77 0]

[ 0 3 0 0 0 0 0 0 0 62 145 204 228 207 213 221 218 208 211 218 224 223 219 215 224 244 159 0]

[ 0 0 0 0 18 44 82 107 189 228 220 222 217 226 200 205 211 230 224 234 176 188 250 248 233 238 215 0]

[ 0 57 187 208 224 221 224 208 204 214 208 209 200 159 245 193 206 223 255 255 221 234 221 211 220 232 246 0]

[ 3 202 228 224 221 211 211 214 205 205 205 220 240 80 150 255 229 221 188 154 191 210 204 209 222 228 225 0]

[ 98 233 198 210 222 229 229 234 249 220 194 215 217 241 65 73 106 117 168 219 221 215 217 223 223 224 229 29]

[ 75 204 212 204 193 205 211 225 216 185 197 206 198 213 240 195 227 245 239 223 218 212 209 222 220 221 230 67]

[ 48 203 183 194 213 197 185 190 194 192 202 214 219 221 220 236 225 216 199 206 186 181 177 172 181 205 206 115]

[ 0 122 219 193 179 171 183 196 204 210 213 207 211 210 200 196 194 191 195 191 198 192 176 156 167 177 210 92]

[ 0 0 74 189 212 191 175 172 175 181 185 188 189 188 193 198 204 209 210 210 211 188 188 194 192 216 170 0]

[ 2 0 0 0 66 200 222 237 239 242 246 243 244 221 220 193 191 179 182 182 181 176 166 168 99 58 0 0]

[ 0 0 0 0 0 0 0 40 61 44 72 41 35 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]

[ 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0]]We can check the label associated with this image:

print(train_labels[0])The result should be:

9This value represents an Ankle Boot.

We can do a quick plot as follows:

classes = [

"t_shirt_top",

"trouser",

"pullover",

"dress",

"coat",

"sandal",

"shirt",

"sneaker",

"bag",

"ankle_boot"

]

num_classes = len(classes)

for i in range(num_classes):

ax = plt.subplot(2, 5, i + 1)

plt.imshow(

np.column_stack(train_images[i].reshape(1, 28, 28)),

cmap = plt.cm.binary

)

plt.axis("off")

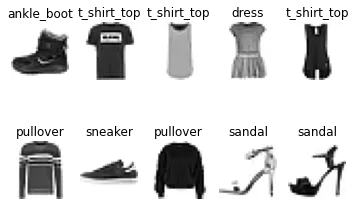

ax.set_title(classes[train_labels[i]])The result is shown in Figure 1.

Figure 1. Fashion MNIST.

Prepare Pandas Dataframe

We need to reshape our dataset so that we can store it correctly later:

train_images = train_images.reshape((train_images.shape[0], -1))

test_images = test_images.reshape((test_images.shape[0], -1))And we can check the shapes:

print("train_images: " + str(train_images.shape))

print("test_images: " + str(test_images.shape))The result should be:

train_images: (60000, 784)

test_images: (10000, 784)So, we have flattened the image structure.

Now we'll create two Pandas Dataframes, as follows:

import pandas as pd

train_data_df = pd.DataFrame([

(i,

image.astype(int).tolist(),

classes[int(label)],

) for i, (image, label) in enumerate(zip(train_images, train_labels))

], columns = ["idx", "img", "label"])

test_data_df = pd.DataFrame([

(i,

image.astype(int).tolist(),

classes[int(label)],

) for i, (image, label) in enumerate(zip(test_images, test_labels))

], columns = ["idx", "img", "label"])We need to convert the values in the img column to a suitable format for SingleStoreDB. We can do this using the following code:

import struct

def data_to_binary(data: list[float]):

format_string = "f" * len(data)

return struct.pack(format_string, *data)

train_data_df["vector"] = train_data_df["img"].apply(data_to_binary)

test_data_df["vector"] = test_data_df["img"].apply(data_to_binary)We can now drop the img column:

train_data_df.drop("img", axis = 1, inplace = True)

test_data_df.drop("img", axis = 1, inplace = True)Write Pandas Dataframes to SingleStoreDB

We are now ready to write the Dataframes train_data_df and test_data_df to the tables train_data_blob and test_data_blob, respectively.

First, we'll set up the connection to SingleStoreDB:

from sqlalchemy import *

db_connection = create_engine(connection_url)Finally, we are ready to write the Dataframes to SingleStoreDB. First, train_data_df:

train_data_df.to_sql(

"train_data_blob",

con = db_connection,

if_exists = "append",

index = False,

chunksize = 1000

)And then test_data_df:

test_data_df.to_sql(

"test_data_blob",

con = db_connection,

if_exists = "append",

index = False,

chunksize = 1000

)Example Queries

Now that we have built our system, we can run some queries using the SQL Editor.

Using the BLOB Type

First, let's create two variables:

SET @qv_train_blob = (

SELECT vector

FROM train_data_blob

WHERE idx = 30000

);

SET @qv_test_blob = (

SELECT vector

FROM test_data_blob

WHERE idx = 500

);In the first case, we are selecting an image vector 50% through the train data. In the second case, we are selecting an image vector 5% through the test data.

Now, let's use EUCLIDEAN_DISTANCE with the train data:

SELECT label, EUCLIDEAN_DISTANCE(vector, @qv_train_blob) AS score

FROM train_data_blob

ORDER BY score

LIMIT 5;The result should be:

+-------+-------------------+

| label | score |

+-------+-------------------+

| dress | 0 |

| dress | 570.5322076798119 |

| dress | 612.5422434412177 |

| dress | 653.6390441214478 |

| dress | 665.1052548281363 |

+-------+-------------------+Next, let's try the same query but use the test data:

SELECT label, EUCLIDEAN_DISTANCE(vector, @qv_test_blob) AS score

FROM train_data_blob

ORDER BY score

LIMIT 5;The result should be:

+----------+--------------------+

| label | score |

+----------+--------------------+

| pullover | 1211.59399140141 |

| pullover | 1295.9332544541019 |

| pullover | 1316.508640305866 |

| pullover | 1320.24278070361 |

| pullover | 1346.3539653449236 |

+----------+--------------------+Using the VECTOR Type

First, we'll copy the data from the tables using the BLOB type to the tables using the VECTOR type, as follows:

INSERT INTO train_data_vec (idx, label, vector) (

SELECT idx, label, vector

FROM train_data_blob

);

INSERT INTO test_data_vec (idx, label, vector) (

SELECT idx, label, vector

FROM test_data_blob

);Next, we'll define an index as follows:

ALTER TABLE train_data_vec ADD VECTOR INDEX (vector)

INDEX_OPTIONS '{

"index_type":"IVF_FLAT",

"nlist":1000,

"metric_type":"EUCLIDEAN_DISTANCE"

}';Many vector indexing options are available. Please see the Vector Indexing documentation.

First, let's create two variables:

SET @qv_train_vec = (

SELECT vector

FROM train_data_vec

WHERE idx = 30000

);

SET @qv_test_vec = (

SELECT vector

FROM test_data_vec

WHERE idx = 500

);In the first case, we are selecting an image vector 50% through the train data. In the second case, we are selecting an image vector of 5% through the test data.

Now, let's use the Infix Operator <-> with the train data:

SELECT label, vector <-> @qv_train_vec AS score

FROM train_data_vec

ORDER BY score

LIMIT 5;The result should be:

+-------+-------------------+

| label | score |

+-------+-------------------+

| dress | 0 |

| dress | 570.5322076798119 |

| dress | 612.5422434412177 |

| dress | 653.6390441214478 |

| dress | 665.1052548281363 |

+-------+-------------------+Next, let's try the same query but use the test data:

SELECT label, vector <-> @qv_test_vec AS score

FROM train_data_vec

ORDER BY score

LIMIT 5;The result should be:

+----------+--------------------+

| label | score |

+----------+--------------------+

| pullover | 1211.59399140141 |

| pullover | 1295.9332544541019 |

| pullover | 1316.508640305866 |

| pullover | 1320.24278070361 |

| pullover | 1346.3539653449236 |

+----------+--------------------+Comparing the results, we can see that both approaches work well. However, the new ANN Index Search provides many benefits, as discussed in the Vector Indexing documentation.

Summary

In this short article, we've seen how to create an ANN Index using the new VECTOR data type with a well-known dataset. We've seen that the existing approach to storing vectors in SingleStoreDB using the BLOB type works well, but using the new vector features offers greater flexibility and choices.

Published at DZone with permission of Akmal Chaudhri. See the original article here.

Opinions expressed by DZone contributors are their own.

Comments