How to Train a Joint Entities and Relation Extraction Classifier Using BERT Transformer With spaCy 3

Joint NER and relation extraction will open up a new way of information retrieval through knowledge graphs, where you can navigate across different nodes to discover hidden relationships.

Introduction

One of the most useful applications of NLP technology is information extraction from unstructured texts (contracts, financial documents, healthcare records, etc.), which enables automated data querying to derive new insights. Traditionally, named entity recognition (NER) has been widely used to identify entities inside a text and store the data for advanced querying and filtering. However, if we want to understand unstructured text semantically, NER alone is not enough, since we don't know how the entities are related to each other. Performing NER and relation extraction jointly opens up a whole new way of retrieving information through knowledge graphs, where you can navigate across different nodes to discover hidden relationships.

Building on my previous article where we fine-tuned a BERT model for NER using spaCy 3, we will now add relation extraction to the pipeline using the new Thinc library from spaCy. We train the relation extraction model following the steps outlined in spaCy's documentation. We will compare the performance of the relation classifier using transformers and tok2vec algorithms. Finally, we will test the model on a job description found online.

Relation Classification

At its core, the relation extraction model is a classifier that predicts a relation r for a given pair of entities {e1, e2}. In the case of transformers, this classifier is added on top of the output hidden states. For more information about relation extraction, please read this excellent article outlining the theory of fine-tuning a transformer model for relation classification.
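To make the idea concrete, here is a minimal, framework-free sketch of such a classifier. It assumes (as an illustration, not as the exact spaCy implementation) that each entity is represented by the mean of its token vectors, that the two pooled vectors are concatenated, and that a linear layer followed by a sigmoid produces one score per relation label. The function names, weights, and toy vectors are all hypothetical.

```python
import math
from typing import Dict, List, Sequence

Vector = List[float]


def mean_pool(token_vectors: Sequence[Vector]) -> Vector:
    """Average the hidden-state vectors of the tokens in one entity."""
    n = len(token_vectors)
    return [sum(column) / n for column in zip(*token_vectors)]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def score_pair(
    e1_tokens: Sequence[Vector],
    e2_tokens: Sequence[Vector],
    weights: Dict[str, Vector],
    biases: Dict[str, float],
) -> Dict[str, float]:
    """Return one independent probability per relation label for the pair (e1, e2)."""
    features = mean_pool(e1_tokens) + mean_pool(e2_tokens)  # list concatenation
    return {
        label: sigmoid(sum(w * f for w, f in zip(w_vec, features)) + biases[label])
        for label, w_vec in weights.items()
    }


# Toy 2-dimensional "hidden states", made up for illustration.
experience = [[1.0, 0.0], [1.0, 0.0]]  # e.g. the tokens of "2+ years"
skill = [[0.0, 1.0]]                   # e.g. the token "Java"
weights = {
    "EXPERIENCE_IN": [2.0, 0.0, 0.0, 2.0],
    "DEGREE_IN": [-2.0, 0.0, 0.0, -2.0],
}
biases = {"EXPERIENCE_IN": 0.0, "DEGREE_IN": 0.0}

scores = score_pair(experience, skill, weights, biases)
print(scores)
```

In the real model, the token vectors come from the transformer's output and the weights are learned during fine-tuning; only the overall shape of the computation is meant to carry over.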

The pre-trained model that we are going to fine-tune is roberta-base, but you can use any pre-trained model available in the Hugging Face library by simply entering its name in the config file (see below).

In this tutorial, we are going to extract the relationship between the two entities {Experience, Skills} as Experience_in and between {Diploma, Diploma_major} as Degree_in. The goal is to extract the years of experience required in specific skills and the diploma major associated with the required diploma. You can, of course, train your own relation classifier for your own use cases such as finding the cause/effect of symptoms in health records or company acquisitions in financial documents. The possibilities are limitless…
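The schema described above can be written down as a small lookup table. This is only an illustrative sketch: the entity and relation labels are the ones used in this tutorial, while the `RELATION_SCHEMA` dictionary and the `allowed_relation` helper are hypothetical names introduced here, not part of spaCy or of the tutorial code.

```python
from typing import Dict, Optional, Tuple

# (head entity label, tail entity label) -> relation label
RELATION_SCHEMA: Dict[Tuple[str, str], str] = {
    ("EXPERIENCE", "SKILLS"): "EXPERIENCE_IN",
    ("DIPLOMA", "DIPLOMA_MAJOR"): "DEGREE_IN",
}


def allowed_relation(head_label: str, tail_label: str) -> Optional[str]:
    """Return the relation that may hold between two entity types, or None."""
    return RELATION_SCHEMA.get((head_label, tail_label))


print(allowed_relation("EXPERIENCE", "SKILLS"))
print(allowed_relation("SKILLS", "DIPLOMA"))
```

A table like this is handy when you adapt the tutorial to your own use case, since it documents in one place which entity pairs are expected to carry which relation.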

In this tutorial, we will only cover the entity relation extraction part. For fine-tuning BERT NER using spaCy 3, please refer to my previous article.

Data Annotation

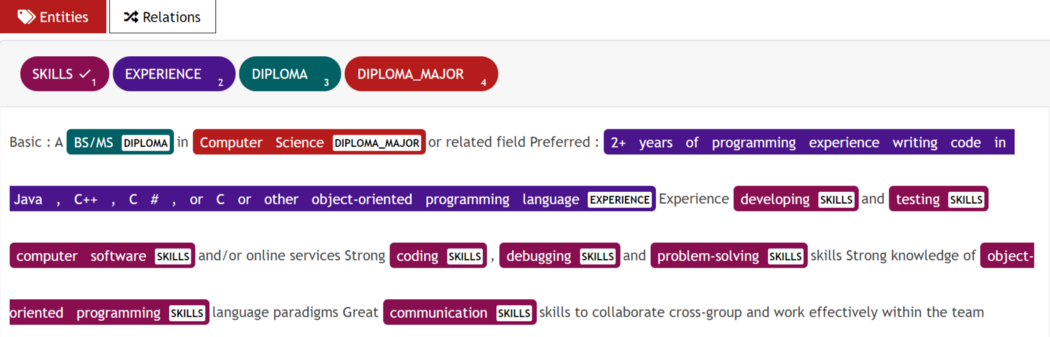

As in my previous article, we use the UBIAI text annotation tool to perform the joint entity and relation annotation because its versatile interface allows us to switch easily between entity and relation annotation.

For this tutorial, I have annotated only around 100 documents containing entities and relations. For production, we would certainly need more annotated data.

Data Preparation

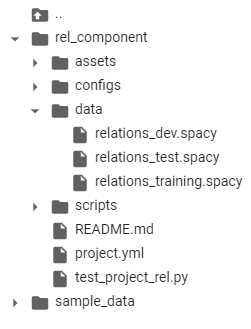

Before we train the model, we need to convert our annotated data to a binary spaCy file. We first split the annotations generated from UBIAI into training/dev/test sets and save them separately. We then modify the code provided in spaCy's tutorial repo to create the binary file for our own annotations (conversion code).

We repeat this step for the training, dev, and test datasets to generate three binary spaCy files (files available on GitHub).
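The split itself can be sketched in a few lines of plain Python. The 80/10/10 proportions, the fixed seed, and the `split_dataset` helper are assumptions made for this example; the article does not state which ratios were used.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_dataset(
    docs: Sequence[T], dev_frac: float = 0.1, test_frac: float = 0.1, seed: int = 0
) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle once with a fixed seed, then cut into train/dev/test lists."""
    shuffled = list(docs)
    random.Random(seed).shuffle(shuffled)
    n_dev = round(len(shuffled) * dev_frac)
    n_test = round(len(shuffled) * test_frac)
    dev = shuffled[:n_dev]
    test = shuffled[n_dev:n_dev + n_test]
    train = shuffled[n_dev + n_test:]
    return train, dev, test


# Stand-in for roughly 100 annotated documents exported from the annotation tool.
annotated = [f"doc_{i}" for i in range(100)]
train, dev, test = split_dataset(annotated)
print(len(train), len(dev), len(test))
```

Fixing the seed keeps the split reproducible, so the dev and test scores stay comparable when you retrain with different settings.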
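A central part of the conversion is attaching the gold relations to each document. The prediction code later in this article reads relations from a dictionary keyed by pairs of entity start offsets, each mapping to one score per label. Assuming the gold annotations are stored in that same shape, the mapping can be sketched as follows; `build_gold_relations` and its input format are hypothetical, and skipping self-pairs is a choice made for this sketch.

```python
from typing import Dict, Iterable, List, Tuple

RelMap = Dict[Tuple[int, int], Dict[str, float]]


def build_gold_relations(
    entity_starts: List[int],
    annotated: Iterable[Tuple[int, int, str]],
    labels: List[str],
) -> RelMap:
    """Map every ordered pair of distinct entities to {label: 1.0 or 0.0}."""
    rels: RelMap = {
        (s1, s2): {label: 0.0 for label in labels}
        for s1 in entity_starts
        for s2 in entity_starts
        if s1 != s2
    }
    # Mark the annotated (head start, tail start, label) triples as positive.
    for head, tail, label in annotated:
        rels[(head, tail)][label] = 1.0
    return rels


# Illustrative offsets: a DIPLOMA entity at token 64, a DIPLOMA_MAJOR at token 68.
gold = build_gold_relations(
    entity_starts=[64, 68],
    annotated=[(64, 68, "DEGREE_IN")],
    labels=["EXPERIENCE_IN", "DEGREE_IN"],
)
print(gold)
```

Every pair that was not annotated becomes an explicit negative example, which is what lets the classifier learn when no relation holds.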

Relation Extraction Model Training

For training, we will provide the entities from our golden corpus and train the classifier on these entities.

- Open a new Google Colab project and make sure to select GPU as the hardware accelerator in the notebook settings. Confirm that the GPU is enabled by running:

!nvidia-smi

- Install spacy-nightly:

!pip install -U spacy-nightly --pre

- Install the wheel package and clone spaCy's relation extraction repo:

!pip install -U pip setuptools wheel

!python -m spacy project clone tutorials/rel_component

- Install the transformer pipeline and the spacy-transformers library:

!python -m spacy download en_core_web_trf

!pip install -U spacy-transformers

- Change directory to the rel_component folder:

cd rel_component

- Create a folder named "data" inside rel_component and upload the training, dev, and test binary files into it.

- Open the project.yml file and update the training, dev, and test paths:

train_file: "data/relations_training.spacy"
dev_file: "data/relations_dev.spacy"
test_file: "data/relations_test.spacy"

- You can change the pre-trained transformer model (if you want to use a different language, for example) by opening configs/rel_trf.cfg and entering the name of the model:

[components.transformer.model]
@architectures = "spacy-transformers.TransformerModel.v1"
name = "roberta-base" # Transformer model from huggingface
tokenizer_config = {"use_fast": true}

- Before we start the training, we will decrease max_length in configs/rel_trf.cfg from the default of 100 tokens to 20 to make the model more efficient. max_length is the maximum distance between two entities above which they will not be considered for relation classification. As a result, two entities from the same document will be classified only if they are within that maximum distance (in number of tokens) of each other.

[components.relation_extractor.model.create_instance_tensor.get_instances]
@misc = "rel_instance_generator.v1"
max_length = 20

- We are finally ready to train and evaluate the relation extraction model; just run the commands below:

!spacy project run train_gpu # command to train transformers

!spacy project run evaluate # command to evaluate on test dataset
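The effect of the max_length setting from the steps above can be illustrated with a small, self-contained sketch. It assumes that the distance is measured between the start tokens of the two entities and that pairs are ordered; the `candidate_pairs` helper is a hypothetical stand-in written for this example, not the instance generator registered in the config.

```python
from typing import List, Tuple

Entity = Tuple[int, str]  # (start token index, entity text)


def candidate_pairs(entities: List[Entity], max_length: int) -> List[Tuple[Entity, Entity]]:
    """Keep ordered pairs of distinct entities whose starts are at most max_length tokens apart."""
    return [
        (e1, e2)
        for e1 in entities
        for e2 in entities
        if e1 != e2 and abs(e2[0] - e1[0]) <= max_length
    ]


entities = [
    (0, "2+ years"),
    (7, "professional software development"),
    (64, "Bachelor"),
]

near = candidate_pairs(entities, max_length=20)
far = candidate_pairs(entities, max_length=100)
print(len(near), len(far))
```

With the smaller window, the experience and the diploma that sit 64 tokens apart are never paired, so the classifier has fewer (and more plausible) candidates to score.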

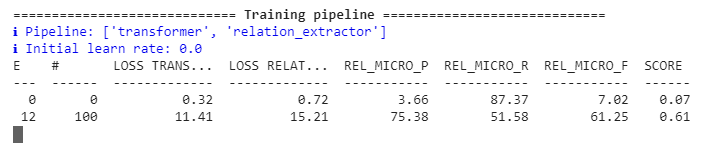

You should start seeing the P, R, and F scores being updated as training progresses.

After the model is done training, the evaluation on the test data set will immediately start and display the predicted versus golden labels. The model will be saved in a folder named “training” along with the scores of our model.

To train the non-transformer model tok2vec, run the following command instead:

!spacy project run train_cpu # command to train tok2vec

!spacy project run evaluate

We can compare the performance of the two models:

# Transformer model
"performance":{"rel_micro_p":0.8476190476,"rel_micro_r":0.9468085106,"rel_micro_f":0.8944723618,}

# Tok2vec model
"performance":{"rel_micro_p":0.8604651163,"rel_micro_r":0.7872340426,"rel_micro_f":0.8222222222,}

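The transformer-based model's recall and F-score are significantly better than those of tok2vec (0.95 vs. 0.79 recall and 0.89 vs. 0.82 F-score), although its precision is slightly lower (0.85 vs. 0.86). This demonstrates the usefulness of transformers when dealing with a low amount of annotated data.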
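The reported F-scores can be checked against the reported precision and recall, since the F-score is the harmonic mean of the two. The sketch below simply recomputes it from the numbers above; `f_score` is a helper written for this example.

```python
def f_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (F1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


reported = {
    "transformer": {"p": 0.8476190476, "r": 0.9468085106, "f": 0.8944723618},
    "tok2vec": {"p": 0.8604651163, "r": 0.7872340426, "f": 0.8222222222},
}

for name, s in reported.items():
    recomputed = f_score(s["p"], s["r"])
    print(f"{name}: recomputed F = {recomputed:.4f}, reported F = {s['f']:.4f}")
```

Because the harmonic mean is pulled toward the lower of the two values, a model with balanced precision and recall scores higher than one that trades a lot of recall for a little precision.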

Joint Entity and Relation Extraction Pipeline

Assuming that we have already trained a transformer NER model as in my previous post, we will extract entities from a job description found online (which was part of neither the training set nor the dev set) and feed them to the relation extraction model to classify the relationships.

- Install spacy transformers and transformer pipeline.

- Load the NER model and extract entities:

import spacy

nlp = spacy.load("NER Model Repo/model-best")

text = ['''2+ years of non-internship professional software development experience Programming experience with at least one modern language such as Java, C++, or C# including object-oriented design.
1+ years of experience contributing to the architecture and design (architecture, design patterns, reliability and scaling) of new and current systems.
Bachelor / MS Degree in Computer Science. Preferably a PhD in data science.
8+ years of professional experience in software development. 2+ years of experience in project management.
Experience in mentoring junior software engineers to improve their skills, and make them more effective, product software engineers.
Experience in data structures, algorithm design, complexity analysis, object-oriented design.
3+ years experience in at least one modern programming language such as Java, Scala, Python, C++, C#
Experience in professional software engineering practices & best practices for the full software development life cycle, including coding standards, code reviews, source control management, build processes, testing, and operations
Experience in communicating with users, other technical teams, and management to collect requirements, describe software product features, and technical designs.
Experience with building complex software systems that have been successfully delivered to customers
Proven ability to take a project from scoping requirements through actual launch of the project, with experience in the subsequent operation of the system in production''']

# Run the NER pipeline; `doc` is reused by the relation extraction step afterwards
for doc in nlp.pipe(text, disable=["tagger"]):
    print(f"spans: {[(e.start, e.text, e.label_) for e in doc.ents]}")

- We print the extracted entities:

spans: [(0, '2+ years', 'EXPERIENCE'), (7, 'professional software development', 'SKILLS'), (12, 'Programming', 'SKILLS'), (22, 'Java', 'SKILLS'), (24, 'C++', 'SKILLS'), (27, 'C#', 'SKILLS'), (30, 'object-oriented design', 'SKILLS'), (36, '1+ years', 'EXPERIENCE'), (41, 'contributing to the', 'SKILLS'), (46, 'design', 'SKILLS'), (48, 'architecture', 'SKILLS'), (50, 'design patterns', 'SKILLS'), (55, 'scaling', 'SKILLS'), (60, 'current systems', 'SKILLS'), (64, 'Bachelor', 'DIPLOMA'), (68, 'Computer Science', 'DIPLOMA_MAJOR'), (75, '8+ years', 'EXPERIENCE'), (82, 'software development', 'SKILLS'), (88, 'mentoring junior software engineers', 'SKILLS'), (103, 'product software engineers', 'SKILLS'), (110, 'data structures', 'SKILLS'), (113, 'algorithm design', 'SKILLS'), (116, 'complexity analysis', 'SKILLS'), (119, 'object-oriented design', 'SKILLS'), (135, 'Java', 'SKILLS'), (137, 'Scala', 'SKILLS'), (139, 'Python', 'SKILLS'), (141, 'C++', 'SKILLS'), (143, 'C#', 'SKILLS'), (148, 'professional software engineering', 'SKILLS'), (151, 'practices', 'SKILLS'), (153, 'best practices', 'SKILLS'), (158, 'software development', 'SKILLS'), (164, 'coding', 'SKILLS'), (167, 'code reviews', 'SKILLS'), (170, 'source control management', 'SKILLS'), (174, 'build processes', 'SKILLS'), (177, 'testing', 'SKILLS'), (180, 'operations', 'SKILLS'), (184, 'communicating', 'SKILLS'), (193, 'management', 'SKILLS'), (199, 'software product', 'SKILLS'), (204, 'technical designs', 'SKILLS'), (210, 'building complex software systems', 'SKILLS'), (229, 'scoping requirements', 'SKILLS')]

We have successfully extracted all the skills, number of years of experience, diploma, and diploma major from the text! Next, we load the relation extraction model and classify the relationship between the entities.

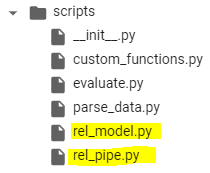

Note: Make sure to copy rel_pipe and rel_model from the scripts folder into your main folder:

import random
import typer
from pathlib import Path
import spacy
from spacy.tokens import DocBin, Doc
from spacy.training.example import Example

# Importing these modules registers the custom relation extraction component and model
from rel_pipe import make_relation_extractor, score_relations
from rel_model import create_relation_model, create_classification_layer, create_instances, create_tensors

# We load the relation extraction (REL) model
nlp2 = spacy.load("training/model-best")

# We take the entities generated from the NER pipeline and input them to the REL pipeline
for name, proc in nlp2.pipeline:
    doc = proc(doc)

# Here, we split the paragraph into sentences and apply the relation extraction for each pair of entities found in each sentence.
for value, rel_dict in doc._.rel.items():
    for sent in doc.sents:
        for e in sent.ents:
            for b in sent.ents:
                if e.start == value[0] and b.start == value[1]:
                    if rel_dict['EXPERIENCE_IN'] >= 0.9:
                        print(f" entities: {e.text, b.text} --> predicted relation: {rel_dict}")

Here we display all the entities having a relationship Experience_in with a confidence score higher than 90%:

entities: ('2+ years', 'professional software development') --> predicted relation: {'DEGREE_IN': 1.2778723e-07, 'EXPERIENCE_IN': 0.9694631}
entities: ('1+ years', 'contributing to the') --> predicted relation: {'DEGREE_IN': 1.4581254e-07, 'EXPERIENCE_IN': 0.9205434}
entities: ('1+ years', 'design') --> predicted relation: {'DEGREE_IN': 1.8895419e-07, 'EXPERIENCE_IN': 0.94121873}
entities: ('1+ years', 'architecture') --> predicted relation: {'DEGREE_IN': 1.9635708e-07, 'EXPERIENCE_IN': 0.9399484}
entities: ('1+ years', 'design patterns') --> predicted relation: {'DEGREE_IN': 1.9823732e-07, 'EXPERIENCE_IN': 0.9423302}
entities: ('1+ years', 'scaling') --> predicted relation: {'DEGREE_IN': 1.892173e-07, 'EXPERIENCE_IN': 0.96628445}
entities: ('2+ years', 'project management') --> predicted relation: {'DEGREE_IN': 5.175297e-07, 'EXPERIENCE_IN': 0.9911635}
entities: ('8+ years', 'software development') --> predicted relation: {'DEGREE_IN': 4.914319e-08, 'EXPERIENCE_IN': 0.994812}
entities: ('3+ years', 'Java') --> predicted relation: {'DEGREE_IN': 9.288566e-08, 'EXPERIENCE_IN': 0.99975795}
entities: ('3+ years', 'Scala') --> predicted relation: {'DEGREE_IN': 2.8477e-07, 'EXPERIENCE_IN': 0.99982494}
entities: ('3+ years', 'Python') --> predicted relation: {'DEGREE_IN': 3.3149718e-07, 'EXPERIENCE_IN': 0.9998517}
entities: ('3+ years', 'C++') --> predicted relation: {'DEGREE_IN': 2.2569053e-07, 'EXPERIENCE_IN': 0.99986637}

Remarkably, we were able to extract almost all the years of experience along with their respective skills correctly with no false positives or negatives!

Let's look at the entities having relationship Degree_in:

entities: ('Bachelor / MS', 'Computer Science') --> predicted relation: {'DEGREE_IN': 0.9943974, 'EXPERIENCE_IN': 1.8361954e-09}
entities: ('PhD', 'data science') --> predicted relation: {'DEGREE_IN': 0.98883855, 'EXPERIENCE_IN': 5.2092592e-09}

Again, we successfully extracted all the relationships between diploma and diploma major!
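The Experience_in and Degree_in filters differ only in the label they test, so the thresholding can be factored into one small helper. The sketch below works on a plain dictionary shaped like the relation scores printed above; `filter_relations` is a hypothetical helper, and the token-offset keys are illustrative, while the scores are copied from the output.

```python
from typing import Dict, List, Tuple

RelMap = Dict[Tuple[int, int], Dict[str, float]]


def filter_relations(
    rels: RelMap, label: str, threshold: float = 0.9
) -> List[Tuple[Tuple[int, int], float]]:
    """Return (pair, score) for every pair whose score for `label` meets the threshold."""
    hits = []
    for pair, scores in rels.items():
        score = scores.get(label, 0.0)
        if score >= threshold:
            hits.append((pair, score))
    # Highest-confidence relations first
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


rels = {
    (0, 7): {"DEGREE_IN": 1.2778723e-07, "EXPERIENCE_IN": 0.9694631},
    (64, 68): {"DEGREE_IN": 0.9943974, "EXPERIENCE_IN": 1.8361954e-09},
}

print(filter_relations(rels, "EXPERIENCE_IN"))
print(filter_relations(rels, "DEGREE_IN"))
```

The 0.9 threshold mirrors the one used in this article; lowering it trades precision for recall, so it is worth tuning on the dev set.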

This again demonstrates how easy it is to fine-tune transformer models to your own domain-specific case with a low amount of annotated data, whether it is for NER or relation extraction.

With only a hundred annotated documents, we were able to train a relation classifier with good performance. Furthermore, we can use this initial model to auto-annotate hundreds more unlabeled documents with minimal correction. This can significantly speed up the annotation process and improve model performance.

Conclusion

Transformers have truly transformed the domain of NLP and I am particularly excited about their application in information extraction. I would like to give a shoutout to Explosion AI (spaCy developers) and Hugging Face for providing open-source solutions that facilitate the adoption of transformers.

If you need data annotation for your project, don't hesitate to try out the UBIAI annotation tool. We provide numerous programmable labeling solutions (such as ML auto-annotation, regular expressions, dictionaries, etc…) to minimize hand annotation.

Lastly, check out this article to learn how to leverage the NER and relation extraction models to build knowledge graphs and extract new insights.

If you have any comments, please leave a note below!

Opinions expressed by DZone contributors are their own.
