Kubernetes Architecture Diagram

This article explains the Kubernetes architecture step by step: each component, the overall structure, what it’s used for, and how to use it.

It clicked for me just this morning. Do you know what the Kubernetes architecture diagram is? How about kubectl, microservices, serverless computing, AWS, containers, Fluentd, pods, nodes, or scaling?

Nowadays, there are plenty of computing terms you’ve probably heard at some point in life, but you might not be able to wrap your head around them all just yet.

Even if you’re familiar with all these terms, do you understand the context they’re used for?

If you understand everything I’ve mentioned so far, you may consider reading further as you’re about to learn so much more. But, if you’re not familiar with the mentioned terms, sit back and go with the flow of my words.

You’re in for a ride as I’m about to break the Kubernetes topic down into its parts and build it back up step by step, so you’ll understand the Kubernetes architecture diagram, the entire structure, what it’s used for, and how to use it.

So, let’s dive right into it!

What Is Kubernetes?

A Kubernetes cluster consists of a control plane and one or more nodes (worker machines). The node count can grow well beyond that if you run self-managed Kubernetes with tools like kubeadm or kops.

Both the control plane and the nodes can run in the cloud, as virtual machines, or even on physical devices. However, in managed Kubernetes environments like Azure AKS, GCP GKE, and AWS EKS, the cloud provider manages the control plane.

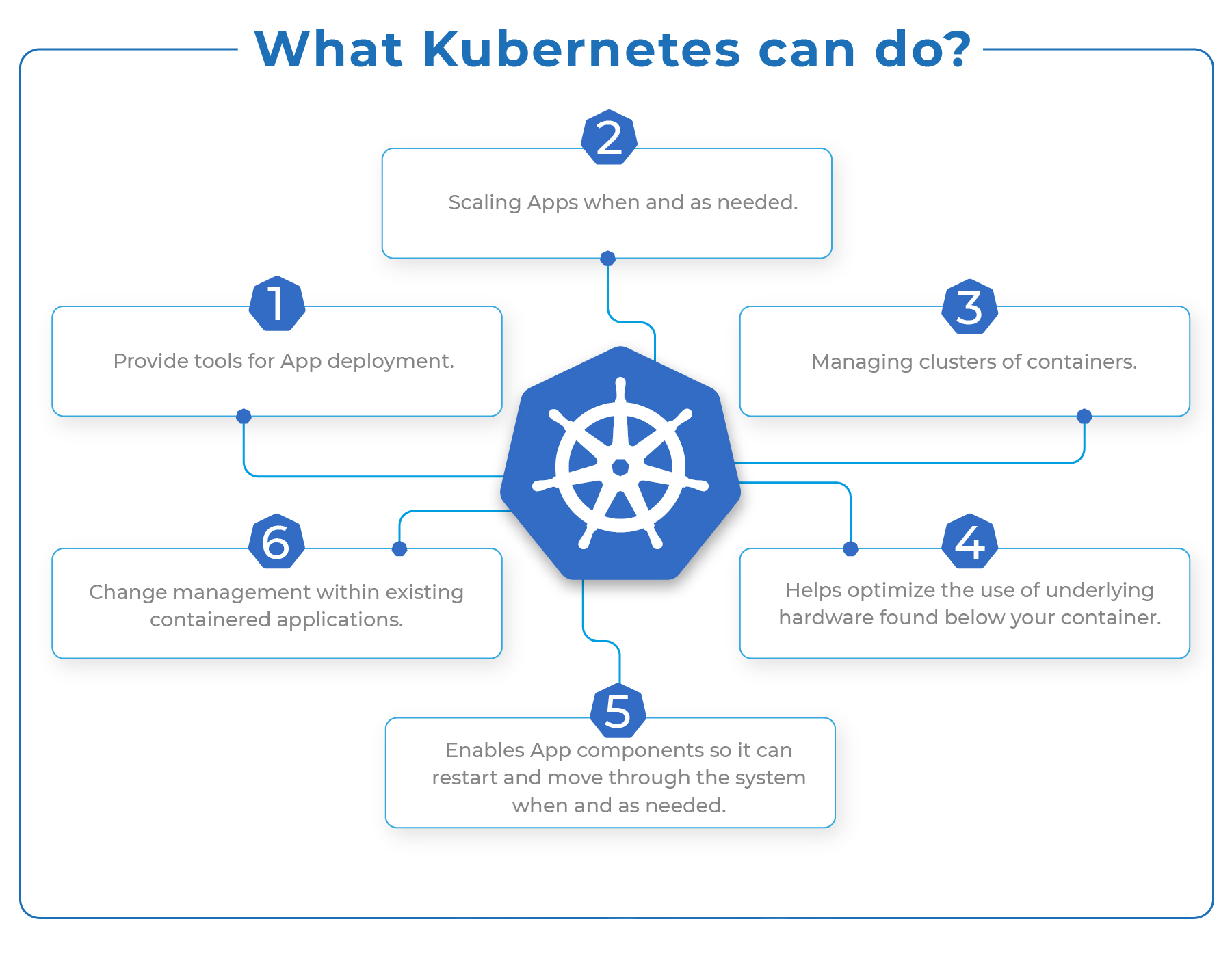

When large-scale enterprises need to run mission-critical workloads, they often turn to Kubernetes, an open-source system for container management that fits their needs well. Why is Kubernetes so great?

Besides its basic framework capabilities, Kubernetes is helpful on other fronts. It allows users to choose from various options like languages, logging and monitoring tools, type of application frameworks, along with numerous other valuable tools users may require.

Kubernetes is not a Platform as a Service (PaaS) per se, but you can use it as a complete PaaS starting base.

Ever since Kubernetes showed up in the market, it has become a pretty popular tool and one of today’s most successful open-source platforms.

Why Do We Need Kubernetes?

The main purpose of Kubernetes is to host your apps as containers in an automated fashion. This allows you to deploy as many instances of your applications as you need, but that’s not all. You can also enable easy communication between all the services in your application.

It sounds incredible; you might be thinking, “is there anything Kubernetes can’t do?” and you wouldn’t be wrong to think that way.

We need Kubernetes simply because it allows us to distribute our workloads across all available resources efficiently, but it also allows us to optimize infrastructure costs. Moreover, Kubernetes has several aces like scalability, high availability, portability, and security.

Scalability

Applications deployed on Kubernetes are typically built as microservices. Each one is composed of containers that are grouped into pods, and every container is logically designed to perform a single task.

High Availability

Almost all container orchestration engines can deliver application availability. However, Kubernetes’ high availability architecture covers both infrastructure and applications.

On the application front, Kubernetes ensures high availability using replication controllers, replica sets, and pet sets (since renamed StatefulSets). In addition, users can set the minimum number of pods that must be running at any moment.

On the infrastructure front, Kubernetes supports a wide range of storage backends. These include block storage devices like Amazon Elastic Block Store (EBS) and Google Compute Engine persistent disk.

It also supports distributed file systems like NFS and GlusterFS, as well as specialized container storage plugins like Flocker.

Portability

Kubernetes’ design offers various choices in operating systems, container runtimes, cloud platforms, PaaS, and processor architectures. In addition, you can configure a Kubernetes cluster on different Linux distributions like CentOS, Debian, Fedora, CoreOS, Ubuntu, and Red Hat Linux.

You can deploy it to run in a local or virtual environment based on KVM, libvirt, and vSphere.

Kubernetes can also run on cloud platforms like Google Cloud, Azure, and AWS. You can even create a hybrid cloud by mixing and matching clusters across cloud providers and on-premises environments.

Security

When it comes to Kubernetes security, the application architecture is securely configured at multiple levels.

Kubernetes Advantages

The main Kubernetes advantages are vast, and here’s what Kubernetes has to offer:

Automatic Bin packing

Kubernetes automatically schedules your containers onto nodes based on their resource requirements and the resources available, without sacrificing availability. It balances critical and best-effort workloads to make use of otherwise idle resources and raise overall utilization.
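To make the bin-packing idea concrete, here is a minimal Python sketch. It is not how the real scheduler is implemented; it assumes a classic first-fit-decreasing heuristic, CPU as the only resource, and hypothetical node and pod names.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_free: int  # millicores still unallocated (illustrative unit)
    pods: list = field(default_factory=list)


def first_fit_decreasing(pod_requests, nodes):
    """Place each pod on the first node with enough free CPU.

    pod_requests maps a pod name to its CPU request in millicores.
    Returns a dict of pod name -> node name (None if nothing fits).
    """
    placement = {}
    # Placing the largest requests first tends to leave fewer unusable gaps.
    for pod, cpu in sorted(pod_requests.items(), key=lambda kv: -kv[1]):
        placement[pod] = None
        for node in nodes:
            if node.cpu_free >= cpu:
                node.cpu_free -= cpu
                node.pods.append(pod)
                placement[pod] = node.name
                break
    return placement


# Hypothetical cluster: two nodes with 1000 millicores each.
nodes = [Node("node-a", 1000), Node("node-b", 1000)]
requests = {"web": 600, "api": 500, "cache": 400, "batch": 300}
placement = first_fit_decreasing(requests, nodes)
```

In this toy run, the four pods fit on two nodes with only 200 millicores left over, which is the kind of utilization gain bin packing is after.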

Load Balancing and Service Discovery

Kubernetes provides peace of mind regarding networking and communication since it automatically assigns IP addresses to containers. In addition, for a set of containers, it gives a single DNS name that will load-balance traffic within the cluster.
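As a rough illustration of one name fronting many containers, the Python sketch below models a service as a stable DNS name plus a rotating list of backend IPs. The service name, the IP addresses, and the round-robin policy are all assumptions made for the example; the balancing strategy in a real cluster depends on how it is configured.

```python
import itertools


class Service:
    """Toy stand-in for a Service: one stable name, many pod IPs."""

    def __init__(self, dns_name, pod_ips):
        self.dns_name = dns_name
        self._cycle = itertools.cycle(pod_ips)

    def route(self):
        # Round-robin: each call hands back the next backend in turn.
        return next(self._cycle)


# Hypothetical service name and pod addresses.
svc = Service(
    "checkout.default.svc.cluster.local",
    ["10.0.1.4", "10.0.2.7", "10.0.3.9"],
)
picked = [svc.route() for _ in range(4)]
```

Callers only ever need to know `svc.dns_name`; which pod answers a given request is decided behind it.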

Storage Orchestration

Kubernetes allows you to choose the storage system you want to mount. You can opt for public cloud providers like AWS or GCP, or even local storage. Moreover, you can use shared network storage systems like iSCSI, NFS, etc.

Self-Healing

Kubernetes is capable of an automatic restart of all containers that fail during execution. In addition, it will kill all containers that don’t respond to health checks previously defined by the user. Finally, if the node dies, it will reschedule and replace all failed containers in all other available nodes.

Secret and Configuration Management

Kubernetes can assist you with updates and deployment of secrets and application configuration without rebuilding your image and exposing the secrets within the stack configuration.

Batch Execution

Besides managing services, Kubernetes can handle your batch and CI workloads, replacing failed containers if need be.

Horizontal Scaling

Kubernetes lets you scale the containers up or down with a single CLI command. You can also perform scaling via the Dashboard (the Kubernetes UI).

Automatic Rollbacks and Rollouts

Kubernetes can progressively roll out updates and changes to your app or its configuration. If something goes wrong, Kubernetes can and will roll back the change.
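The idea of a progressive rollout with a rollback can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the deployment controller's actual logic: pods are just version strings, `is_healthy` is a hypothetical health check you supply, and a single failed check reverts everything.

```python
def rolling_update(replicas, old, new, is_healthy, batch=1):
    """Replace `old` pods with `new` ones, `batch` at a time.

    Returns (final_pods, rolled_back). If the new version fails its
    health check after any batch, every pod is reverted to `old`.
    """
    pods = [old] * replicas
    for start in range(0, replicas, batch):
        for i in range(start, min(start + batch, replicas)):
            pods[i] = new
        if not is_healthy(new):
            return [old] * replicas, True
    return pods, False


# A healthy release goes all the way through.
ok_pods, ok_rolled_back = rolling_update(3, "v1", "v2", lambda v: True)
# A release that fails its check is rolled back.
bad_pods, bad_rolled_back = rolling_update(3, "v1", "v2", lambda v: v != "v2")
```

Updating in small batches means that a bad release only ever touches part of the fleet before it is caught.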

These were some of the most important advantages of the Kubernetes architecture, but that’s not all Kubernetes offers. So let’s look deeper into its adoption and practical use cases.

Kubernetes and the Adoption in the Enterprises and Companies

You’d be surprised to learn that over 25,000 companies use Kubernetes. Most of them are from the USA and work in the computer software industry.

According to Enlyft, which tracks Kubernetes usage statistics over the last five years, the breakdown by company size is as follows.

Small businesses with $1 million to $10 million in revenue and 10-50 employees make up 38% of the enterprises that use Kubernetes.

Additionally, 43% of companies that use Kubernetes are mid-sized companies with 50-1000 employees. Finally, 19% of businesses using Kubernetes architecture are large companies with over 1000 employees.

Some famous companies that utilize Kubernetes in their workflow include Shopify, Google, Udemy, Slack, etc.

Why do these companies use Kubernetes? Here’s why.

Reasons Behind Running Kubernetes On-Premises

Running Kubernetes in their own data centers instead of with a public cloud provider is a major decision for enterprises. Several factors typically drive the choice of an on-premises Kubernetes strategy:

Business Policy

Every business has specific business policy requirements, like the need for running workloads at accurately specified geographical locations. If you consider the specific set of policy needs, you’ll understand why it might be difficult for a business to utilize public clouds.

In addition, companies with strict policies regarding their competitors may be unable to accept offers from certain public cloud providers.

Avoid Lock-ins

Numerous enterprises want to avoid depending on a single cloud provider, so they deploy their apps across multiple clouds, including an on-premises (private) cloud. This reduces the risk of lasting impact from any one provider’s issues.

Moreover, this gives companies the opportunity to negotiate much better prices with cloud providers.

Cost

At scale, running your apps in public clouds can be costly, and cost-efficiency is probably the most important reason for using Kubernetes on-premises.

In addition, if your apps rely on processing and ingesting large amounts of data, you can expect to pay top dollar to run them in the public cloud environment.

On the other hand, utilizing “in-house” Kubernetes will significantly reduce operational costs thanks to the existing data center.

Data Privacy and Compliance

Some organizations have specified regulations regarding data privacy and compliance issues. For example, these rules may prevent companies from serving their customers in different world regions if their services are nested in specific public clouds.

With your own data centers, you can still modernize your apps into a cloud-native format. Opting for Kubernetes on-premises can significantly transform your business: an effective strategy like this helps you save a lot of money while improving the use of your infrastructure.

Why Do You Need Containers to Work With Kubernetes?

Companies that containerize their apps have to run containers at a huge scale. They don’t use one or two containers but dozens and even hundreds to ensure high availability and load-balance the traffic.

What happens next is they have to scale up container numbers. When traffic increases every second, the ‘scale-up’ process is necessary to service the ‘n’ number of requests. Alternatively, with low demand, you should scale down container numbers.

Honestly, even though all of this can be done by hand, managing those containers takes a lot of manual effort. So the question is whether it’s worth the trouble. Will automation make your life easier and save you hours of manual labor? It undoubtedly will!

For this reason, container management tools are crucial. There are numerous well-known container orchestration and management tools available, but Kubernetes is the leader in the market. The reasons behind its popularity are its rich functionality and the fact that it originated at Google.

Therefore, a good reason for choosing Kubernetes is container auto-scaling based on traffic needs.
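To see how traffic-based auto-scaling can be reasoned about, here is a small Python sketch of a ratio rule: scale the replica count by how far the observed metric is from its target, then round up. The Kubernetes Horizontal Pod Autoscaler documentation describes a rule of this shape; the function name, the metric values, and the `min_replicas`/`max_replicas` defaults below are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """ceil(current_replicas * current_metric / target_metric), clamped."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Four replicas at 90% average CPU against a 60% target: scale up.
scale_up = desired_replicas(4, current_metric=90, target_metric=60)
# The same four replicas at 15% average CPU: scale down.
scale_down = desired_replicas(4, current_metric=15, target_metric=60)
```

The clamp matters in practice: without an upper bound, a traffic spike could request more containers than the cluster can hold.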

Kubernetes Architecture and Its Components

The components of the Kubernetes architecture are the nodes (a set of machines) and the control plane. Now, let’s get deeper into those components.

What Is the Master Node in Kubernetes?

Master Node is the starting point for all administrative tasks, and its responsibility is managing the Kubernetes cluster architecture.

It’s possible to have more than one master node within the cluster, which is what fault tolerance requires: running several master nodes puts the system in the mode known as “High Availability.”

However, one master node has the role of the main node that performs all the tasks.

Master Node Components

Kubernetes API server

- API server inside the master node is where all the administrative tasks will be performed.

- REST commands then go to the API server that will process and validate the requests.

- After a request is processed, the resulting state of the cluster is stored in the distributed key-value store.

Scheduler

- This component schedules tasks to specific worker nodes. It also keeps track of resource usage information for each worker node.

- The scheduler schedules all work in the form of Pods and Services.

- Before scheduling a task, the scheduler considers quality-of-service requirements, affinity, anti-affinity, data locality, etc.

Controller Manager

- The controller manager is a daemon that regulates the Kubernetes cluster by managing various non-terminating control loops.

- There are other things this component does, like node garbage collection, event garbage collection, cascading-deletion garbage collection. Moreover, it has lifecycle functions like namespace creation.

- In essence, a controller watches the desired state of the objects it manages and observes their current state through the API server. If the current state doesn’t match the desired state, the control loop takes corrective steps to bring the two in line.
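The control-loop idea described above can be sketched as a single reconcile pass in Python. This is a toy model, not controller-manager code: the cluster state is just a list of pod names, and `reconcile`, `next_id`, and the `pod-N` naming are hypothetical.

```python
def reconcile(desired_replicas, running_pods, next_id):
    """One pass of a control loop: compare states, then close the gap.

    Returns the new pod list and the next unused pod id.
    """
    pods = list(running_pods)
    while len(pods) < desired_replicas:   # too few: start replacements
        pods.append(f"pod-{next_id}")
        next_id += 1
    while len(pods) > desired_replicas:   # too many: stop the newest
        pods.pop()
    return pods, next_id


pods, next_id = reconcile(3, [], next_id=0)    # initial rollout
pods.remove("pod-1")                           # simulate a crashed pod
pods, next_id = reconcile(3, pods, next_id)    # the loop heals the gap
```

A real controller runs this comparison continuously, which is why the loops are called non-terminating.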

ETCD

- This component is a distributed key-value store used for storing the cluster state.

- You can configure ETCD externally or even make it a part of the Kubernetes Master.

- ETCD is written in the Go programming language. Besides the cluster state, Kubernetes uses it to store configuration details like Secrets, ConfigMaps, subnets, etc.

Kubernetes Architecture on a High Level

At a high level, the Kubernetes architecture consists of several segments: the control plane (master node), a set of cluster nodes running Kubelets, and ETCD (a distributed storage system that helps keep the cluster state consistent).

Where Does The Control Plane Go in All This?

The control plane is the system that continuously manages object states, works to match the actual state of system objects to their desired state, and responds to any changes within the cluster.

The control plane consists of three essential components: kube-scheduler, kube-apiserver, and kube-controller-manager. These components can run on a single master node or be replicated across several master nodes (high availability).

Kube-Scheduler

- This component watches for newly created Pods that don’t have an assigned node and chooses a node for them to run on.

- Scheduling decisions are based on several factors, including data locality, inter-workload interference, software/hardware/policy constraints, resource requirements (individual and collective), affinity and anti-affinity specs, and deadlines.
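A scheduling decision like the one described above can be thought of as two phases: filter out nodes that cannot run the Pod, then score the remaining ones. The Python sketch below is a simplified illustration; the dictionary fields, the node names, and the "most free CPU wins" scoring rule are assumptions, not the real kube-scheduler's data model or plugins.

```python
def schedule(pod, nodes):
    """Pick a node for `pod`: filter out unfit nodes, then score the rest."""
    feasible = [
        n for n in nodes
        if n["cpu_free"] >= pod["cpu"]
        and n["mem_free"] >= pod["mem"]
        # Every label the pod asks for must be present on the node.
        and all(n["labels"].get(k) == v
                for k, v in pod["node_selector"].items())
    ]
    if not feasible:
        return None  # no node fits, so the pod would stay unscheduled
    # Scoring rule (an assumption): prefer the node with the most free CPU.
    return max(feasible, key=lambda n: n["cpu_free"])["name"]


# Hypothetical nodes and pod.
nodes = [
    {"name": "node-a", "cpu_free": 2000, "mem_free": 4096, "labels": {"disk": "hdd"}},
    {"name": "node-b", "cpu_free": 500, "mem_free": 8192, "labels": {"disk": "ssd"}},
    {"name": "node-c", "cpu_free": 1500, "mem_free": 2048, "labels": {"disk": "ssd"}},
]
pod = {"cpu": 400, "mem": 1024, "node_selector": {"disk": "ssd"}}
chosen = schedule(pod, nodes)
```

Here `node-a` has the most free CPU but is filtered out by the label constraint, so the choice falls to the best of the remaining nodes.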

Kube-Apiserver

- The kube-apiserver component is the main implementation of the Kubernetes API server. It scales horizontally: you can run multiple instances and balance traffic between them.

- The API server exposes the Kubernetes API, and it’s the front-end component for the Kubernetes control plane.

Kube-Controller-Manager

Logically, every controller is a separate process, but to reduce complexity they are all compiled into a single binary and run as a single process.

These are some controller types:

- Node controller – notices and responds in a situation when nodes are down.

- Job controller – monitors for Job objects (one-off tasks) and creates Pods that complete the tasks.

- Endpoints controller – populates the Endpoints object (that is, it joins Services and Pods).

- Service Account and Token controllers – create default accounts and API access tokens required for new namespaces.

Worker Node Architecture

Worker Nodes run your apps in Pods, and the Master Node controls them. Pods are scheduled on a worker node, which can be a physical server or a virtual machine. So when you want to access the apps from an external environment, you’ll have to connect to these nodes.

Worker Node Components

1. Container Runtime

- A Worker Node requires a container runtime to run containers and manage their lifecycle.

- Docker is often referred to as a container runtime, but strictly speaking it’s a platform that relies on an underlying container runtime.

2. Kubelet

- Kubelet runs on every worker node and communicates with the Master Node. It obtains Pod specs via the API server, runs the containers described in them, and makes sure those containers are running and healthy.

3. cAdvisor

- cAdvisor analyzes CPU, memory, file, and network usage metrics for every container that runs on a given node. You should pair it with a good monitoring tool, as cAdvisor doesn’t offer a long-term storage solution.

- You don’t have to take specific steps to install cAdvisor as it’s integrated into the kubelet binary.

4. Quick Kubelet Workflow Diagram

A more practical solution is to present you with an illustrated diagram of Kubelet workflow so you’d understand better how it works. You’ll see a detailed but quick presentation of the Kubelet workflow below.

5. Kube-Proxy

- Kube-proxy runs on every node and handles host subnetting on each one to ensure that services are available to external parties.

- It also plays the role of a load balancer and network proxy for any service located on a worker node. In addition, Kube-proxy manages the network routing for UDP and TCP packets.

- This network proxy runs on every worker node and watches the API server for the creation and deletion of each Service endpoint.

- To reach Service endpoints, Kube-proxy sets up different routes.

Kubernetes Concepts, Tools, Deployment, and Other Important Elements

ETCD

It’s always fun to go deep under the surface of the Kubernetes architecture, and ETCD is a crucial element of it. Kubernetes stores all cluster state information in ETCD, which is the sole stateful element of the control plane.

ETCD is strongly consistent, allowing it to be the anchor coordination point. In addition, thanks to the Raft consensus algorithm, ETCD is highly available.
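Majority-based consensus algorithms like Raft stay available as long as more than half of the members can talk to each other. The short Python sketch below works out what that means for different cluster sizes; the function names are just for illustration.

```python
def quorum(members):
    """Smallest majority of a cluster with `members` voting members."""
    return members // 2 + 1


def tolerated_failures(members):
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


# cluster size -> (quorum, failures tolerated)
table = {n: (quorum(n), tolerated_failures(n)) for n in (1, 3, 4, 5)}
```

Note that a four-member cluster tolerates no more failures than a three-member one, which is why odd cluster sizes are the usual choice.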

Another excellent feature of ETCD is that it has the capability to stream changes to clients. This helps all Kubernetes cluster components to be in sync.
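The change-streaming idea can be illustrated with a toy in-memory store in Python. This is not the ETCD client API: `WatchableStore`, its methods, and the key layout are hypothetical, and the watchers here are plain callbacks invoked in the same process.

```python
class WatchableStore:
    """In-memory key-value store that notifies watchers on every write."""

    def __init__(self):
        self._data = {}
        self._watchers = []
        self.revision = 0  # increases by one with each write

    def watch(self, callback):
        self._watchers.append(callback)

    def put(self, key, value):
        self.revision += 1
        self._data[key] = value
        for notify in self._watchers:
            notify(self.revision, key, value)

    def get(self, key):
        return self._data.get(key)


store = WatchableStore()
events = []
store.watch(lambda rev, key, value: events.append((rev, key, value)))
store.put("/pods/web", "Pending")
store.put("/pods/web", "Running")
```

Because every component receives the same ordered stream of changes, none of them has to poll the store to stay in sync.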

Kubectl

This is a command-line tool for Kubernetes, and it helps you run commands. In addition, Kubectl is beneficial for managing and inspecting cluster resources, viewing logs, and application deployment.

Kubectl gives users the ability to perform any Kubernetes operation they are permitted to. From a more technical standpoint, kubectl is a client of the Kubernetes API.

Kubernetes Networking

The “IP-per-pod” model is how Kubernetes operates. This means that every pod is assigned an IP address. Moreover, containers located in a single pod will share the same IP address and network namespaces.

Kubernetes has a distinctive networking model meant for cluster-wide, pod-to-pod networking.

Usually, a CNI (Container Network Interface) plugin uses an overlay network to hide the underlying network from the pod, relying on traffic encapsulation such as VXLAN. It can also use other solutions that are fully routed. Whichever solution it uses, pods communicate over a cluster-wide pod network, and the CNI provider manages this communication.

There are no restrictions within a pod, so containers can communicate between themselves because when in a pod, containers share the same IP address and network namespace.

What does all this mean? First, it means containers’ communication is done via localhost. Secondly, communication between pods is possible thanks to the pod IP address.
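To picture the IP-per-pod model, the Python sketch below carves a cluster-wide pod network into one subnet per node and hands out pod addresses from each. The `10.244.0.0/16` range, the `/24` per node split, and the node names are assumptions for the example; real clusters leave this allocation to their CNI provider.

```python
import ipaddress
import itertools

# Assumed cluster-wide pod network, split into one /24 per node.
cluster_cidr = ipaddress.ip_network("10.244.0.0/16")
node_subnets = dict(
    zip(["node-a", "node-b"], cluster_cidr.subnets(new_prefix=24))
)


def allocate_pod_ips(node, count):
    """Hand out the first `count` usable addresses from a node's subnet."""
    hosts = node_subnets[node].hosts()
    return [str(ip) for ip in itertools.islice(hosts, count)]


ips_a = allocate_pod_ips("node-a", 2)
ips_b = allocate_pod_ips("node-b", 1)
```

Every pod ends up with its own address in one flat range, so a pod on `node-a` can reach a pod on `node-b` by IP, while containers inside one pod keep talking over localhost.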

Storage in Kubernetes

Kubernetes storage is based on the concept of volumes. In essence, a volume is a directory, possibly with some data in it, that a pod can access. The specific volume type determines how that directory comes to be, which medium backs it, and what it contains.

Any container in a pod can consume storage within that pod. Storage survives pod restarts, but what happens after the pod has been deleted depends on the storage type.

Various available options will allow you to mount block storage and file storage to a pod, and the most popular ones are cloud storage services like gcePersistentDisk and AWS EBS. Alternatively, physical storage like iSCSI, Flocker, NFS, CephFS, and glusterFS are also options to consider.

Moreover, an administrator can provide you with a PersistentVolumes (PVs) storage solution. PVs are cluster-wide objects that link further to the backing storage provider, allowing you to consume these resources. So what PV does is tie into an already existing storage resource.

A PersistentVolumeClaim is a request for storage consumption made within a namespace. Depending on usage, a PV can be in different states or phases, known as available, bound, released, and failed.
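The relationship between claims and volumes can be sketched in Python as a matching problem. This is an illustrative model only: the volume names, sizes, and the "smallest volume that fits" rule are assumptions, and real binding also takes access modes and storage classes into account.

```python
def bind_claim(claim_gib, volumes):
    """Bind a claim to the smallest Available volume that is big enough."""
    candidates = [
        v for v in volumes
        if v["phase"] == "Available" and v["capacity_gib"] >= claim_gib
    ]
    if not candidates:
        return None  # the claim stays unbound until a volume appears
    chosen = min(candidates, key=lambda v: v["capacity_gib"])
    chosen["phase"] = "Bound"
    return chosen["name"]


# Hypothetical volumes provided by an administrator.
volumes = [
    {"name": "pv-small", "capacity_gib": 5, "phase": "Available"},
    {"name": "pv-medium", "capacity_gib": 20, "phase": "Available"},
    {"name": "pv-large", "capacity_gib": 100, "phase": "Available"},
]
first = bind_claim(10, volumes)    # smallest volume that fits
second = bind_claim(10, volumes)   # pv-medium is taken, next best
third = bind_claim(500, volumes)   # nothing is large enough
```

Once a volume is bound, it is no longer offered to other claims, which is why the second identical request lands on a different volume.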

Finally, StorageClasses are an abstraction layer for differentiating the quality of the underlying storage. Operators use StorageClasses to describe different storage types, which enables dynamic provisioning of storage based on incoming claims from pods.

Kubernetes Concepts

To make practical use of the Kubernetes architecture, you must understand the abstractions it uses to represent the state of the system.

- Pod – a single application instance made up of one or several containers. A pod bundles the application containers, storage resources, a unique network ID, and the configuration that determines how the containers run.

- Service – pods are volatile. Kubernetes can’t guarantee that a given physical pod will stay alive (for example, when the replication controller terminates pods and starts new ones).

Instead, the service will display a logical set of pods, but it will also play the part of a gateway. This means that you won’t have to keep track of pods that make up the service, as pods will be able to send requests to the service.

- NameSpace – is a virtual cluster that works in environments with multiple users across numerous projects. It’s worth mentioning that one physical cluster can run several virtual clusters simultaneously.

Resource names have to be unique within a namespace, and resources in one namespace can’t access those in another. Moreover, it’s possible to allocate a resource quota to a namespace so you can avoid overconsumption of the overall resources of the physical cluster.

- Volume – in Kubernetes, the volume will apply to a whole pod. Therefore, it’ll mount on all containers located in the specified pod. Even if the container restarts, Kubernetes can guarantee that all the data will be saved. However, if the pod is killed, the volume will disappear as well. A pod can have numerous volumes of different types.

- Deployment – this concept describes the desired state of a pod or a replica set, usually in a YAML file. The deployment controller gradually updates the environment, for example by creating or deleting replicas, until the current state matches the desired state specified in the deployment file.

For example, if the YAML file defines two replicas for a pod but only one is running, the deployment controller will create another one. Keep in mind that replicas managed by a deployment shouldn’t be manipulated directly. Make changes through new deployments instead.
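To show how a Service can keep pointing at the right pods while individual pods come and go, here is a Python sketch based on label matching. Kubernetes Services select pods by labels; the `select_pods` helper, the pod names, and the labels themselves are hypothetical.

```python
def select_pods(selector, pods):
    """Return names of pods whose labels contain every selector pair."""
    return [
        p["name"] for p in pods
        if all(p["labels"].get(k) == v for k, v in selector.items())
    ]


pods = [
    {"name": "web-1", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "web-2", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "db-1", "labels": {"app": "db"}},
]
backends = select_pods({"app": "web"}, pods)

# web-2 dies and is replaced by a new pod carrying the same labels.
pods[1] = {"name": "web-3", "labels": {"app": "web", "tier": "frontend"}}
backends_after = select_pods({"app": "web"}, pods)
```

The selector never changes, so clients of the service don’t need to know that `web-2` was swapped for `web-3`.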

Kubernetes Supervisord

Creating and managing processes is what supervisord primarily does, based on the data within its configuration file. If you wonder how supervisord does this, the answer is simple – it creates subprocesses.

Supervisord manages every subprocess it creates for as long as the subprocess is alive. That’s why supervisord is referred to as the parent process of its “offspring” subprocesses.

Fluentd

Fluentd is a popular open-source data collector that you can set up on your Kubernetes nodes. Once set up, it tails container log files, filters and transforms the log data, and delivers it to an Elasticsearch cluster for indexing and storage.

- JSON Unified Logging – Fluentd structures data as JSON whenever possible. This lets it unify all aspects of processing log data: collecting, filtering, buffering, and outputting logs across several sources and destinations.

- Pluggable Architecture – the community is capable of extending functionality thanks to a flexible plugin system that Fluentd has. In addition, these plugins will connect multiple data outputs and data sources. Fluentd plugins are beneficial as they allow for much better and more straightforward log usage.

- Built-in Reliability – this data collector supports both memory-based and file-based buffering, which helps prevent inter-node data loss. It also supports robust failover, and you can set it up for high availability.

- Minimum Resources Required – this open-source collector takes up a minimal amount of your system resources as it’s written in a combination of Ruby and C language.
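The value of structuring logs as JSON is easier to see with an example. The Python sketch below is not Fluentd configuration or Fluentd code; it only mimics the idea, assuming a simple `<timestamp> <LEVEL> <message>` log layout and a hypothetical `to_record` helper that tags each record with its pod.

```python
import json
import re

# Assumed log layout: "<timestamp> <LEVEL> <message>"; real formats vary.
LINE = re.compile(r"^(?P<time>\S+) (?P<level>[A-Z]+) (?P<message>.*)$")


def to_record(line, pod):
    """Turn one raw log line into a JSON string, tagging it with its pod."""
    match = LINE.match(line)
    # Lines that don't fit the layout are kept whole rather than dropped.
    fields = match.groupdict() if match else {"message": line}
    fields["pod"] = pod
    return json.dumps(fields, sort_keys=True)


structured = to_record("2024-01-01T12:00:00Z ERROR db timeout", pod="web-1")
fallback = to_record("unparsed text", pod="web-1")
```

Once every line is a JSON record with the same field names, filtering by level or pod becomes a simple lookup for whatever system stores the logs.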

Kubernetes Deployment

A deployment provides Kubernetes with instructions for creating or modifying the pod instances that carry a containerized app. Deployments can achieve numerous goals, like rolling out updated code in a controlled manner, scaling the number of replica pods, and rolling the code back to a previous deployment version when needed.

Deployment Benefits

The most meaningful benefit that Kubernetes deployment brought to us is the automation system regarding various repetitive functions (scaling, updating in-production applications, deploying).

Additionally, the automatic launching of pod instances gives you peace of mind, since you can rest assured your instances will run as intended across all nodes within the cluster. In essence, the more automation you have, the better. You’ll also experience fewer errors while your deployments get much faster.

Kubernetes deployment can bypass nodes that went down or even replace a pod that failed, thanks to ongoing health and performance monitoring of nodes and pods. By doing so, the deployment controller can easily replace pods with the ultimate goal of ensuring seamless work for all vital applications.

Conclusion

The Kubernetes architecture is not easy to understand at first, but once you do, your life and time at work will become so much easier.

We’ve learned that Kubernetes proved to be an excellent solution for scaling, supporting diverse and decoupled stateful and stateless workloads, and providing automated rollbacks and rollouts. Still, it’s also a fantastic platform that allows you to orchestrate your applications (container-based).

Today we went through a lot of information together. I hope you now have a much better understanding of what Kubernetes is and how it works. If there’s anything you want to know that we haven’t discussed yet, contact us, and a professional from our team will help you with any questions you have.

Published at DZone with permission of Alfonso Valdes. See the original article here.
