Automating Java Application Deployment Across Multiple Cloud Regions

Was I able to deploy several geo-messenger instances across multiple cloud regions? The geo-distributed messenger application development journey continues.

Ahoy, matey!

If you want to read back over my progress up to this point, the links to the previous articles detailing my journey are at the end of this article.

"What’s good about habits?" I hear you ask.

Well, some habits are better (and more socially acceptable) than others! In terms of coding, a habit or routine can help you progress more quickly toward your end goal. It’s better to take daily baby steps than wait for that “perfect moment.”

I developed the habit of coding the geo-distributed messenger at least two days a week (strictly during business hours, as evenings are for my mischievous sons and dear wife!). So, here is the latest summary, for those of you who are following my development journey.

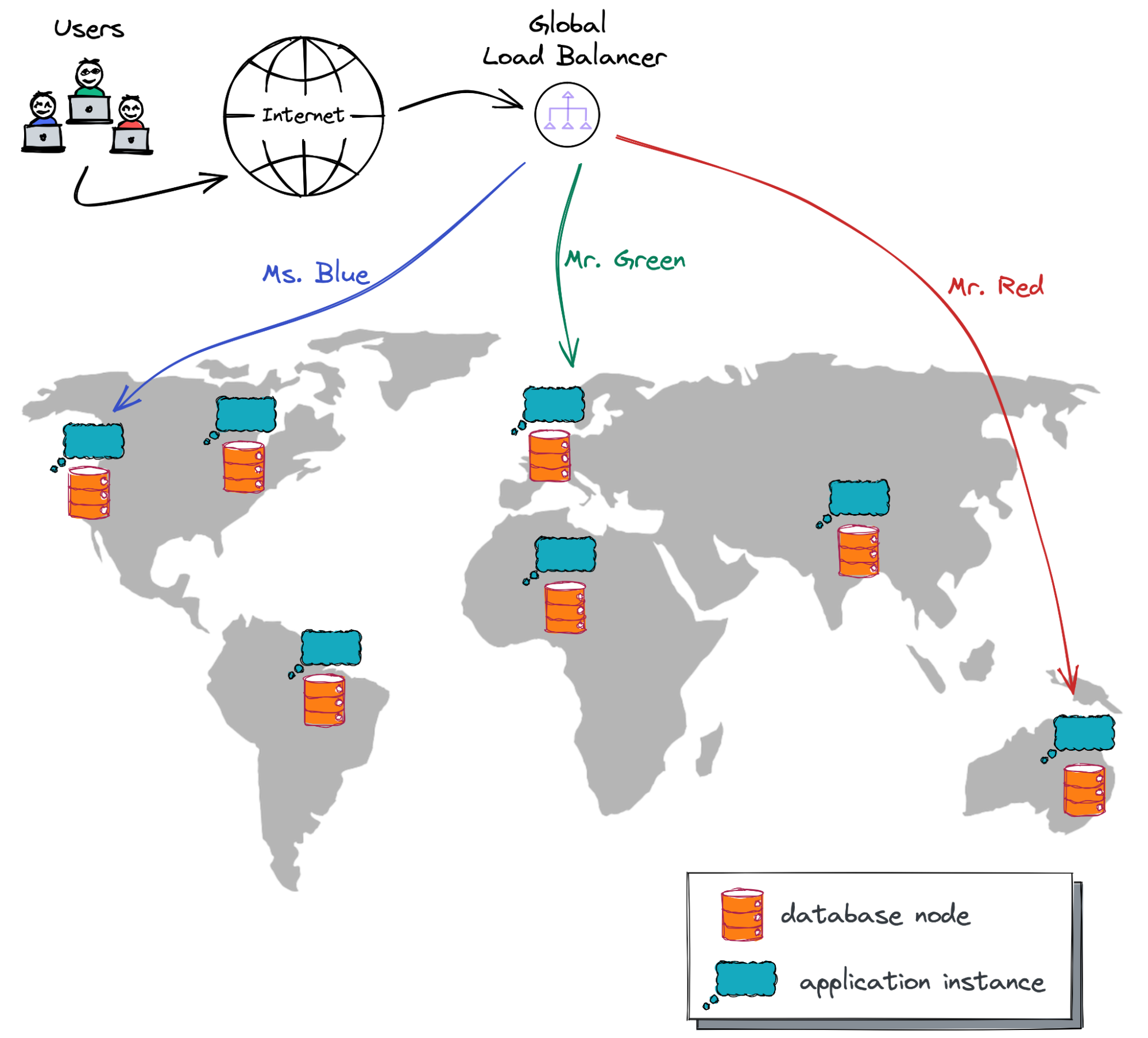

This is what my end goal looks like:

My geo-messenger has to function across the globe, storing data and serving user requests from multiple cloud regions.

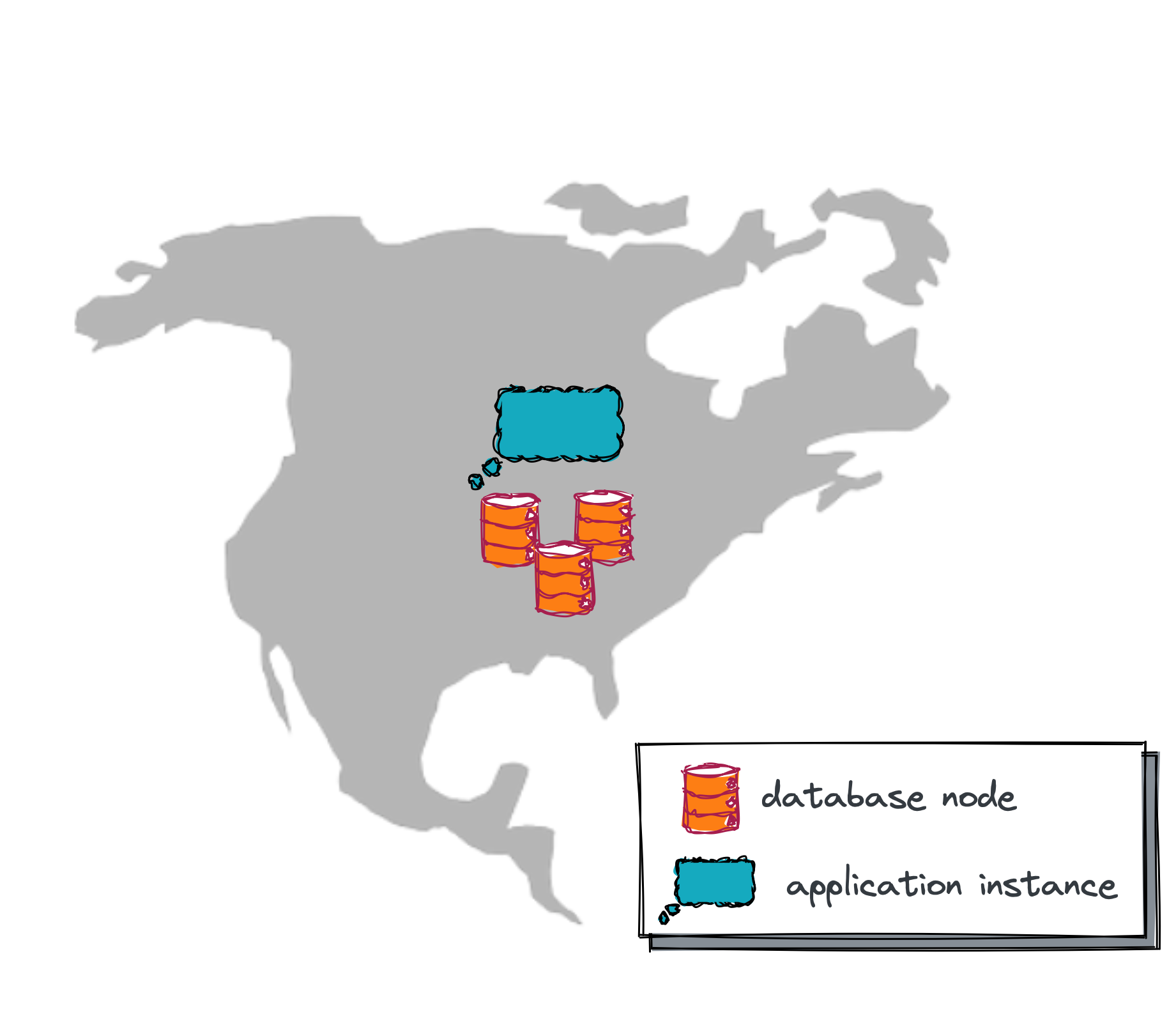

So how far have I progressed since the beginning of the project? Well, a week ago, my application looked like this:

The app ran in Heroku and used a multi-node YugabyteDB database cluster. The app instance and database were deployed in a single cloud region and could tolerate zone-level outages.

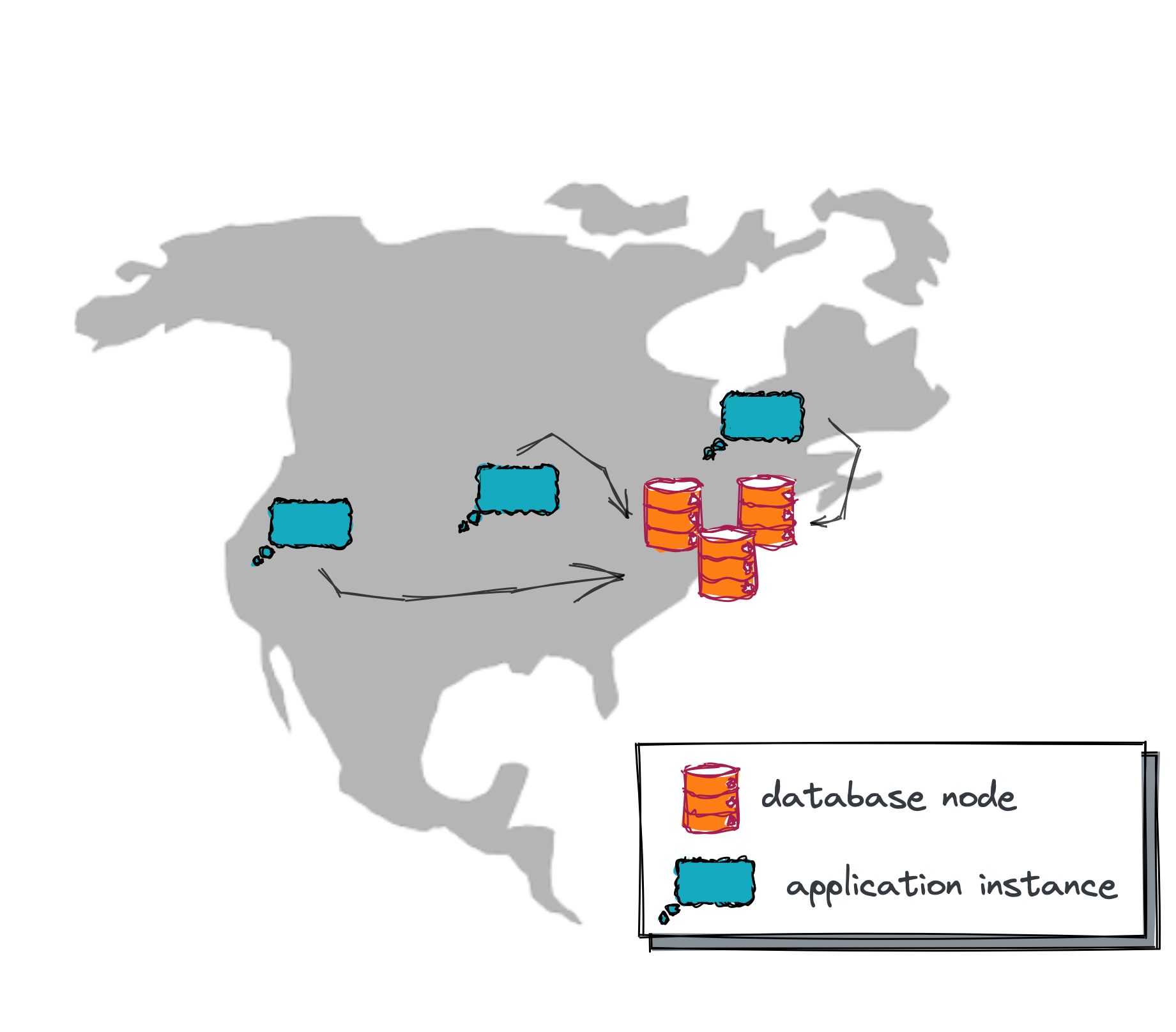

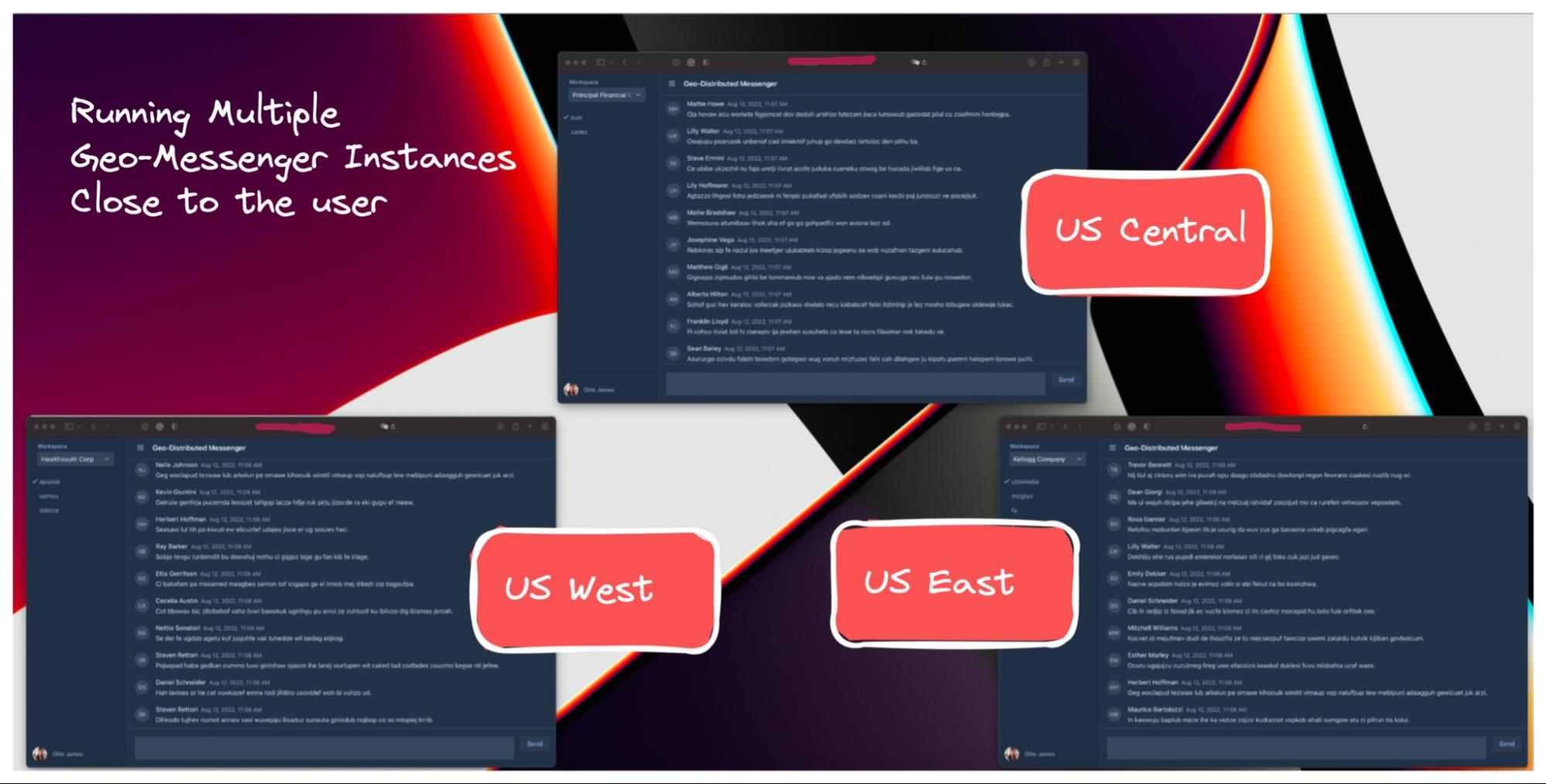

What happened a few days ago? Well, I continued taking baby steps toward my end goal and managed to deploy several geo-messenger instances across multiple cloud regions. So, now my app looks like this:

Several app instances run in the US West, Central, and East regions, and I can use any of them to serve user traffic. If a regional outage takes one instance down, the other instances stay available. The database, however, still runs in a single cloud region and remains vulnerable to region-level incidents. I’ll handle that later.

So, if you’re still with me on this journey, then, as the pirates used to say, “All Hand Hoy!” which means, “Everyone on deck!”

Let’s review the various connectivity options that I validated with my app. I’ll show you how I deploy those instances across several regions of Google Cloud. As a spoiler, I’ll give you advance notice that I don’t use the Google Cloud console (UI).

Creating Google Cloud Project

All of the resources used by my application instances are located in a dedicated Google Cloud project.

Speaking of the deployment automation approach, try to guess what I selected so far:

“Terraform?” No!

“Maybe an Ansible script?” Nope, wrong again.

“Come on, tell me that you at least deploy the app in Kubernetes!” Sorry, matey, nothing like that.

In fact, I chose gcloud CLI, which allows me to create a project, configure the network, and provision VMs via easy-to-follow commands. This is how I began to create my project for the geo-messenger:

gcloud projects create geo-distributed-messenger --name="Geo-Distributed Messenger"
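A freshly created project usually needs a little more setup before VMs can be provisioned in it. The sketch below is an assumption about what those follow-up steps might look like, not the exact commands used for the geo-messenger: it makes the project the default for the gcloud CLI and enables the Compute Engine API. The `run` helper and the `DRY_RUN` variable are hypothetical names; by default the commands are only printed. Linking a billing account is also required and is left out here.

```shell
#!/usr/bin/env bash
# Sketch only: possible follow-up steps after `gcloud projects create`.
# DRY_RUN defaults to 1, so commands are printed rather than executed.
PROJECT_ID="geo-distributed-messenger"

# Print the command in dry-run mode, execute it otherwise.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

setup_project() {
  # Make the project the default for later gcloud calls.
  run gcloud config set project "$PROJECT_ID"
  # VMs cannot be created until the Compute Engine API is enabled.
  run gcloud services enable compute.googleapis.com --project="$PROJECT_ID"
}

setup_project
```

Running the script with `DRY_RUN=0` would execute the two commands instead of printing them.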

Call me old-fashioned, but I don’t need to worry about Terraform, Ansible, and K8s yet. My priority is to get my app working across geographies in Google Cloud, so I want to go with the fastest approach. Once I reach my initial end goal, I can set another goal to support AWS, Azure, and Oracle Cloud, then use Terraform and other technologies to make the deployment cloud-agnostic.

Defining Firewall Rules

Whenever you create a new project in Google Cloud, you get the default virtual private cloud (VPC). The virtual machines (VMs) that will host my application instances will run within that VPC.

I created a firewall rule to allow SSH, HTTP, and HTTPS traffic:

gcloud compute --project=geo-distributed-messenger firewall-rules create geo-messenger-allowed-traffic \
    --direction=INGRESS --priority=1000 --network=default \
    --action=ALLOW --rules=tcp:22,tcp:80,tcp:443 \
    --source-ranges=0.0.0.0/0 --target-tags=geo-messenger-instance

The rule applies to every VM that carries the geo-messenger-instance network tag. The other parameters are self-explanatory.
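Because the rule is matched by network tag, a VM only receives this traffic once it carries the geo-messenger-instance tag. As a minimal sketch, assuming a VM that was created without the tag, the commands below attach the tag and then display the rule. The `run` helper and `DRY_RUN` variable are hypothetical, and the instance name and zone are the ones used later in this article.

```shell
#!/usr/bin/env bash
# Sketch: attach the network tag targeted by the firewall rule to a VM,
# then show the rule. Commands are only printed unless DRY_RUN=0 is set.
PROJECT_ID="geo-distributed-messenger"
TAG="geo-messenger-instance"

# Print the command in dry-run mode, execute it otherwise.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# usage: tag_instance INSTANCE_NAME ZONE
tag_instance() {
  run gcloud compute instances add-tags "$1" \
    --zone="$2" --tags="$TAG" --project="$PROJECT_ID"
}

show_rule() {
  run gcloud compute firewall-rules describe geo-messenger-allowed-traffic \
    --project="$PROJECT_ID"
}

tag_instance messenger-us-west-instance us-west2-a
show_rule
```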

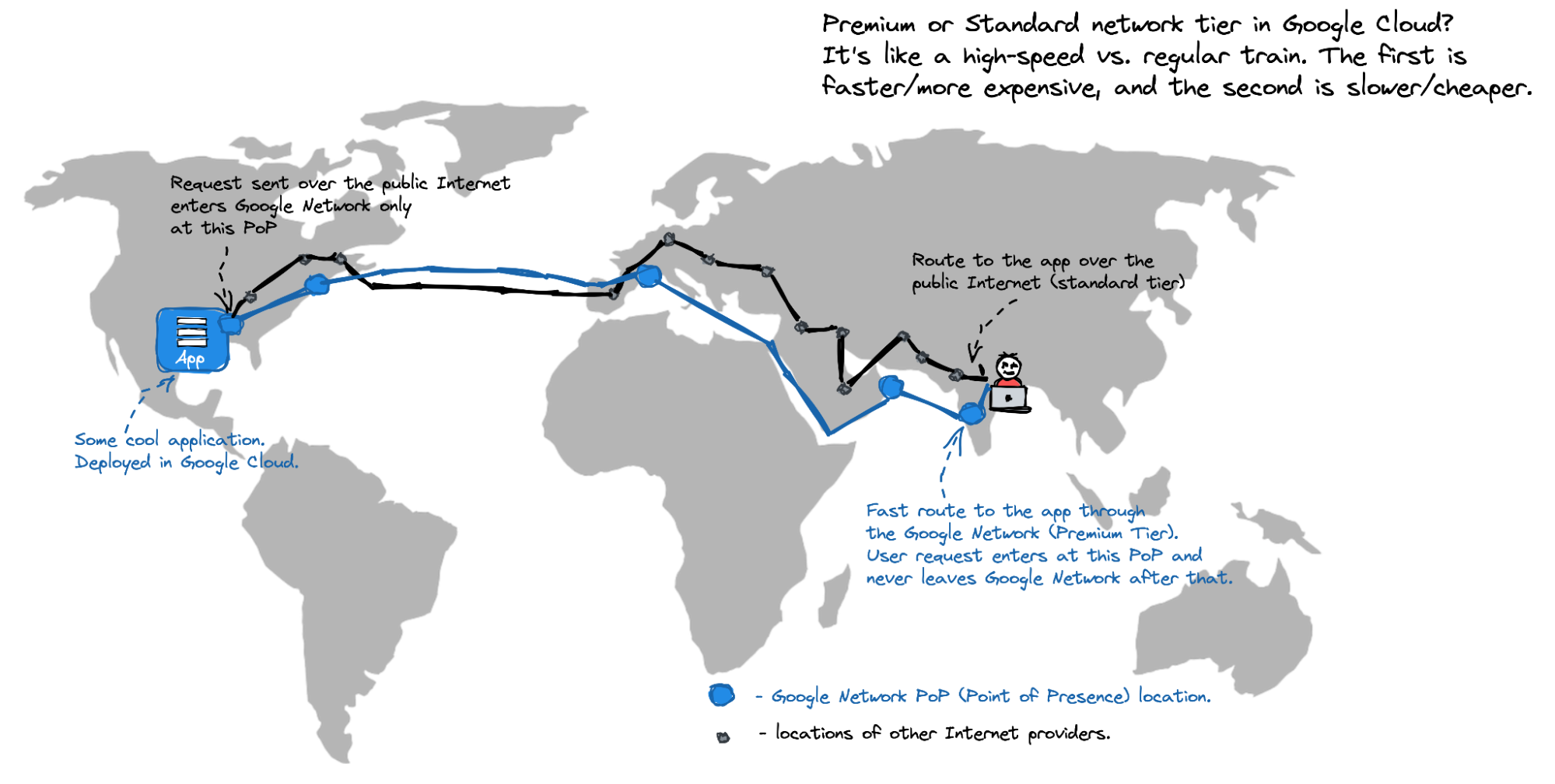

By the way, the default network service tier is Premium. How is this different from the Standard Tier? Check out this illustration:

For my multi-region app, I need the premium network so that user traffic enters the Google global network at the nearest point-of-presence (PoP) and gets to my app instance fast.
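Premium is already the default, so nothing has to change for this project. If you prefer to make the choice explicit, a sketch along these lines could pin the tier at the project level and read it back. The `run` helper and `DRY_RUN` variable are hypothetical, and the commands are only printed by default.

```shell
#!/usr/bin/env bash
# Sketch: pin the project-wide default network tier and read it back.
# Commands are only printed unless DRY_RUN=0 is set.
PROJECT_ID="geo-distributed-messenger"

# Print the command in dry-run mode, execute it otherwise.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

pin_premium_tier() {
  run gcloud compute project-info update \
    --project="$PROJECT_ID" --default-network-tier=PREMIUM
}

show_default_tier() {
  run gcloud compute project-info describe \
    --project="$PROJECT_ID" --format="value(defaultNetworkTier)"
}

pin_premium_tier
show_default_tier
```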

Deploying the First App Instance

A gcloud command for the VM provisioning comes with more parameters than the two reviewed above. So, I put together a start_single_app_instance.sh script that starts a VM:

./start_single_app_instance.sh \
    -n {INSTANCE_NAME} \
    -z {CLOUD_ZONE_NAME} \
    -a {APP_HTTP_PORT_NUMBER} \
    -c "{DATABASE_CONNECTION_ENDPOINT}" \
    -u {DATABASE_USER} \
    -p {DATABASE_PWD}

As an example, this is how I start a VM in the US West and connect it to my YugabyteDB cluster in the US East:

./start_single_app_instance.sh \
    -n messenger-us-west-instance \
    -z us-west2-a \
    -a 80 \
    -c "jdbc:postgresql://us-east1.my-instance-id.gcp.ybdb.io:5433/yugabyte?ssl=true&sslmode=require" \
    -u my-user-name \
    -p my-super-complex-password

After the VM boots, it executes the startup script, which installs the required software and launches a geo-messenger instance on port 80.
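The script itself is not listed in this article, so here is a hypothetical sketch of how a wrapper with the same flags might be put together. It parses the options with getopts and builds a `gcloud compute instances create` call. Several details are assumptions made for illustration: the e2-small machine type, the startup_script.sh file name, the APP_PORT and DB_* metadata keys, and the `DRY_RUN` switch, which defaults to printing the command.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of a start_single_app_instance.sh-style wrapper.
# Assumptions (not from the article): the e2-small machine type, the
# startup_script.sh file name, and the APP_PORT/DB_* metadata keys.
# The command is only printed unless DRY_RUN=0 is set.
PROJECT_ID="geo-distributed-messenger"

start_instance() {
  local name="" zone="" port="80" db_url="" db_user="" db_pwd="" opt
  local OPTIND=1

  # Same single-letter flags as in the invocations shown in this article.
  while getopts "n:z:a:c:u:p:" opt; do
    case "$opt" in
      n) name="$OPTARG" ;;
      z) zone="$OPTARG" ;;
      a) port="$OPTARG" ;;
      c) db_url="$OPTARG" ;;
      u) db_user="$OPTARG" ;;
      p) db_pwd="$OPTARG" ;;
      *) return 1 ;;
    esac
  done

  if [ -z "$name" ] || [ -z "$zone" ] || [ -z "$db_url" ]; then
    echo "usage: start_instance -n NAME -z ZONE -a PORT -c DB_URL -u USER -p PWD" >&2
    return 1
  fi

  # "^|^" switches gcloud's list delimiter from "," to "|"
  # (see `gcloud topic escaping`); values must not contain "|".
  set -- gcloud compute instances create "$name" \
    --project="$PROJECT_ID" \
    --zone="$zone" \
    --machine-type=e2-small \
    --network-tier=PREMIUM \
    --tags=geo-messenger-instance \
    --metadata-from-file=startup-script=startup_script.sh \
    --metadata="^|^APP_PORT=$port|DB_URL=$db_url|DB_USER=$db_user|DB_PWD=$db_pwd"

  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

start_instance -n messenger-us-west-instance -z us-west2-a -a 80 \
  -c "jdbc:postgresql://us-east1.my-instance-id.gcp.ybdb.io:5433/yugabyte?ssl=true&sslmode=require" \
  -u my-user-name -p my-super-complex-password
```

In this sketch the tag matches the firewall rule created earlier, and a startup script on the VM could read the metadata values from the instance metadata server before launching the app.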

Deploying More Instances

The previous step was the most time-consuming. I had to recall how to write bash scripts and learn more about the gcloud CLI, but once the deployment script was ready, it took only a few seconds to start app instances in other geographic locations.

I used this command to launch an instance in the US Central region:

./start_single_app_instance.sh \
    -n messenger-us-central-instance \
    -z us-central1-a \
    -a 80 \
    -c "jdbc:postgresql://us-east1.my-instance-id.gcp.ybdb.io:5433/yugabyte?ssl=true&sslmode=require" \
    -u my-user-name \
    -p my-super-complex-password

This one launched an instance in the US East region, near Washington, DC:

./start_single_app_instance.sh \
    -n messenger-us-east-instance \
    -z us-east4-a \
    -a 80 \
    -c "jdbc:postgresql://us-east1.my-instance-id.gcp.ybdb.io:5433/yugabyte?ssl=true&sslmode=require" \
    -u my-user-name \
    -p my-super-complex-password

Finally, I confirmed that every instance could serve the user traffic from port 80 using…my own hands and the browser.
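The same check can be scripted. The sketch below is one possible approach, not what was used here: for each instance it looks up the external IP with gcloud and requests the root page with curl, treating any 2xx or 3xx response as healthy. The function names, the 10-second timeout, and the choice of the root path are assumptions.

```shell
#!/usr/bin/env bash
# Sketch: check every app instance over HTTP on port 80.
# Instance names and zones are the ones deployed above; the timeout and
# the "/" path are arbitrary choices for illustration.
PROJECT_ID="geo-distributed-messenger"
INSTANCES="messenger-us-west-instance:us-west2-a
messenger-us-central-instance:us-central1-a
messenger-us-east-instance:us-east4-a"

# Print the external IP of a VM. usage: external_ip NAME ZONE
external_ip() {
  gcloud compute instances describe "$1" --zone="$2" \
    --project="$PROJECT_ID" \
    --format='get(networkInterfaces[0].accessConfigs[0].natIP)'
}

# Print the HTTP status code for port 80 ("000" if unreachable).
http_status() {
  curl -s -o /dev/null -w '%{http_code}' --max-time 10 "http://$1:80/" || true
}

# Report on all instances; returns non-zero if any of them looks unhealthy.
check_all() {
  local failed=0 entry name zone ip code
  for entry in $INSTANCES; do
    name="${entry%%:*}"
    zone="${entry##*:}"
    ip="$(external_ip "$name" "$zone")"
    code="$(http_status "$ip")"
    echo "$name ($zone) -> HTTP $code"
    case "$code" in
      2*|3*) ;;
      *) failed=1 ;;
    esac
  done
  return "$failed"
}
```

Calling `check_all` after a deployment prints one line per instance and exits with a non-zero status if any instance fails to answer.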

What’s on the Horizon?

Alright, matey! Now that I’ve got my app working across multiple cloud regions, what’s next?

In my next blog, I’ll talk about setting up the Global Cloud Load Balancer to intercept user requests at the nearest PoP (point-of-presence) and then route them to one of my application instances. I’ll also provision a multi-region YugabyteDB cluster so that my app can withstand region-level outages.

Follow me to be notified as soon as the next update is published!

Previous posts in this series are available below:
