Applications of Word Embeddings in NLP

In this post, we look at some of the practical uses of Word Embeddings (Word2Vec) and Domain Adaptation.

Word embeddings are a form of word representation that bridges the human understanding of language and that of a machine. They are distributed representations of text in an n-dimensional space, and they are essential for solving most NLP problems.

Domain adaptation is a technique that allows machine learning and transfer learning models to handle niche datasets that are all written in the same language but are still linguistically different. For example, legal documents, customer survey responses, and news articles are all distinct datasets that need to be analyzed differently. One task in the common spam-filtering problem involves adapting a model from one user (the source distribution) to a new user who receives significantly different emails (the target distribution).

The importance of word embeddings in deep learning becomes evident from the volume of research in the field. One such research effort, conducted at Google, led to the development of a group of related algorithms commonly referred to as Word2Vec.

Word2Vec, one of the most widely used forms of word embedding, is described by Wikipedia as:

"Word2vec takes as its input a large corpus of text and produces a vector space, typically of several hundred dimensions, with each unique word in the corpus being assigned a corresponding vector in the space. Word vectors are positioned in the vector space such that words that share common contexts in the corpus are located in close proximity to one another in the space."

In this post, we look at some of the practical uses of word embeddings (Word2Vec) and domain adaptation. We also look at the technical aspects of Word2Vec to get a better understanding.

Analyzing Survey Responses

Word2Vec can be used to get actionable metrics from thousands of customer reviews. Businesses often lack the time and tools to analyze survey responses and act on them. This leads to loss of ROI and brand value.

Word embeddings prove invaluable in such cases. Vector representations of words trained on (or adapted to) survey datasets can help capture the complex relationships between the responses being reviewed and the specific context in which each response was made. Machine learning algorithms can leverage this information to identify actionable insights for your business or product.

Analyzing Verbatim Comments

Machine learning, with the help of word embeddings, has made great headway in the analysis of verbatim comments. Such analyses are very important for customer-centric enterprises.

When you're analyzing text data, an important use case is analyzing verbatim comments. In such cases, the data scientist is tasked with creating an algorithm that can mine customers' comments or reviews.

Word embeddings like Word2Vec are essential for such machine learning tasks. Vector representations of words trained on customer comments and reviews can help map out the complex relations between the different verbatim comments and reviews being analyzed. Word embeddings like Word2Vec also help in figuring out the specific context in which a particular comment was made. Such algorithms prove very valuable in understanding buyer or customer sentiment towards a particular business or social forum.

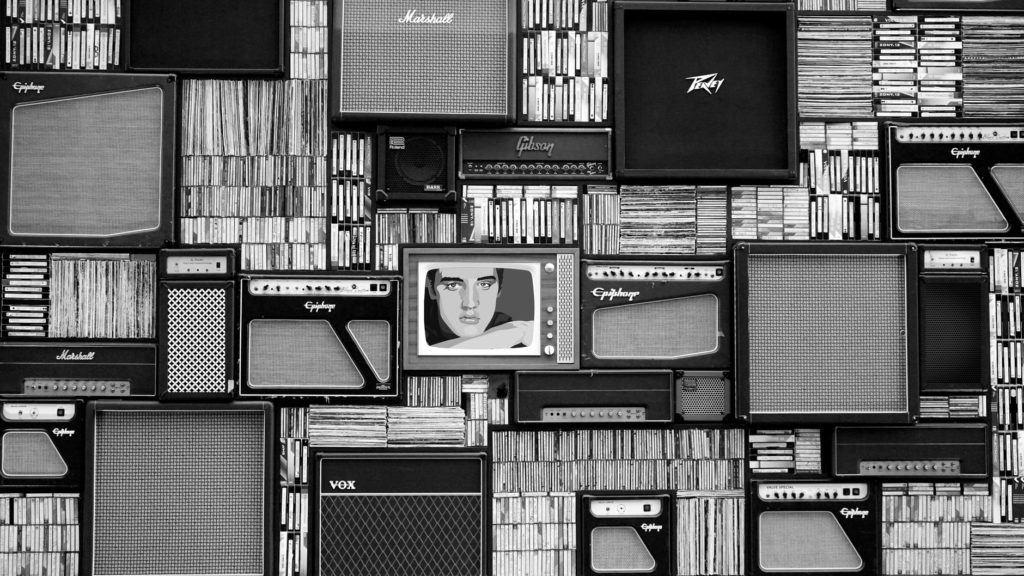

Music/Video Recommendation System

The way we experience content has been revolutionized by streaming services available across the internet. In the past, recommendations focused on presenting you with content for future use. Modern streaming platforms instead focus on recommending content that can and will be enjoyed in the moment. Streaming models bring new methods of discovery in the form of personalized radio and recommended playlists. The focus here is on generating sequences of songs that gel. To enhance the user experience, the recommendation model should capture not only what songs similar people are generally interested in, but also what songs are frequently listened to together in very similar contexts.

Such models make use of Word2Vec. The algorithm interprets a user's listening queue as a sentence, with each song treated as a word in that sentence. Training a Word2Vec model on such a dataset encodes the assumption that the songs a user has listened to in the past and the song they are listening to now belong to the same context. Word2Vec then represents each song as a vector of coordinates that captures the context in which the song or video is played.
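To make the "queue as a sentence" idea concrete, here is a minimal sketch of how a skip-gram style model would see listening data: every song is paired with the songs played just before and after it. The queues, the song IDs, and the `skipgram_pairs` helper are hypothetical and exist only for illustration; a real system would feed such sequences to a Word2Vec implementation rather than count pairs by hand.

```python
from collections import Counter


def skipgram_pairs(queue, window=2):
    """Yield (target, context) song pairs from one listening queue."""
    for i, target in enumerate(queue):
        start = max(0, i - window)
        end = min(len(queue), i + window + 1)
        for j in range(start, end):
            if j != i:
                yield target, queue[j]


# Hypothetical listening queues: each queue plays the role of a sentence,
# each song ID plays the role of a word.
queues = [
    ["song_a", "song_b", "song_c"],
    ["song_b", "song_c", "song_d"],
]

# Count how often each (target, context) pair occurs across all queues.
pair_counts = Counter(
    pair for queue in queues for pair in skipgram_pairs(queue, window=1)
)
```

Songs that are often played next to each other produce many shared training pairs, which is what pushes their vectors close together during training.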

For those of you who want to delve into the technical aspects of how Word2Vec works, here is how ParallelDots's in-house experts view the subject.

Technical Aspects of Word Embeddings

A common practice in NLP is the use of pre-trained vector representations of words, also known as embeddings, for all sorts of downstream tasks. Intuitively, these word embeddings represent implicit relationships between words that are useful when training on data that can benefit from contextual information.

Consider the example of the Word2Vec skip-gram model by Mikolov et al., one of the two most popular methods of training word embeddings (the other being GloVe). The authors pose an analogical reasoning problem that amounts to asking: "Germany is to Berlin as France is to ___?" When you treat each of these words as a vector, the answer to the problem is given by the formula

vec("Berlin") - vec("Germany") = x - vec("France")

That is, the difference between the two vectors in each pair must be equal. Therefore,

x = vec("Berlin") - vec("Germany") + vec("France")

Provided the vector representations are learned well, the required word is the one whose vector lies closest to the point obtained. Another implication is that words with similar semantic and/or syntactic roles will cluster together.
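The analogy arithmetic can be sketched in a few lines of Python. The two-dimensional vectors below are hand-made for illustration only (real Word2Vec vectors are learned from a corpus and typically have hundreds of dimensions), and the `analogy` helper and the use of cosine similarity as the closeness measure are assumptions of this sketch.

```python
import math

# Hand-made toy vectors, chosen only so the arithmetic is easy to follow.
toy_vectors = {
    "germany": [1.0, 0.0],
    "berlin": [1.0, 1.0],
    "france": [2.0, 0.0],
    "paris": [2.0, 1.0],
    "tokyo": [0.5, 2.0],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(a, b, c, vectors):
    """Answer 'a is to b as c is to ?' via x = vec(b) - vec(a) + vec(c)."""
    x = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    # Exclude the query words themselves, then pick the closest remaining word.
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], x))


answer = analogy("germany", "berlin", "france", toy_vectors)
```

With these toy vectors, `x` lands exactly on the vector for "paris", so `answer` is `"paris"`. With learned embeddings the point rarely coincides with a word vector, which is why the nearest neighbor is taken.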

Retrofitting

While general-purpose datasets often benefit from the use of these pre-trained word embeddings, the representations may not always transfer well to specialized domains. This is because the embeddings have been trained on massive text corpora drawn from Wikipedia and similar sources.

For example, the word Python means one thing in an everyday context, but it means something else entirely in the context of computer programming. These differences become even more relevant when you are building models to analyze context-critical data, such as medical and legal notes.

One solution is to simply train the GloVe or skip-gram models on the domain-specific datasets, but in many cases sufficiently large datasets are not readily available to obtain practically meaningful representations.

The goal of retrofitting is to take readily available pre-trained word vectors and adapt them to your new domain data. The resulting word representations are arguably more context-aware than the pre-trained word embeddings.
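The text above does not spell out a specific retrofitting procedure. As a rough illustration, here is a minimal sketch of one well-known formulation, the iterative averaging update from Faruqui et al.'s work on retrofitting word vectors, which pulls each vector toward its neighbors in a graph of related words while keeping it close to its pre-trained value. In that work the graph comes from a semantic lexicon; here the `neighbors` graph is a hypothetical stand-in for word relations mined from domain data, and the `retrofit` helper, its parameters, and the toy vectors are all assumptions.

```python
def retrofit(pretrained, neighbors, iterations=10, alpha=1.0):
    """Nudge each word vector toward its neighbors' vectors.

    pretrained: dict mapping word -> list of floats (left unmodified).
    neighbors:  dict mapping word -> list of related words (hypothetical,
                e.g. derived from domain-specific data).
    alpha:      weight that keeps a word near its pre-trained vector.
    """
    vectors = {word: list(vec) for word, vec in pretrained.items()}
    for _ in range(iterations):
        for word, related in neighbors.items():
            if word not in vectors:
                continue
            related = [n for n in related if n in vectors]
            if not related:
                continue
            beta = 1.0 / len(related)  # equal weight per neighbor
            updated = [alpha * x for x in pretrained[word]]
            for n in related:
                for d, x in enumerate(vectors[n]):
                    updated[d] += beta * x
            total = alpha + beta * len(related)
            vectors[word] = [x / total for x in updated]
    return vectors


# Toy 2-D vectors: in a programming corpus, "python" should sit nearer "java".
pretrained = {"python": [0.0, 1.0], "java": [1.0, 0.0], "snake": [0.0, 2.0]}
domain_neighbors = {"python": ["java"]}
adapted = retrofit(pretrained, domain_neighbors)
```

In this toy setup the adapted vector for "python" moves halfway toward "java", while words without domain neighbors keep their pre-trained vectors.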

Published at DZone with permission of Shashank Gupta, DZone MVB. See the original article here.
